Web scraping in PHP means fetching web pages over HTTP and extracting structured data from their HTML using DOM parsing libraries. PHP handles this well: it has a built-in cURL extension, mature libraries like Guzzle and Symfony DomCrawler, and deploys easily on most servers.

PHP is less popular for scraping than Python, but PHP 8.3+ supports concurrent I/O (Fibers have been in the language since 8.1, and Guzzle offers promise-based requests), has proper typing, and runs anywhere Composer works. If you already use PHP, you can build scrapers without switching languages.

This guide covers raw HTTP fetching, headless browser automation, and commercial API integration. It starts with native cURL for simple requests, then moves to Guzzle for async and session work, DomCrawler for HTML parsing, and Symfony Panther for JavaScript rendering. Each section shows working code you can adapt.

Best PHP Libraries for Web Scraping in 2026

PHP has libraries for every scraping task. Here are the ones that work in production.

| Library | Category | Install | Best For | Skip When |
|---|---|---|---|---|
| cURL (native) | HTTP client | Built-in | Simple scripts, no Composer | Concurrent requests needed |
| Guzzle 7.x | HTTP client | composer require guzzlehttp/guzzle | Async, middleware, production | Quick one-off scripts |
| Symfony HTTP Client | HTTP client | Included in Symfony | HTTP/2, Symfony projects | No Symfony in the stack |
| Requests for PHP | HTTP client | composer require rmccue/requests | Lightweight scripts | Middleware or async needed |
| Symfony DomCrawler | HTML parser | composer require symfony/dom-crawler | CSS selectors, XPath, production | Speed is the only concern |
| DiDOM | HTML parser | composer require imangazaliev/didom | Large documents, fast parsing | Need XPath power features |
| voku/simple_html_dom | HTML parser | composer require voku/simple_html_dom | Simple scripts, familiar API | High-volume loops |
| RoachPHP | Framework | composer require roach-php/core | Pipelines, Laravel projects | Single-page scraping |
| Spatie Crawler | Framework | composer require spatie/crawler | Recursive crawling, robots.txt | Not crawling whole sites |
| Symfony Panther | Headless browser | composer require symfony/panther | JS rendering, full interactions | Static HTML pages |
| Spatie Browsershot | Headless browser | composer require spatie/browsershot | Screenshots, HTML snapshots | No Node.js on server |
| chrome-php/chrome | Headless browser | composer require chrome-php/chrome | DevTools Protocol, no Node.js | Multiple concurrent browsers |
| php-webdriver | Headless browser | composer require php-webdriver/webdriver | Manual Selenium control | Simple wait-and-extract |

Pick one HTTP client and one parser, and add a headless browser only when the site needs it. That covers most scraping work.

HTTP Clients

Guzzle remains the standard. It handles async requests, connection pooling, and middleware. Use versions 7.4.5+ to avoid CVE-2022-31042 (header leaks on redirects).

Symfony HTTP Client works well if you already use Symfony components. Optimized for HTTP/2 multiplexing, which means multiple requests over one TCP connection.

Requests for PHP has a simple API similar to Python’s requests library. Used in WordPress core. Good for lightweight scripts.

cURL is PHP’s built-in HTTP extension. No install required. Every server running PHP almost certainly has it. Good for simple fetch scripts where adding a library is not worth the overhead.

HTML Parsing

Symfony DomCrawler handles CSS selectors and XPath. Works with valid or broken HTML. Pair it with symfony/css-selector for CSS queries.

composer require symfony/dom-crawler symfony/css-selector

DiDOM parses faster than Simple HTML DOM on large documents. Uses DOMDocument under the hood with a jQuery-like API. Actively maintained.

voku/simple_html_dom is a maintained fork of the original Simple HTML DOM. The original parser had memory leaks and stopped updates in 2019. This fork fixes those issues and supports PHP 8+.

Crawling Frameworks

RoachPHP brings Scrapy architecture to PHP. Spiders, item pipelines, and middleware for data extraction workflows. Laravel adapter available. Requires PHP 8.2+.

Spatie Crawler does recursive site crawling with Guzzle async requests. Respects robots.txt, handles delays, filters URLs. PHP 8.4+ required.

Headless Browsers

Symfony Panther controls Chrome or Firefox via WebDriver. Renders JavaScript, executes complex scenarios. Heavy on resources but handles single-page apps.

Spatie Browsershot wraps Puppeteer for screenshots and HTML after JS execution. Requires Node.js and Puppeteer installed. Faster than Panther for static HTML snapshots.

php-webdriver gives low-level control over Selenium WebDriver. Use when you need manual control over every browser action.

chrome-php/chrome talks directly to Chrome via DevTools Protocol. No Node.js required. Good middle ground between Panther and Browsershot.

Libraries to Avoid

Goutte (deprecated 2023). The creator moved functionality into Symfony BrowserKit. Migrate to Symfony\Component\BrowserKit\HttpBrowser for the same API with active support.

Simple HTML DOM Parser (original). Memory leaks in continuous loops. The parser holds circular references that PHP garbage collection can’t clear. Use the voku fork instead.

PHPScraper by theultrasoft (archived 2023). Problems with modern JS and CSS.

PHP-Spider (mvdbos). Last commits in 2022. Use Spatie Crawler or RoachPHP.

cURL for PHP Scraping

cURL ships with PHP. If PHP is already running on the server, cURL is almost certainly available too. For scraping it covers the essentials. It fetches pages, submits login forms, and keeps session cookies alive between requests. For quick one-off scripts, it is often the fastest way to get something working.

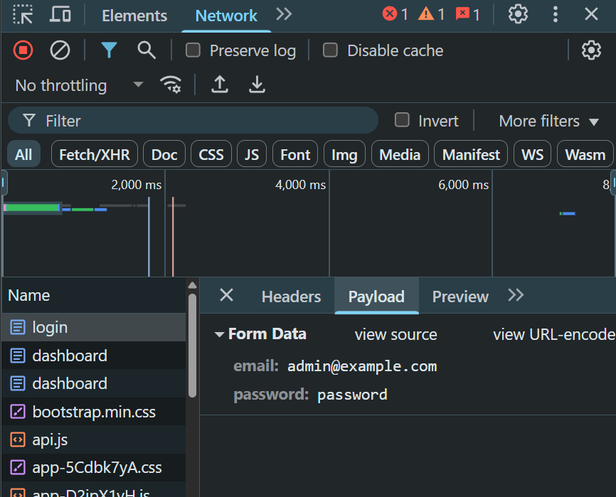

Our target is a test site designed for scraping practice. Its login form sends an email and a password in a POST request. No CSRF tokens, no JavaScript challenges. Open DevTools and watch the Network tab during login to see just two fields being sent.

Fetching a Page

The simplest scraping request is a GET with browser-like headers. Without headers most sites respond differently to cURL than to a real browser, sometimes returning a stripped version of the page or blocking the request entirely.

<?php

$ch = curl_init('https://www.scrapingcourse.com/dashboard');

curl_setopt_array($ch, [

CURLOPT_RETURNTRANSFER => true,

CURLOPT_FOLLOWLOCATION => true,

CURLOPT_TIMEOUT => 15,

CURLOPT_HTTPHEADER => [

'User-Agent: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/146.0.0.0 Safari/537.36',

'Accept-Language: en-US,en;q=0.9',

],

]);

$html = curl_exec($ch);

$error = curl_error($ch);

curl_close($ch);

if ($error) {

echo "Failed: {$error}\n";

exit(1);

}

echo strlen($html) . " bytes\n";

CURLOPT_RETURNTRANSFER is the setting people miss most often. Without it cURL prints the response body straight to stdout and returns true. Set it and the response comes back as a string.
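One thing the snippet above does not check is the HTTP status: curl_error() only reports transport failures such as DNS errors or timeouts, so a 403 or 500 response still looks like success. The following is a minimal sketch of a wrapper that returns the status code alongside the body. The names fetchPage and isRetryableStatus are hypothetical, and treating 429 and 5xx as retryable is an assumption, not a rule.

```php
<?php

/**
 * Hypothetical helper: decides whether a status code is worth retrying later.
 * The chosen codes (429 and 5xx) are an assumption for this sketch.
 */
function isRetryableStatus(int $status): bool
{
    return $status === 429 || ($status >= 500 && $status <= 599);
}

/**
 * Hypothetical helper: fetches a URL and returns the status code with the body.
 *
 * @return array{status: int, body: string}
 */
function fetchPage(string $url): array
{
    $ch = curl_init($url);
    curl_setopt_array($ch, [
        CURLOPT_RETURNTRANSFER => true,
        CURLOPT_FOLLOWLOCATION => true,
        CURLOPT_TIMEOUT        => 15,
    ]);

    $body   = curl_exec($ch);
    $error  = curl_error($ch);
    // Status of the final response after any redirects were followed
    $status = (int) curl_getinfo($ch, CURLINFO_RESPONSE_CODE);

    if ($body === false) {
        // Transport-level failure: no HTTP response was received at all
        throw new RuntimeException("Transport error: {$error}");
    }

    return ['status' => $status, 'body' => $body];
}
```

A caller would typically branch on the result, for example skipping the parse step when the status is not 200 and scheduling another attempt when isRetryableStatus() returns true.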

Logging In

Most login forms send credentials as a POST with URL-encoded fields.

<?php

$cookieFile = '/tmp/session.txt';

$ch = curl_init('https://www.scrapingcourse.com/login');

curl_setopt_array($ch, [

CURLOPT_RETURNTRANSFER => true,

CURLOPT_POST => true,

CURLOPT_POSTFIELDS => http_build_query([

'email' => getenv('SCRAPER_EMAIL'),

'password' => getenv('SCRAPER_PASSWORD'),

]),

CURLOPT_COOKIEJAR => $cookieFile,

CURLOPT_COOKIEFILE => $cookieFile,

]);

curl_exec($ch);

curl_close($ch);

CURLOPT_COOKIEJAR writes the cookies the server sends back into a file. CURLOPT_COOKIEFILE reads from that file and attaches the cookies to outgoing requests. Set both and subsequent requests carry the session automatically.

Scraping Behind a Login

After login the session lives in the cookie file. Pass that file to every request that needs authentication and cURL handles the rest.

<?php

$cookieFile = '/tmp/session.txt'; // same file from the login step

$ch = curl_init('https://www.scrapingcourse.com/dashboard');

curl_setopt_array($ch, [

CURLOPT_RETURNTRANSFER => true,

CURLOPT_COOKIEFILE => $cookieFile,

CURLOPT_COOKIEJAR => $cookieFile,

]);

$html = curl_exec($ch);

$error = curl_error($ch);

curl_close($ch);

if ($error) {

echo "Dashboard request failed: {$error}\n";

exit(1);

}

// parse $html here

The cookie file persists between script runs as long as the server session stays alive. Only run the login step again when the file is missing or the session has expired.
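The "missing or expired" decision can be approximated locally before any request is sent. The sketch below is one way to do it, assuming a hypothetical helper named needsLogin and an assumed session lifetime of 20 minutes. cURL normally rewrites the jar file when the handle is released, so the file's modification time is used here as a rough stand-in for the time of the last request.

```php
<?php

/**
 * Hypothetical helper: returns true when the login step should run again.
 * $maxAgeSeconds is an assumed session lifetime; the real value depends on the site.
 */
function needsLogin(string $cookieFile, int $maxAgeSeconds = 1200): bool
{
    // PHP caches stat() results; clear them so long-running scripts see fresh values
    clearstatcache(true, $cookieFile);

    if (!is_file($cookieFile) || filesize($cookieFile) === 0) {
        return true; // no cookies saved yet
    }

    $age = time() - filemtime($cookieFile);

    return $age > $maxAgeSeconds; // cookies are probably stale
}
```

This is only a heuristic. The server can still invalidate a session early, so a check on the response itself (for example, detecting a redirect to the login page) remains the authoritative signal.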

cURL vs Guzzle

cURL handles sequential scraping well. When the script needs multiple requests running at the same time, Guzzle becomes the better choice.

| Situation | Use |
|---|---|
| Quick script, no Composer | cURL |
| Need to control every byte of the request | cURL |
| cURL extension already in use | cURL |
| Working in an existing Guzzle project | Guzzle |
| Need async or concurrent requests | Guzzle |
| Need middleware or retry logic | Guzzle |

Guzzle runs on top of cURL anyway, so nothing gets replaced. It just adds async support, a cleaner API, and a middleware chain on top of what cURL already does.
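For a sense of what Guzzle's async layer saves you from writing, here is a rough sketch of concurrent fetching with the native curl_multi functions. The helper name fetchAll is hypothetical, duplicate URLs are not handled (results are keyed by URL), and error handling is reduced to returning null for failed transfers.

```php
<?php

/**
 * Hypothetical helper: fetches several URLs concurrently with curl_multi.
 *
 * @param  string[] $urls
 * @return array<string, string|null> body per URL, null where the transfer failed
 */
function fetchAll(array $urls, int $timeout = 15): array
{
    if ($urls === []) {
        return [];
    }

    $multi = curl_multi_init();

    foreach ($urls as $url) {
        $ch = curl_init($url);
        curl_setopt_array($ch, [
            CURLOPT_RETURNTRANSFER => true,
            CURLOPT_FOLLOWLOCATION => true,
            CURLOPT_TIMEOUT        => $timeout,
            CURLOPT_PRIVATE        => $url, // remember which URL this handle belongs to
        ]);
        curl_multi_add_handle($multi, $ch);
    }

    // Drive all transfers until none is still running
    do {
        $status = curl_multi_exec($multi, $running);
        if ($running > 0) {
            curl_multi_select($multi, 1.0); // wait for socket activity
        }
    } while ($running > 0 && $status === CURLM_OK);

    // Collect one completion message per finished transfer
    $results = [];
    while ($info = curl_multi_info_read($multi)) {
        $ch  = $info['handle'];
        $url = curl_getinfo($ch, CURLINFO_PRIVATE);
        $results[$url] = $info['result'] === CURLE_OK ? curl_multi_getcontent($ch) : null;
        curl_multi_remove_handle($multi, $ch);
    }
    curl_multi_close($multi);

    return $results;
}
```

It works, but there is no concurrency limit, no retry logic, and no per-request callback, which is the gap Guzzle's promises and pools fill.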

HTML Fetching and Session Management

Guzzle builds on cURL and adds a cookie jar, middleware, and async support. Install it first:

composer require guzzlehttp/guzzle

To see the difference, the target here is the same dashboard that requires login and session persistence across requests.

Single Request Login

The obvious approach sends credentials in one POST request.

<?php

use GuzzleHttp\Client;

$client = new Client();

$response = $client->request('POST', 'https://www.scrapingcourse.com/login', [

'form_params' => [

'email' => getenv('SCRAPER_EMAIL'),

'password' => getenv('SCRAPER_PASSWORD')

]

]);

echo $response->getStatusCode(); // 200

This logs you in, but the session cookie disappears immediately. Try accessing the dashboard next:

$response = $client->request('GET', 'https://www.scrapingcourse.com/dashboard');

// Redirects to login page - no active session

The server sends a session cookie in the login response, but Guzzle doesn’t store it anywhere. Each request starts fresh with no authentication.

Cookie Jar for Session Persistence

A cookie jar captures and reuses session cookies automatically across requests.

<?php

use GuzzleHttp\Client;

use GuzzleHttp\Cookie\CookieJar;

$jar = new CookieJar();

$client = new Client(['cookies' => $jar]);

// Login - cookie jar captures session cookie

$client->request('POST', 'https://www.scrapingcourse.com/login', [

'form_params' => [

'email' => getenv('SCRAPER_EMAIL'),

'password' => getenv('SCRAPER_PASSWORD')

]

]);

// Session cookie sent automatically

$response = $client->request('GET', 'https://www.scrapingcourse.com/dashboard');

$html = $response->getBody()->getContents();

The cookie jar intercepts the Set-Cookie header from the login response and includes that cookie in every subsequent request to the same domain. No manual cookie handling required.

Form-Based Login with HttpBrowser

For sites with complex forms, Symfony HttpBrowser provides automatic form handling.

<?php

require 'vendor/autoload.php';

use Symfony\Component\BrowserKit\HttpBrowser;

use Symfony\Component\HttpClient\HttpClient;

$browser = new HttpBrowser(HttpClient::create());

$crawler = $browser->request('GET', 'https://www.scrapingcourse.com/login');

// Find and populate the form

$form = $crawler->selectButton('Login')->form([

'email' => getenv('SCRAPER_EMAIL'),

'password' => getenv('SCRAPER_PASSWORD'),

]);

// Submit and follow redirect

$crawler = $browser->submit($form);

// Extract data from dashboard

$products = $crawler->filter('.product-item')->each(function ($node) {

return [

'name' => $node->filter('.product-name')->text(),

'price' => $node->filter('.product-price')->text(),

];

});

HttpBrowser handles cookies, redirects, and form submissions automatically. It simulates a real browser session without the overhead of running actual Chrome or Firefox.

Saving Sessions Between Runs

For scrapers that run periodically (cron jobs, scheduled tasks), save cookies to disk to skip re-authentication.

<?php

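use GuzzleHttp\Client;
use GuzzleHttp\Cookie\FileCookieJar;

// Second argument true: also persist session cookies (those without an expiry date)
$jar = new FileCookieJar('/tmp/scraper_session.json', true);

$client = new Client(['cookies' => $jar]);

// Log in only when the jar loaded no cookies from disk.
// (A file size check is not enough: an empty jar is saved as "[]", which is not zero bytes.)
if (count($jar) === 0) {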

// First run - login and save cookies

$client->request('POST', 'https://www.scrapingcourse.com/login', [

'form_params' => [

'email' => getenv('SCRAPER_EMAIL'),

'password' => getenv('SCRAPER_PASSWORD')

]

]);

}

// Session loaded from file automatically

$response = $client->request('GET', 'https://www.scrapingcourse.com/dashboard');

FileCookieJar stores the cookies as JSON and writes the file when the jar object is destroyed, normally at the end of the script. The session persists between script executions as long as the server-side session hasn’t expired (often 15-30 minutes of inactivity, though this varies by site).

Watch out for session expiration though. If the server invalidates your session, the scraper will access login-protected pages without authentication and get redirected. Add a check for this:

<?php

$response = $client->request('GET', 'https://www.scrapingcourse.com/dashboard', [
    'allow_redirects' => ['track_redirects' => true],
]);

// Read the body once into a string; getContents() would leave the stream at its end
$body = (string) $response->getBody();

// Check if we landed back on the login page (session expired)
$redirectHistory = $response->getHeader('X-Guzzle-Redirect-History');
$finalUrl = !empty($redirectHistory) ? end($redirectHistory) : '';

if (str_contains($finalUrl, '/login') || str_contains($body, '<form action="/login"')) {
    // Drop the stale cookies held by $jar (the FileCookieJar from the previous snippet),
    // then re-authenticate. The jar rewrites its file when it is destroyed.
    $jar->clear();

    $client->request('POST', 'https://www.scrapingcourse.com/login', [
        'form_params' => [
            'email' => getenv('SCRAPER_EMAIL'),
            'password' => getenv('SCRAPER_PASSWORD'),
        ],
    ]);

    $response = $client->request('GET', 'https://www.scrapingcourse.com/dashboard');
    $body = (string) $response->getBody();
}

Handling Redirects During Login

Some sites redirect after successful login (from /login to /dashboard or /home). Guzzle follows redirects automatically by default, but you can control this behavior.

<?php

// Track redirect chain

$response = $client->request('POST', 'https://www.scrapingcourse.com/login', [

'form_params' => [

'email' => getenv('SCRAPER_EMAIL'),

'password' => getenv('SCRAPER_PASSWORD'),

],

'allow_redirects' => [

'max' => 5,

'track_redirects' => true

]

]);

// See where login took you

$redirects = $response->getHeader('X-Guzzle-Redirect-History');

print_r($redirects);

Disable redirects to capture the intermediate response:

$response = $client->request('POST', 'https://www.scrapingcourse.com/login', [
    'form_params' => ['email' => getenv('SCRAPER_EMAIL'), 'password' => getenv('SCRAPER_PASSWORD')],
    'allow_redirects' => false,
]);

$statusCode = $response->getStatusCode(); // e.g. 302

// getHeaderLine() returns '' when the header is missing.
// The value may be absolute or relative, e.g. /dashboard
$location = $response->getHeaderLine('Location');

This helps debug login flows or extract tokens from redirect URLs.
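Because a Location header can be relative, it usually needs to be resolved against the request URL before it can be fetched. The sketch below shows a simplified resolver. The name resolveLocation is hypothetical, example.com is a placeholder domain, and the function deliberately ignores edge cases such as ../ segments, which a full RFC 3986 resolver would handle.

```php
<?php

/**
 * Hypothetical helper: turns a Location header into an absolute URL.
 * Simplified on purpose: it covers absolute URLs, scheme-relative URLs and
 * root-relative paths, and treats anything else as relative to the base directory.
 */
function resolveLocation(string $baseUrl, string $location): string
{
    // Already absolute, e.g. https://example.com/dashboard
    if (preg_match('#^[a-z][a-z0-9+.-]*://#i', $location) === 1) {
        return $location;
    }

    $base   = parse_url($baseUrl);
    $scheme = $base['scheme'] ?? 'https';
    $origin = $scheme . '://' . ($base['host'] ?? '')
        . (isset($base['port']) ? ':' . $base['port'] : '');

    // Scheme-relative, e.g. //cdn.example.com/x
    if (strncmp($location, '//', 2) === 0) {
        return $scheme . ':' . $location;
    }

    // Root-relative, e.g. /dashboard
    if (strncmp($location, '/', 1) === 0) {
        return $origin . $location;
    }

    // Path-relative, e.g. home?tab=1 (resolved against the base URL's directory)
    $path = $base['path'] ?? '/';
    $dir  = substr($path, 0, (int) strrpos($path, '/') + 1);

    return $origin . $dir . $location;
}

echo resolveLocation('https://www.scrapingcourse.com/login', '/dashboard') . "\n";
// prints "https://www.scrapingcourse.com/dashboard"
```

In a project that already depends on Guzzle, the PSR-7 package it ships with includes a URI resolver that is likely the better choice; the plain-PHP version here just keeps the logic visible.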

DOM Parsing and Data Extraction

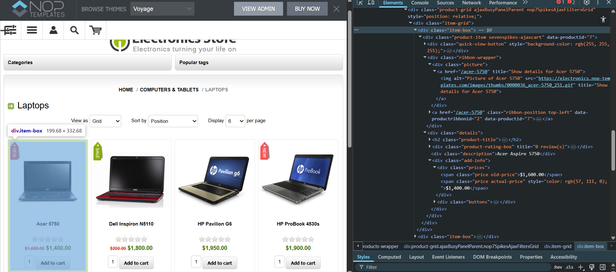

After fetching HTML, extract the data using CSS selectors or XPath. The target is a demo product catalog with nested elements and optional fields.

CSS Selectors vs XPath

Both approaches work for DOM traversal. CSS selectors are easier to read; XPath can express more and, in the benchmark below, ran faster.

CSS Selectors handle most scraping tasks with jQuery-like syntax. These examples use file_get_contents() for brevity, which requires allow_url_fopen=On in php.ini (enabled by default on most setups). For production scrapers, use Guzzle or cURL instead.

<?php

use Symfony\Component\DomCrawler\Crawler;

$html = file_get_contents('https://electronics.nop-templates.com/laptops');

$crawler = new Crawler($html);

// Get all product containers

$products = $crawler->filter('.product-item');

// Navigate nested elements

$titles = $crawler->filter('.product-item .product-title a');

// Attribute extraction

$images = $crawler->filter('.product-item img')->each(function (Crawler $node) {

return $node->attr('src');

});

XPath handles complex relationships CSS can’t express and runs faster in Symfony DomCrawler.

<?php

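// Product containers whose class attribute is exactly "product-item"
// (unlike the CSS selector .product-item, @class="..." compares the whole attribute)
$products = $crawler->filterXPath('//div[@class="product-item"]');

// Get products with old price (on sale)
$saleProducts = $crawler->filterXPath('//div[@class="product-item"][.//span[contains(@class, "old-price")]]');

// Navigate to the parent, then down to the old price next to the actual price
$prices = $crawler->filterXPath('//span[contains(@class, "actual-price")]/..//span[contains(@class, "old-price")]');

// Position-based selection (skip first 3 products).
// The parentheses make position() count across the whole result set
// instead of restarting for every parent element.
$remainingProducts = $crawler->filterXPath('(//div[@class="product-item"])[position() > 3]');

When to use each: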

| Task | Use CSS | Use XPath |
|---|---|---|
| Simple class/ID selection | + | + |
| Attribute contains value | + | + |
| Navigate to parent element | - | + |
| Position-based filtering | - | + |
| Text content matching | - | + |
| Complex boolean logic | - | + |
| Performance critical code | - | + |

Performance comparison shows XPath significantly faster in DomCrawler. Running 1,000 iterations of the same query:

- CSS Selectors: 3.865 seconds

- XPath: 0.564 seconds

- XPath is 585% faster in Symfony DomCrawler

Why? Symfony DomCrawler converts CSS selectors to XPath internally using the CssSelector component. This translation happens on every filter() call. Using XPath directly skips this conversion layer and queries libxml2 immediately.

For production scrapers processing thousands of pages, use XPath. For quick scripts where readability matters more than performance, CSS selectors work fine.
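One practical difference when switching from CSS to XPath is class matching: the CSS selector .product-item matches any element that has that class among others, while @class="product-item" matches only when the attribute is exactly that string. The sketch below shows the usual XPath idiom for token matching, using native DOMXPath so it runs without Composer. The helper name hasClass and the sample markup are made up for illustration, and the helper assumes class names without quotes or spaces.

```php
<?php

/**
 * Hypothetical helper: builds an XPath predicate that matches one class token,
 * the way the CSS selector .name does.
 */
function hasClass(string $class): string
{
    return sprintf(
        "contains(concat(' ', normalize-space(@class), ' '), ' %s ')",
        $class
    );
}

// Made-up sample markup: three divs with different class attributes
$html = '<div class="product-item featured"><span class="price old-price">$5</span></div>'
      . '<div class="product-item"><span class="price actual-price">$4</span></div>'
      . '<div class="product-item-wide"></div>';

libxml_use_internal_errors(true);   // keep parser warnings out of the output
$doc = new DOMDocument();
$doc->loadHTML($html);
libxml_clear_errors();

$xpath = new DOMXPath($doc);

// Exact attribute comparison: only the second div qualifies
$exact = $xpath->query('//div[@class="product-item"]')->length;

// Token comparison: the first and second div qualify, "product-item-wide" does not
$token = $xpath->query('//div[' . hasClass('product-item') . ']')->length;

echo "exact match: {$exact}, token match: {$token}\n";
// prints "exact match: 1, token match: 2"
```

The same predicate string can be passed to filterXPath() in DomCrawler, since it is plain XPath 1.0.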

Extracting Product Data

The laptop catalog has products with varying structures. Some have old prices (sales), some don’t. Some products have ribbons (New, Sale), others don’t. Even when elements are expected to exist, it’s safer to validate them before accessing their values to avoid runtime errors.

<?php

require __DIR__ . '/vendor/autoload.php';

use Symfony\Component\DomCrawler\Crawler;

$html = file_get_contents('https://electronics.nop-templates.com/laptops');

$crawler = new Crawler($html);

$products = $crawler->filterXPath('//div[@class="product-item"]')->each(function (Crawler $node) {

// Title always exists (but still validated for safety)

$titleNode = $node->filterXPath('.//h2[@class="product-title"]/a');

$title = $titleNode->count() ? $titleNode->text() : 'N/A';

// Description might be empty

$description = $node->filterXPath('.//div[@class="description"]');

$descText = $description->count() ? trim($description->text()) : 'N/A';

// Actual price always present (but still validated)

$priceNode = $node->filterXPath('.//span[@class="price actual-price"]');

$actualPrice = $priceNode->count() ? $priceNode->text() : '0';

// Old price only on sale items

$oldPrice = $node->filterXPath('.//span[@class="price old-price"]');

$oldPriceText = $oldPrice->count() ? $oldPrice->text() : null;

// Product URL

$url = $titleNode->count() ? $titleNode->attr('href') : null;

// Image source

$imageNode = $node->filterXPath('.//div[@class="picture"]//img');

$image = $imageNode->count() ? $imageNode->attr('src') : null;

return [

'title' => $title,

'description' => $descText,

'price' => $actualPrice,

'old_price' => $oldPriceText,

'url' => $url ? 'https://electronics.nop-templates.com' . $url : null,

'image' => $image,

];

});

foreach ($products as $product) {

echo "Title: {$product['title']}\n";

echo "Price: {$product['price']}";

if ($product['old_price']) {

echo " (was {$product['old_price']})";

}

echo "\n";

echo "Description: {$product['description']}\n";

echo "URL: {$product['url']}\n";

echo str_repeat('-', 80) . "\n";

}

Output from the test run:

Title: Acer 5750
Price: $1,400.00 (was $1,600.00)
Description: Acer Aspire 5750
URL: https://electronics.nop-templates.com/acer-5750

Title: Dell Inspiron N5110
Price: $1,800.00 (was $200.00)
Description: Dell Inspiron N5110 series
URL: https://electronics.nop-templates.com/dell-inspiron-n5110

Title: HP Pavilion G6
Price: $1,950.00
Description: HP Pavilion G6-1105SQ series
URL: https://electronics.nop-templates.com/hp-pavilion-g6

The count() check prevents crashes when elements don’t exist. Always validate before calling text() or attr() on optional fields.
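The extracted prices are still display strings such as "$1,400.00". If they need to be compared or summed, a normalization step helps. The following is a minimal sketch, assuming US-style formatting (comma for thousands, dot for decimals). The helper name priceToCents is hypothetical, and storing cents as an integer is one common choice rather than the only one.

```php
<?php

/**
 * Hypothetical helper: converts a scraped price such as "$1,400.00" to cents.
 * Assumes US-style formatting (comma for thousands, dot for decimals).
 * Returns null when no usable number can be found.
 */
function priceToCents(?string $raw): ?int
{
    if ($raw === null) {
        return null;
    }

    // Drop everything except digits and the decimal point
    $clean = preg_replace('/[^0-9.]/', '', $raw);

    if ($clean === null || $clean === '' || !is_numeric($clean)) {
        return null;
    }

    // round() guards against float artifacts such as 1998.9999999
    return (int) round((float) $clean * 100);
}

var_dump(priceToCents('$1,400.00')); // int(140000)
var_dump(priceToCents('N/A'));       // NULL
```

Sites that format prices as "1.400,00" would need the opposite separator handling, so the assumption about locale is worth checking against the target before relying on this.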

Handling Broken HTML

In practice, HTML is messy. Unclosed tags, missing quotes, invalid nesting. DOMDocument handles most issues but emits warnings.

<?php

$html = '<div><p>Unclosed paragraph<div>Invalid nesting</p></div>';

$doc = new DOMDocument();

$doc->loadHTML($html);

// PHP Warning: DOMDocument::loadHTML(): Unexpected end tag : p in Entity, line: 1

Suppress warnings with libxml_use_internal_errors().

<?php

$html = '<div><p>Unclosed paragraph<div>Invalid nesting</p></div>';

libxml_use_internal_errors(true);

$doc = new DOMDocument();

$doc->loadHTML($html);

$errors = libxml_get_errors();

libxml_clear_errors();

// Optionally log errors

foreach ($errors as $error) {

error_log("HTML Parse Error: {$error->message}");

}

DOMDocument auto-corrects most HTML issues in recovery mode (enabled by default). It closes unclosed tags, fixes nesting, and adds missing elements.

<?php

$brokenHtml = '

<div class="product">

<h2>Product Name

<p>Description without closing tag

<span class="price">$19.99

</div>

';

libxml_use_internal_errors(true);

$doc = new DOMDocument();

$doc->loadHTML($brokenHtml);

$xpath = new DOMXPath($doc);

$price = $xpath->query('//span[@class="price"]')->item(0)->textContent;

// The recovered <span> also swallows the trailing whitespace, so trim it
echo trim($price); // $19.99

For extremely broken HTML, use the HTML5 parser from Masterminds.

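composer require masterminds/html5

<?php

require 'vendor/autoload.php';

use Masterminds\HTML5;

// disable_html_ns keeps elements out of the XHTML namespace,
// so un-prefixed XPath queries such as //div still match
$html5 = new HTML5(['disable_html_ns' => true]);

$dom = $html5->loadHTML($brokenHtml); // $brokenHtml from the previous snippet

$xpath = new DOMXPath($dom);

$elements = $xpath->query('//div[@class="product"]');

The HTML5 parser follows the WHATWG spec and handles modern HTML better than DOMDocument’s libxml2 parser.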
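For the plain DOMDocument route shown earlier in this section, the suppress, parse, collect, and clear steps can be wrapped in one function so the libxml error mode is always restored afterwards. This is a sketch under that assumption; the name loadHtmlQuietly is hypothetical, and an empty input string is not handled (PHP 8 rejects it with a ValueError).

```php
<?php

/**
 * Hypothetical helper: parses HTML with DOMDocument, collects libxml warnings
 * instead of printing them, and restores the previous error-handling mode.
 *
 * @return array{0: DOMDocument, 1: string[]} the document and the warning messages
 */
function loadHtmlQuietly(string $html): array
{
    // The call returns the previous setting, which is restored below
    $previous = libxml_use_internal_errors(true);

    $doc = new DOMDocument();
    $doc->loadHTML($html);

    $messages = array_map(
        static fn (LibXMLError $e): string => trim($e->message),
        libxml_get_errors()
    );

    libxml_clear_errors();
    libxml_use_internal_errors($previous);

    return [$doc, $messages];
}

[$doc, $warnings] = loadHtmlQuietly('<div><p>Unclosed paragraph<div>Invalid nesting</p></div>');
$xpath = new DOMXPath($doc);

echo $xpath->query('//p')->length . ' paragraph(s), ' . count($warnings) . " warning(s)\n";
```

Returning the messages instead of logging them inside the helper leaves the caller free to ignore them, log them, or treat a high count as a sign that the page layout changed.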

Asynchronous Scraping Architecture

Scraping 100 product pages one by one takes time. Each request waits for the server to respond before moving to the next URL. The scraper sits idle while network packets travel back and forth.

Async scraping sends multiple requests at once. While waiting for one server to respond, the scraper fires off ten more requests. This can turn a 5-minute job into a 30-second task.

Concurrent Requests with Guzzle Promises

Guzzle Promises send requests without blocking. The scraper fires all requests immediately and processes responses as they arrive.

<?php

require 'vendor/autoload.php';

use GuzzleHttp\Client;

use GuzzleHttp\Promise;

$client = new Client([

'verify' => false

// Security Warning: `'verify' => false` disables SSL certificate verification.

// Never use this in production, it exposes your scraper to man-in-the-middle attacks.

// It is used here only to simplify examples against test endpoints.

]);

$promises = [];

$urls = [

'https://electronics.nop-templates.com/laptops',

'https://electronics.nop-templates.com/desktops',

'https://electronics.nop-templates.com/monitors',

'https://electronics.nop-templates.com/tablets',

'https://electronics.nop-templates.com/notebooks',

'https://electronics.nop-templates.com/accessories-2'

];

foreach ($urls as $url) {

$promises[$url] = $client->requestAsync('GET', $url);

}

$start = microtime(true);

$results = Promise\Utils::unwrap($promises);

$elapsed = microtime(true) - $start;

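echo "Time: {$elapsed}s\n";

This sends all requests at once. The server receives a burst of connections, and responses come back in parallel. Total time drops from the sum of all response times to roughly the slowest single response: a run that takes around 40 seconds sequentially can finish in 3-4 seconds, and the six URLs above took 1.79 seconds in our test.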

But this approach has problems. Sending 100 simultaneous requests can overwhelm the target server or trigger rate limiting. The scraper also consumes significant memory holding all promises.

Controlled Concurrency with Pools

Guzzle Pool limits concurrent requests to a reasonable number.

<?php

require 'vendor/autoload.php';

use GuzzleHttp\Client;

use GuzzleHttp\Pool;

use GuzzleHttp\Psr7\Request;

$client = new Client([

'verify' => false // WARNING: DEV ONLY, remove in production

]);

$urls = [

'https://electronics.nop-templates.com/laptops',

'https://electronics.nop-templates.com/desktops',

'https://electronics.nop-templates.com/monitors',

'https://electronics.nop-templates.com/tablets',

'https://electronics.nop-templates.com/notebooks',

'https://electronics.nop-templates.com/accessories-2',

// ... more category URLs

];

$requests = function ($urls) {

foreach ($urls as $url) {

yield new Request('GET', $url);

}

};

$products = [];

$pool = new Pool($client, $requests($urls), [

'concurrency' => 10,

'fulfilled' => function ($response, $index) use (&$products, $urls) {

$html = $response->getBody()->getContents();

// Parse products

$crawler = new \Symfony\Component\DomCrawler\Crawler($html);

$count = $crawler->filterXPath('//div[@class="product-item"]')->count();

$products[$urls[$index]] = $count;

echo "Scraped {$urls[$index]}: {$count} products\n";

},

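'rejected' => function ($reason, $index) use ($urls) {
    // $reason is normally an exception; print its message rather than the full trace
    $message = $reason instanceof \Throwable ? $reason->getMessage() : (string) $reason;

    echo "Failed {$urls[$index]}: {$message}\n";
},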

]);

$start = microtime(true);

$promise = $pool->promise();

$promise->wait();

$elapsed = microtime(true) - $start;

echo "\nTotal time: {$elapsed}s\n";

echo "Total products: " . array_sum($products) . "\n";

With concurrency set to 10, the pool keeps up to 10 requests in flight. When one finishes, the pool immediately starts the next queued request. This keeps the connection busy without overwhelming the server.

Real benchmark with 10 URLs:

- Synchronous: 4.1 seconds

- Asynchronous (concurrency 10): 1.25 seconds

- Speedup: 3.3x faster

The speedup scales with more URLs. For 100 pages, async can be 10-20x faster depending on server response times.
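A rough way to reason about that scaling is to think of the pool as working through the URL list in waves. The sketch below is a back-of-envelope model only: the function name estimateSeconds is hypothetical, the 0.4-second average response time is an assumed figure, and real runs differ because response times vary and the pool refills slots one at a time.

```php
<?php

/**
 * Hypothetical back-of-envelope model: the pool works through the URL list
 * in "waves" of $concurrency requests, each wave lasting about $avgSeconds.
 */
function estimateSeconds(int $pages, int $concurrency, float $avgSeconds): float
{
    if ($pages < 0 || $concurrency < 1) {
        throw new InvalidArgumentException('pages must be >= 0 and concurrency >= 1');
    }

    return (int) ceil($pages / $concurrency) * $avgSeconds;
}

$sequential = estimateSeconds(100, 1, 0.4);   // 100 waves of one request
$pooled     = estimateSeconds(100, 10, 0.4);  // 10 waves of ten requests

printf("sequential: %.1fs, pooled: %.1fs\n", $sequential, $pooled);
// prints "sequential: 40.0s, pooled: 4.0s"
```

Under this model the speedup approaches the concurrency value once the URL count is much larger than the concurrency, which is why a 10-URL benchmark shows a smaller gain than a 100-page run would.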

Memory Leak Prevention

Async scraping processes many pages quickly, which exposes memory management issues. A scraper that runs fine on 10 pages crashes on 1,000 due to memory leaks.

The problem comes from circular references in DOM objects. DomCrawler and DOMDocument create object graphs where parent nodes reference children and children reference parents. PHP’s garbage collector can break these cycles, but it relies on reference counting first.

Circular references keep reference counts above zero, so the collector only finds and frees them when its cycle detection phase runs, which happens automatically only when an internal root buffer fills up. In a high-volume scraping loop, cycles accumulate far faster than that threshold triggers.

<?php

$pool = new Pool($client, $requests($urls), [

'concurrency' => 50,

'fulfilled' => function ($response, $index) {

$html = $response->getBody()->getContents();

$crawler = new Crawler($html);

$products = $crawler->filterXPath('//div[@class="product-item"]');

// Process products...

// Memory leak: $crawler and DOM objects stay in memory

},

]);

After processing 1,000 pages, memory usage keeps climbing until the script hits memory_limit and crashes with “Allowed memory size exhausted.”

Solution 1: Unset variables explicitly

<?php

// Excerpt: the 'fulfilled' option of the Pool configuration shown above
'fulfilled' => function ($response, $index) {
    $html = $response->getBody()->getContents();

    $crawler = new Crawler($html);

    $products = $crawler->filterXPath('//div[@class="product-item"]')->each(function ($node) {
        return [
            'title' => $node->filterXPath('.//h2[@class="product-title"]/a')->text(),
            // Class value matches the extraction example: "price actual-price"
            'price' => $node->filterXPath('.//span[@class="price actual-price"]')->text(),
        ];
    });

    // Save products...

    unset($crawler, $html, $products);
},

This helps but doesn’t fully solve the problem. The DOMDocument inside Crawler still holds references.

Solution 2: Trigger garbage collection

<?php

'fulfilled' => function ($response, $index) use (&$processedCount) {

$html = $response->getBody()->getContents();

$crawler = new Crawler($html);

// Process data...

unset($crawler, $html);

$processedCount++;

// Force GC every 100 pages

if ($processedCount % 100 === 0) {

gc_collect_cycles();

echo "Memory: " . round(memory_get_usage() / 1024 / 1024, 2) . " MB\n";

}

},

gc_collect_cycles() forces PHP to break circular references and free memory. Call it periodically, not on every page (GC has overhead).
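The effect is easy to observe in isolation. The sketch below builds pairs of objects that reference each other, lets them go out of scope, and then asks the collector to run. The CycleNode class is a made-up stand-in for DOM nodes, and 1,000 pairs is an arbitrary number chosen to stay below the point where PHP would trigger a collection on its own.

```php
<?php

// Hypothetical stand-in for a DOM node that points at a peer pointing back
final class CycleNode
{
    public ?CycleNode $peer = null;
}

function makeCycle(): void
{
    $a = new CycleNode();
    $b = new CycleNode();
    $a->peer = $b;
    $b->peer = $a;
    // $a and $b go out of scope here, but each still keeps the other alive
}

gc_enable();
gc_collect_cycles();            // start from a clean slate

$before = memory_get_usage();
for ($i = 0; $i < 1000; $i++) {
    makeCycle();
}
$grown = memory_get_usage() - $before;

// Returns the number of items the cycle collector reclaimed
$freed = gc_collect_cycles();

echo "grew by {$grown} bytes, collector reclaimed {$freed} item(s)\n";
```

Nothing in the loop holds a variable pointing at the objects, yet memory grows until the explicit call, which is the same pattern a long scraping loop produces with parsed documents.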

Solution 3: Process in batches

For very large scraping jobs (10,000+ pages), process URLs in batches and restart the script between batches.

<?php

$batchSize = 1000;

$offset = (int)($argv[1] ?? 0);

$urlBatch = array_slice($allUrls, $offset, $batchSize);

// Process batch...

echo "Processed URLs " . $offset . " to " . ($offset + $batchSize) . "\n";

echo "Next batch: php scraper.php " . ($offset + $batchSize) . "\n";

Each batch starts with clean memory. This prevents any leaks from accumulating across the entire job.
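To drive those batches from a wrapper script instead of typing each command, the offsets can be generated up front. This is a minimal sketch: batchRanges is a hypothetical helper, 2,500 is an example URL count, and scraper.php refers to the batch script from the snippet above.

```php
<?php

/**
 * Hypothetical helper: yields [offset, length] pairs that cover $total URLs
 * in batches of at most $batchSize.
 *
 * @return Generator<int, array{0: int, 1: int}>
 */
function batchRanges(int $total, int $batchSize): Generator
{
    if ($batchSize < 1) {
        throw new InvalidArgumentException('batchSize must be at least 1');
    }

    for ($offset = 0; $offset < $total; $offset += $batchSize) {
        // The last batch may be shorter than $batchSize
        yield [$offset, min($batchSize, $total - $offset)];
    }
}

// One command per batch, each run as a separate PHP process
foreach (batchRanges(2500, 1000) as [$offset, $length]) {
    echo 'php scraper.php ' . $offset . '   # handles ' . $length . " URLs\n";
}
```

Running each printed command as its own process is what gives every batch a fresh memory space; looping over the batches inside a single process would not.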

Monitoring memory during scraping:

<?php

$pool = new Pool($client, $requests($urls), [

'concurrency' => 50,

'fulfilled' => function ($response, $index) use (&$startMemory) {

if (!isset($startMemory)) {

$startMemory = memory_get_usage();

}

$html = $response->getBody()->getContents();

$crawler = new Crawler($html);

// Process...

unset($crawler, $html);

$currentMemory = memory_get_usage();

$leaked = $currentMemory - $startMemory;

if ($leaked > 50 * 1024 * 1024) { // 50MB leaked

echo "Warning: Memory leak detected ({$leaked} bytes)\n";

gc_collect_cycles();

$startMemory = memory_get_usage();

}

},

]);

This tracks memory growth and triggers GC when leaks exceed a threshold.

Dynamic Content and JavaScript Rendering

Single-page applications load content after the initial page renders. A standard HTTP client receives an empty shell. Headless browsers execute JavaScript and wait for the full DOM to build.

Headless Browsers with Symfony Panther

Panther controls Chrome or Firefox through WebDriver. It loads the page, executes JavaScript, and returns the rendered DOM.

| Use Panther when: | Skip Panther when: |
|---|---|
| Content loads via JavaScript after page render | Site works with Guzzle (check with a quick test) |
| Site uses React, Vue, Angular, or similar frameworks | API endpoints exist for the data (inspect Network tab) |
| Data appears only after user interactions (clicks, scrolls) | Static HTML contains all needed information |
| Anti-bot protection requires browser fingerprints | Scraping thousands of pages (headless browsers are slow) |

Run a test request with Guzzle first. If the HTML contains data, skip the browser. Headless scraping is often 10-20x slower than a plain HTTP client.
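That quick test can be automated: fetch the page with any HTTP client, then check whether the raw HTML already contains the elements you plan to extract. The sketch below shows the check itself on two made-up HTML strings. The helper name staticHtmlHasData and the //article/h2 expression are assumptions for illustration.

```php
<?php

/**
 * Hypothetical helper: reports whether raw HTML (no JavaScript executed)
 * already contains at least $min nodes matching an XPath expression.
 */
function staticHtmlHasData(string $html, string $xpathExpr, int $min = 1): bool
{
    if (trim($html) === '') {
        return false; // nothing to parse
    }

    $previous = libxml_use_internal_errors(true);
    $doc = new DOMDocument();
    $doc->loadHTML($html);
    libxml_clear_errors();
    libxml_use_internal_errors($previous);

    $nodes = (new DOMXPath($doc))->query($xpathExpr);

    return $nodes !== false && $nodes->length >= $min;
}

// An SPA shell: the markup the server sends before any script runs
$shell = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>';

// A server-rendered page with the data already in place
$rendered = '<html><body><article><h2>Post</h2></article></body></html>';

var_dump(staticHtmlHasData($shell, '//article/h2'));     // bool(false)
var_dump(staticHtmlHasData($rendered, '//article/h2'));  // bool(true)
```

A false result suggests the content is injected by JavaScript, at which point checking the Network tab for a JSON endpoint is usually worth doing before reaching for a headless browser.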

composer require symfony/panther

Basic setup launches Chrome in headless mode.

<?php

use Symfony\Component\Panther\Client;

$client = Client::createChromeClient();

$crawler = $client->request('GET', 'https://example-spa.com');

// Chrome loads the page, executes JS, renders content

$titles = $crawler->filter('article h2')->each(function ($node) {

return $node->text();

});

$client->quit();

The browser runs without a visible window. Memory usage is higher than Guzzle (Chrome typically needs 200-400MB per instance) but you get full JavaScript execution.

Waiting for Dynamic Content

JavaScript rendering takes time. Articles load via AJAX after page load. Use wait strategies to ensure content appears before extraction.

Explicit waits pause until a specific element exists.

<?php

$client->request('GET', 'https://medium.com');

// waitFor pauses until .article-list is in the DOM

$client->waitFor('.article-list', 10);

// Re-fetch the crawler AFTER the wait, not before

$crawler = $client->getCrawler();

$articles = $crawler->filter('.article-list article');

Visibility waits ensure elements are not just in the DOM but actually visible.

<?php

// Wait for element to be visible (not display:none or hidden)

$client->waitForVisibility('.article-title', 10);

// Or wait for loading spinner to disappear

$client->waitForInvisibility('.loading-spinner', 10);

Custom conditions handle complex scenarios.

<?php

// Wait until at least 5 articles have loaded

$client->wait(10)->until(function() use ($client) {

return $client->getCrawler()->filter('article')->count() >= 5;

});

// Refresh the crawler after the wait

$crawler = $client->getCrawler();

Network idle waits pause until all AJAX requests complete. The example below uses Spatie Browsershot rather than Panther.

<?php

use Spatie\Browsershot\Browsershot;

// Wait until network is idle for 500ms

$html = Browsershot::url('https://medium.com')

->waitUntilNetworkIdle()

->bodyHtml();

Timeout errors happen when elements never appear. Always set realistic timeout values and handle failures.

<?php

try {

$client->waitFor('.articles', 10);

$crawler = $client->getCrawler();

$articles = $crawler->filter('.articles article');

} catch (\Exception $e) {

echo "Articles failed to load: " . $e->getMessage();

// Fallback or retry logic

}

HasData API Integration

Headless browsers solve JavaScript rendering but add complexity. The HasData API handles rendering, proxies, and anti-bot bypass in one request.

| When to use APIs: | When to use Panther: |

|---|---|

| Scraping sites with strong anti-bot protection (Cloudflare, PerimeterX) | Full control over browser behavior needed |

| No server resources for running Chrome instances | Scraping internal tools or sites without anti-bot |

| Need instant scaling without infrastructure setup | Budget constraints (API costs per request) |

| Need proxies, CAPTCHA solving and JS rendering | Complex interactions (multi-step forms, authenticated sessions) |

Minimal example with HasData web scraping API.

<?php

require 'vendor/autoload.php';

use GuzzleHttp\Client;

$client = new Client([

'verify' => false // WARNING: DEV ONLY, remove in production

]);

$apiKey = 'HASDATA-API-KEY';

$url = 'https://dev.to/';

$response = $client->request('POST', 'https://api.hasdata.com/scrape/web', [

'headers' => [

'Content-Type' => 'application/json',

'x-api-key' => $apiKey

],

'json' => [

'url' => $url,

'jsRendering' => true,

'proxyType' => 'datacenter',

'proxyCountry' => 'US'

]

]);

$result = json_decode((string) $response->getBody(), true); // Cast the body stream to a string explicitly

$html = $result['content'];

echo $html;

The API executes JavaScript, rotates proxies, and returns rendered HTML. No ChromeDriver installation, no memory management, no browser crashes.
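The minimal example reads `$result['content']` directly, which fails noisily if the body is not JSON or the key is missing. A slightly more defensive sketch follows. It assumes only what the example shows (a JSON body with a `content` key); `extractRenderedHtml` is a hypothetical helper, and the sample bodies are made up for illustration, not real API output.

```php
<?php

/**
 * Decodes a JSON response body and returns the rendered HTML.
 * Throws when the body is not valid JSON or has no usable "content" field.
 */
function extractRenderedHtml(string $body): string
{
    try {
        $result = json_decode($body, true, 512, JSON_THROW_ON_ERROR);
    } catch (JsonException $e) {
        throw new RuntimeException('Response is not valid JSON: ' . $e->getMessage(), 0, $e);
    }

    if (!is_array($result) || !isset($result['content']) || !is_string($result['content'])) {
        throw new RuntimeException('Response has no "content" field');
    }

    return $result['content'];
}

// Sample bodies for illustration only
echo extractRenderedHtml('{"content":"<h1>Hello</h1>"}'), "\n"; // <h1>Hello</h1>

try {
    extractRenderedHtml('{"error":"quota exceeded"}');
} catch (RuntimeException $e) {
    echo $e->getMessage(), "\n"; // Response has no "content" field
}
```

With Guzzle, pass `(string) $response->getBody()` as the argument.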

AI-powered extraction removes the need for CSS selectors. Define what you want in plain English.

<?php

require 'vendor/autoload.php';

use GuzzleHttp\Client;

$client = new Client([

'verify' => false, // WARNING: DEV ONLY, remove in production

'timeout' => 80,

'connect_timeout' => 15,

]);

$apiKey = 'HASDATA-API-KEY';

$response = $client->request('POST', 'https://api.hasdata.com/scrape/web', [

'headers' => [

'Content-Type' => 'application/json',

'x-api-key' => $apiKey

],

'json' => [

'url' => 'https://dev.to/',

'aiExtractRules' => [

'articles' => [

'type' => 'list',

'output' => [

'title' => [

'description' => 'article title',

'type' => 'string'

],

'author' => [

'type' => 'string'

],

'publishDate' => [

'type' => 'string'

]

]

]

]

]

]);

$result = json_decode((string) $response->getBody(), true); // Cast the body stream to a string explicitly

$articles = $result['aiResponse']['articles'];

foreach ($articles as $article) {

echo "Title: {$article['title']}\n";

echo "Author: {$article['author']}\n";

echo "Publish Date: {$article['publishDate']}\n\n";

}

No XPath, no CSS selectors, no dealing with class name changes. The AI identifies the data and extracts it automatically.

Output formats include HTML, Markdown, JSON, and plain text.

Common Setup Issues

ChromeDriver not found. Panther needs a chromedriver binary that matches your installed Chrome version. If it cannot locate one, download it manually and specify the path.

$client = Client::createChromeClient('/path/to/chromedriver');

Chrome profiles conflict (Windows). Multiple Chrome profiles cause "Could not start chrome" errors. Use the --no-first-run and --no-default-browser-check flags.

$client = Client::createChromeClient(null, [

'--headless',

'--disable-gpu',

'--no-sandbox',

'--no-first-run',

'--no-default-browser-check'

]);

Missing ChromeDriver. If no ChromeDriver is installed at all, let dbrekelmans/bdi install it for you.

composer require --dev dbrekelmans/bdi

php vendor/bin/bdi detect drivers

This detects your Chrome version and downloads the matching driver.

Memory exhaustion. Each browser instance consumes 200-400MB. Limit concurrent browsers and close them properly.

$client = Client::createChromeClient();

// Scrape pages

$crawler = $client->request('GET', $url);

// Extract data...

// Always quit to free memory

$client->quit();

For batch jobs, restart the browser every 50-100 pages to prevent memory leaks.

$urls = [/* ... */]; // 500 URLs

$batchSize = 50;

for ($i = 0; $i < count($urls); $i += $batchSize) {

$client = Client::createChromeClient();

$batch = array_slice($urls, $i, $batchSize);

foreach ($batch as $url) {

$crawler = $client->request('GET', $url);

// Extract data...

}

$client->quit(); // Free memory

gc_collect_cycles();

}

Timeouts on slow sites. Increase the default timeouts for heavy pages.

// Timeout settings go in the third argument (options), right after the Chrome arguments

$client = Client::createChromeClient(null, [

'--headless',

], [

'connection_timeout_in_ms' => 30000, // 30 seconds

'request_timeout_in_ms' => 60000 // 60 seconds

]);

SSL certificate errors. Sites with self-signed or expired certificates fail with verification errors.

// Quick fix for testing (NOT for production). Client here is GuzzleHttp\Client, not Panther.

$client = new Client([

'verify' => false

]);

For production, download the CA bundle and verify properly.

$client = new Client([

'verify' => '/path/to/cacert.pem'

]);

Never disable SSL verification in production scrapers. Download the latest CA bundle from https://curl.se/docs/caextract.html
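One way to make that rule hard to break is to compute the `verify` value in a single place that can never return `false`. This is a sketch under assumptions: `resolveVerifyOption` is a hypothetical helper and `SCRAPER_CA_BUNDLE` is a made-up environment variable name. It relies only on Guzzle's `verify` option accepting either `true` or a path to a CA bundle.

```php
<?php

/**
 * Chooses a value for Guzzle's 'verify' option.
 * Returns the bundle path when it is a readable file, otherwise true
 * (verify against the system CA store). Never returns false.
 */
function resolveVerifyOption(?string $caBundlePath): string|bool
{
    if ($caBundlePath !== null && is_file($caBundlePath) && is_readable($caBundlePath)) {
        return $caBundlePath;
    }

    return true;
}

// SCRAPER_CA_BUNDLE is a hypothetical environment variable name
$bundle = getenv('SCRAPER_CA_BUNDLE') ?: null;

// Pass this array to new GuzzleHttp\Client($options)
$options = ['verify' => resolveVerifyOption($bundle)];

var_dump($options['verify'] !== false); // bool(true)
```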

Bypassing Anti-Bot Protections

Sites block scrapers through IP tracking, browser fingerprinting, and behavior analysis. Bypass these defenses with proxy rotation, realistic headers, and rate limiting.

Proxy Rotation

Rotating proxies prevents IP-based blocks. Build a simple rotator that cycles through a pool and handles failures.

<?php

class ProxyRotator

{

private array $proxies;

private int $currentIndex = 0;

private array $failedProxies = [];

public function __construct(array $proxies)

{

$this->proxies = $proxies;

}

public function getNext(): ?string

{

if (empty($this->proxies)) {

return null;

}

// Skip failed proxies

$attempts = 0;

while ($attempts < count($this->proxies)) {

$proxy = $this->proxies[$this->currentIndex];

$this->currentIndex = ($this->currentIndex + 1) % count($this->proxies);

if (!in_array($proxy, $this->failedProxies)) {

return $proxy;

}

$attempts++;

}

return null; // All proxies failed

}

public function markFailed(string $proxy): void

{

if (!in_array($proxy, $this->failedProxies)) {

$this->failedProxies[] = $proxy;

}

}

public function resetFailed(): void

{

$this->failedProxies = [];

}

}

Use it with Guzzle requests.

<?php

use GuzzleHttp\Client;

$proxies = [

'http://proxy1:port1',

'http://proxy2:port2',

'http://proxy3:port3',

];

$rotator = new ProxyRotator($proxies);

$client = new Client();

foreach ($urls as $url) {

$proxy = $rotator->getNext();

if (!$proxy) {

echo "All proxies failed\n";

break;

}

try {

$response = $client->request('GET', $url, [

'proxy' => $proxy,

'timeout' => 10

]);

// Process response...

} catch (\Exception $e) {

$rotator->markFailed($proxy);

echo "Proxy {$proxy} failed: {$e->getMessage()}\n";

}

}

Proxies fail due to bans, timeouts, or invalid credentials. Track failures and skip bad proxies automatically.
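The rotator above excludes a failed proxy until `resetFailed()` is called. Bans and timeouts are often temporary, so one possible variation is to bench a failed proxy for a cooldown period and let it rejoin the rotation afterwards. The class below is a sketch of that idea, not part of any library: `CooldownProxyPool`, the 300-second default, and the proxy addresses are assumptions. The current time is injectable so the behaviour can be checked without sleeping.

```php
<?php

/**
 * Round-robin proxy pool where a failed proxy is benched for a cooldown
 * period instead of being excluded until a manual reset.
 */
class CooldownProxyPool
{
    /** @var array<string, float> proxy => timestamp when it may be used again */
    private array $benchedUntil = [];

    private int $index = 0;

    public function __construct(
        private array $proxies,
        private float $cooldownSeconds = 300.0
    ) {
        $this->proxies = array_values($proxies);
    }

    /** $now is injectable so the rotation can be tested deterministically. */
    public function getNext(?float $now = null): ?string
    {
        $now ??= microtime(true);
        $total = count($this->proxies);

        for ($i = 0; $i < $total; $i++) {
            $proxy = $this->proxies[$this->index];
            $this->index = ($this->index + 1) % $total;

            if (($this->benchedUntil[$proxy] ?? 0.0) <= $now) {
                return $proxy;
            }
        }

        return null; // Every proxy is currently cooling down
    }

    public function markFailed(string $proxy, ?float $now = null): void
    {
        $this->benchedUntil[$proxy] = ($now ?? microtime(true)) + $this->cooldownSeconds;
    }
}

$pool = new CooldownProxyPool(['http://proxy1:8080', 'http://proxy2:8080'], 60.0);

$pool->markFailed('http://proxy1:8080', 1000.0);

echo $pool->getNext(1001.0), "\n"; // http://proxy2:8080 (proxy1 is benched)
echo $pool->getNext(1002.0), "\n"; // http://proxy2:8080 again
echo $pool->getNext(1061.0), "\n"; // http://proxy1:8080 (cooldown expired)
```

In a real loop you would call `getNext()` and `markFailed()` without the time argument, the same way the Guzzle example uses `ProxyRotator`.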

Header Spoofing

Default Guzzle headers look like a bot. Real browsers send specific header combinations.

<?php

// Bot-like request (gets blocked)

$response = $client->request('GET', $url);

// Browser-like request

$response = $client->request('GET', $url, [

'headers' => [

'User-Agent' => 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/146.0.0.0 Safari/537.36',

'Accept' => 'text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.8',

'Accept-Language' => 'en-US,en;q=0.9',

'Accept-Encoding' => 'gzip, deflate, br',

'Referer' => 'https://google.com/',

'DNT' => '1',

'Connection' => 'keep-alive',

'Upgrade-Insecure-Requests' => '1'

]

]);

Rotate User-Agent strings to avoid pattern detection.

<?php

$userAgents = [

'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/146.0.0.0 Safari/537.36',

'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/146.0.0.0 Safari/537.36',

'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/146.0.0.0 Safari/537.36',

'Mozilla/5.0 (Linux; Android 10; K) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/146.0.7680.178 Mobile Safari/537.36'

];

$headers = [

'User-Agent' => $userAgents[array_rand($userAgents)],

'Accept' => 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

'Accept-Language' => 'en-US,en;q=0.9',

];

$response = $client->request('GET', $url, ['headers' => $headers]);

Set Referer to simulate navigation from search engines or social media.

$referers = [

'https://www.google.com/',

'https://www.bing.com/',

'https://twitter.com/',

'https://www.facebook.com/'

];

$headers['Referer'] = $referers[array_rand($referers)];

Header order matters for advanced fingerprinting. Guzzle's default header order differs from what real browsers send, which can look suspicious. Most sites ignore this, but some check.
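If header order matters for a particular target, native cURL lets you pass headers as one explicit, ordered list through `CURLOPT_HTTPHEADER`. The sketch below shows how such a list could be built. `buildHeaderLines` and `createOrderedCurlHandle` are hypothetical helpers, and the order shown is an illustrative assumption rather than a captured browser profile. cURL is expected to send custom headers in list order, but headers it generates itself (such as `Host`) are placed by cURL, so check the result against a request inspector before relying on it.

```php
<?php

/**
 * Converts an ordered name => value map into "Name: value" lines,
 * preserving insertion order (PHP arrays are ordered).
 */
function buildHeaderLines(array $orderedHeaders): array
{
    $lines = [];

    foreach ($orderedHeaders as $name => $value) {
        $lines[] = $name . ': ' . $value;
    }

    return $lines;
}

/** Creates a cURL handle that sends the headers as one ordered list. */
function createOrderedCurlHandle(string $url, array $orderedHeaders): CurlHandle
{
    $ch = curl_init($url);

    if ($ch === false) {
        throw new RuntimeException('Could not initialise cURL');
    }

    curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);
    curl_setopt($ch, CURLOPT_HTTPHEADER, buildHeaderLines($orderedHeaders));

    return $ch;
}

// Illustrative order only (assumption)
$headers = [
    'User-Agent'      => 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/146.0.0.0 Safari/537.36',
    'Accept'          => 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    'Accept-Language' => 'en-US,en;q=0.9',
];

// Prints the three lines in the same order as the array above
print_r(buildHeaderLines($headers));
```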

Rate Limiting

Scraping too fast triggers rate limits. Add delays between requests and handle 429 responses gracefully.

<?php

$delayMs = 1000; // 1 second between requests

foreach ($urls as $url) {

$response = $client->request('GET', $url);

// Process response...

usleep($delayMs * 1000); // Convert ms to microseconds

}

Implement exponential backoff for 429 (Too Many Requests) responses.

<?php

use GuzzleHttp\Client;

use Psr\Http\Message\ResponseInterface;

function fetchWithBackoff(Client $client, string $url, int $maxRetries = 5): ?ResponseInterface

{

$attempt = 0;

$baseDelay = 1000; // Start with 1 second

while ($attempt < $maxRetries) {

try {

$response = $client->request('GET', $url, ['http_errors' => false]);

if ($response->getStatusCode() === 429) {

$delay = $baseDelay * pow(2, $attempt); // Exponential: 1s, 2s, 4s, 8s, 16s

echo "Rate limited. Waiting {$delay}ms...\n";

usleep($delay * 1000);

$attempt++;

continue;

}

return $response;

} catch (\GuzzleHttp\Exception\ConnectException $e) {

// Network error (timeout, DNS failure) — retry

$delay = $baseDelay * pow(2, $attempt); // Compute before incrementing: same 1s, 2s, 4s schedule as the 429 branch

$attempt++;

echo "Connection failed, retrying in {$delay}ms: {$e->getMessage()}\n";

usleep($delay * 1000);

} catch (\Exception $e) {

echo "Request failed: {$e->getMessage()}\n";

return null;

}

}

echo "Max retries exceeded for {$url}\n";

return null;

}

Per-domain rate limiting prevents hitting the same site too frequently.

<?php

class RateLimiter

{

private array $lastRequest = [];

private int $delayMs;

public function __construct(int $delayMs = 1000)

{

$this->delayMs = $delayMs;

}

public function wait(string $domain): void

{

if (!isset($this->lastRequest[$domain])) {

$this->lastRequest[$domain] = microtime(true);

return;

}

$elapsed = (microtime(true) - $this->lastRequest[$domain]) * 1000;

$remaining = $this->delayMs - $elapsed;

if ($remaining > 0) {

usleep((int) round($remaining * 1000)); // usleep() expects an int; $remaining is a float

}

$this->lastRequest[$domain] = microtime(true);

}

}

$limiter = new RateLimiter(2000); // 2 seconds per domain

foreach ($urls as $url) {

$domain = parse_url($url, PHP_URL_HOST);

$limiter->wait($domain);

$response = $client->request('GET', $url);

}

Before scraping any site, check its robots.txt file and respect Crawl-delay directives. Spatie Crawler handles this automatically, but with Guzzle or cURL you need to parse it yourself. Fetch https://example.com/robots.txt, check if your target paths are allowed for your user agent, and add delays between requests. Ignoring robots.txt can get your IP blocked and, depending on the jurisdiction, create legal issues.
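For the Guzzle or cURL case, the parsing step can be sketched in plain PHP. The class below is a deliberately minimal reader, not a complete implementation of the Robots Exclusion Protocol. `SimpleRobotsTxt` is a hypothetical name. It uses plain prefix matching, does not handle the `*` and `$` wildcards, and picks the first group whose name appears in your user agent before falling back to `*`. The sample file and the `MyScraper/1.0` user agent are made up.

```php
<?php

/**
 * Minimal robots.txt reader: supports User-agent, Allow, Disallow and
 * Crawl-delay with plain prefix matching. Wildcards (*, $) are NOT handled.
 */
class SimpleRobotsTxt
{
    /** @var array<string, array{allow: string[], disallow: string[], delay: ?float}> */
    private array $groups = [];

    public function __construct(string $robotsTxt)
    {
        $agents = [];
        $inRules = false;

        foreach (preg_split('/\R/', $robotsTxt) as $line) {
            $line = trim(preg_replace('/#.*/', '', $line)); // Strip comments
            if ($line === '' || !str_contains($line, ':')) {
                continue;
            }

            [$field, $value] = array_map('trim', explode(':', $line, 2));
            $field = strtolower($field);

            if ($field === 'user-agent') {
                if ($inRules) { // A User-agent line after rules starts a new group
                    $agents = [];
                    $inRules = false;
                }
                $agent = strtolower($value);
                $agents[] = $agent;
                $this->groups[$agent] ??= ['allow' => [], 'disallow' => [], 'delay' => null];
                continue;
            }

            if (!in_array($field, ['allow', 'disallow', 'crawl-delay'], true)) {
                continue; // Ignore Sitemap and unknown fields
            }
            $inRules = true;

            foreach ($agents as $agent) {
                if ($field === 'crawl-delay') {
                    if (is_numeric($value)) {
                        $this->groups[$agent]['delay'] = (float) $value;
                    }
                } elseif ($value !== '') { // An empty Disallow means "allow everything"
                    $this->groups[$agent][$field][] = $value;
                }
            }
        }
    }

    public function isAllowed(string $path, string $userAgent): bool
    {
        $group = $this->groupFor($userAgent);
        $best = ['length' => -1, 'allowed' => true];

        // Longest matching prefix wins; Allow wins a tie because it is checked last
        foreach (['disallow' => false, 'allow' => true] as $type => $allowed) {
            foreach ($group[$type] as $prefix) {
                if (str_starts_with($path, $prefix) && strlen($prefix) >= $best['length']) {
                    $best = ['length' => strlen($prefix), 'allowed' => $allowed];
                }
            }
        }

        return $best['allowed'];
    }

    public function crawlDelay(string $userAgent): ?float
    {
        return $this->groupFor($userAgent)['delay'];
    }

    private function groupFor(string $userAgent): array
    {
        $userAgent = strtolower($userAgent);

        foreach ($this->groups as $agent => $group) {
            if ($agent !== '*' && str_contains($userAgent, (string) $agent)) {
                return $group;
            }
        }

        return $this->groups['*'] ?? ['allow' => [], 'disallow' => [], 'delay' => null];
    }
}

$robots = new SimpleRobotsTxt(<<<TXT
User-agent: *
Disallow: /admin/
Allow: /admin/public/
Crawl-delay: 2
TXT);

var_dump($robots->isAllowed('/blog/post-1', 'MyScraper/1.0'));      // bool(true)
var_dump($robots->isAllowed('/admin/users', 'MyScraper/1.0'));      // bool(false)
var_dump($robots->isAllowed('/admin/public/faq', 'MyScraper/1.0')); // bool(true)
var_dump($robots->crawlDelay('MyScraper/1.0'));                     // float(2)
```

The value from `crawlDelay()` can feed the `RateLimiter` delay directly (multiplied by 1000 to get milliseconds). For sites whose rules use wildcards, a maintained parser library is a safer choice than this sketch.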

Conclusion

The patterns in this guide work for price monitoring, content aggregation, lead generation, and competitive analysis. Start with the simplest approach that works (Guzzle + DomCrawler) and add complexity only when needed.

Complete working scripts for all patterns covered in this guide are in the GitHub repository.

Questions or want to discuss scraping strategies? Join our Discord community.