Web scraping is the transfer of data posted on the Internet in the form of HTML pages (on a website) to some kind of storage (be it a text file or a database).

One advantage of C# for web scraping is that you can embed a browser directly in a Windows Forms application using the WebBrowser control. The scraped data can be saved to an output file or displayed on the screen.
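As a rough illustration of saving scraped data to an output file, the sketch below writes rows to CSV using only the .NET base class library. The `CsvExport` class and its method names are hypothetical helpers invented for this example, not part of any scraping library.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Linq;
using System.Text;

public static class CsvExport
{
    // Quote a field when it contains a comma, a quote, or a line break.
    public static string Escape(string field)
    {
        if (field.IndexOfAny(new[] { ',', '"', '\n', '\r' }) < 0)
            return field;
        return "\"" + field.Replace("\"", "\"\"") + "\"";
    }

    // Turn rows of fields into CSV text, one line per row.
    public static string ToCsv(IEnumerable<string[]> rows)
    {
        var sb = new StringBuilder();
        foreach (var row in rows)
            sb.Append(string.Join(",", row.Select(Escape))).Append("\r\n");
        return sb.ToString();
    }

    // Write the CSV text to a file; the path is supplied by the caller.
    public static void Save(string path, IEnumerable<string[]> rows)
    {
        File.WriteAllText(path, ToCsv(rows), Encoding.UTF8);
    }
}
```

A call such as `CsvExport.Save("titles.csv", rows)` would then store whatever the scraper collected, where `rows` is a sequence of string arrays.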

Web Scraping Fundamentals in ASP.NET Using C#

For the C# development environment, you can use Visual Studio or Visual Studio Code. The choice depends on the development goals and PC capabilities.

Visual Studio is an environment for full-fledged development of desktop, mobile and server applications with pre-built templates and the ability to graphically edit the program being developed.

Visual Studio Code, by contrast, is a lightweight editor that you extend with the packages you need. It takes up much less disk space and CPU time.

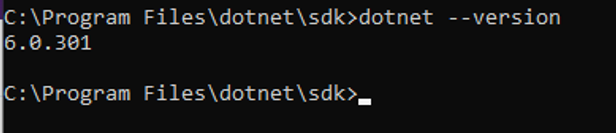

If you choose Visual Studio Code, you also have to install .NET Framework and .NET Core 3.1. To make sure that all components are installed correctly, enter the following command at the command line:

    dotnet --version

If everything works correctly, the command should return the version of .NET installed.

Tools for Web Scraping

To scrape effectively in C#, it is worth using additional libraries, and it helps to know what each one offers before choosing. The most widely used are PhantomJS with Selenium, HtmlAgilityPack with ScrapySharp, and Puppeteer Sharp.

Get data with Selenium & PhantomJS Web Scraping C#

To use PhantomJS, a headless browser, you must first install it. The easiest way is through the NuGet Package Manager. To add the package in Visual Studio, right-click the “References” node in the project, choose “Manage NuGet Packages”, and type “PhantomJS” in the search bar.

What is the Selenium Library

In the context of C# scraping, PhantomJS usually means PhantomJS driven by Selenium. To use the two together, you also need to install the following packages:

- Selenium.WebDriver

- Selenium.Support

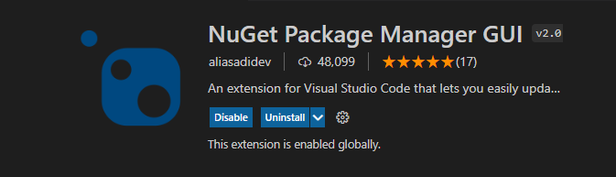

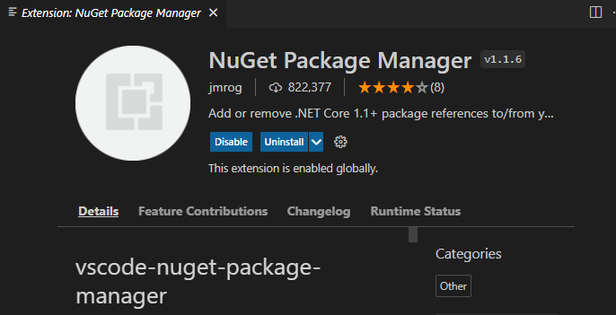

Both packages can be installed through NuGet. In Visual Studio Code, you can either install a NuGet package manager extension with a GUI or add the packages from the command line.

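So, to get all titles on the page, use:

    using System;
    using OpenQA.Selenium;
    using OpenQA.Selenium.PhantomJS;

    // The driver is disposed (and the browser closed) at the end of the using block
    using (var driver = new PhantomJSDriver())
    {
        driver.Navigate().GoToUrl("http://example.com/");

        // Collect every element whose class attribute contains "title"
        var titles = driver.FindElements(By.ClassName("title"));
        foreach (var title in titles)
        {
            Console.WriteLine(title.Text);
        }
    }

This simple code finds all elements with the class title and prints the text each one contains.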

Selenium provides many functions for finding the required element. An element can be located by XPath, CSS selector, or HTML tag. For example, to find an element such as an input field by its XPath and type a value into it, use:

    // Placeholder XPath: adjust it to match the input field on the target page
    driver.FindElement(By.XPath(@".//input[@type='text']")).SendKeys("c#");

To click an element, for example the confirm button, you can use the following code:

    driver.FindElement(By.XPath(@".//input[@id='searchsubmit']")).Click();

What is the Selenium Library for

Selenium is cross-platform, works with most programming languages, and has complete, well-written documentation and an active community.

Using Selenium with PhantomJS is a good solution for a wide range of scraping tasks, including scraping dynamic pages. However, it is rather resource-intensive.
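PhantomJS development was suspended in 2018, and recent Selenium releases no longer include `PhantomJSDriver`, so the same idea is often implemented today with headless Chrome. The following is a minimal sketch under that assumption: it swaps in `ChromeDriver` (not used elsewhere in this article), assumes Chrome is installed locally, and reuses the `title` class from the example above as a placeholder selector. If the page has no such elements, `wait.Until` throws a `WebDriverTimeoutException` after ten seconds.

```csharp
using System;
using OpenQA.Selenium;
using OpenQA.Selenium.Chrome;
using OpenQA.Selenium.Support.UI;

class Program
{
    static void Main()
    {
        var options = new ChromeOptions();
        // Run Chrome without a visible window (older Chrome versions use "--headless")
        options.AddArgument("--headless=new");

        using (var driver = new ChromeDriver(options))
        {
            driver.Navigate().GoToUrl("http://example.com/");

            // Dynamic pages fill in content after load, so wait up to 10 seconds
            // until at least one element matching the CSS selector exists.
            var wait = new WebDriverWait(driver, TimeSpan.FromSeconds(10));
            wait.Until(d => d.FindElements(By.CssSelector(".title")).Count > 0);

            foreach (var title in driver.FindElements(By.CssSelector(".title")))
                Console.WriteLine(title.Text);
        }
    }
}
```

The explicit wait is what makes this suitable for dynamic pages: without it, `FindElements` may run before the page's scripts have inserted the content.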

HtmlAgilityPack for Quick Start

If the site has no protection against bots and returns all the necessary content immediately, you can use a simpler solution: the Html Agility Pack library. It is one of the most popular libraries for scraping in C#, and it is also installed as a NuGet package.

What is the HtmlAgilityPack

This library builds a DOM tree from HTML. The catch is that you have to load the page source yourself. To load the page, use the following code:

    string html;
    using (WebClient client = new WebClient())
    {
        // Download the raw page source as a string
        html = client.DownloadString("http://example.com");
    }

After that, load the HTML into a document and search it for elements, for example with XPath:

    // Build the DOM tree from the downloaded HTML
    var document = new HtmlDocument();
    document.LoadHtml(html);

    // SelectNodes returns null when nothing matches
    HtmlNodeCollection links = document.DocumentNode.SelectNodes(".//h2/a");
    if (links != null)
    {
        foreach (HtmlNode link in links)
            Console.WriteLine("{0} - {1}", link.InnerText, link.GetAttributeValue("href", ""));
    }

What is the HtmlAgilityPack for

HtmlAgilityPack is an easier option to start with and is well suited for beginners. It also has its own website with usage examples. It is simpler than Selenium and lacks some of its features, but it is a good fit for projects that are not too complex.

Html Agility Pack can also be used inside a Windows Forms application, for example alongside the WebBrowser control, to create a complete desktop application.
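To make the parsing step concrete, here is a small self-contained sketch that takes an HTML string and returns the text and `href` of every link inside an `h2` heading. The `LinkExtractor` class and `ExtractLinks` method are hypothetical names chosen for this example. It assumes the HtmlAgilityPack NuGet package is referenced.

```csharp
using System.Collections.Generic;
using HtmlAgilityPack;

public static class LinkExtractor
{
    // Parse an HTML string and return (text, href) pairs for every <h2><a> link.
    public static List<(string Text, string Href)> ExtractLinks(string html)
    {
        var document = new HtmlDocument();
        document.LoadHtml(html);

        var result = new List<(string Text, string Href)>();

        // SelectNodes returns null rather than an empty collection when nothing matches.
        var links = document.DocumentNode.SelectNodes("//h2/a");
        if (links == null)
            return result;

        foreach (HtmlNode link in links)
        {
            // Decode entities such as &amp; and trim surrounding whitespace.
            string text = HtmlEntity.DeEntitize(link.InnerText).Trim();
            result.Add((text, link.GetAttributeValue("href", "")));
        }
        return result;
    }
}
```

Keeping the parsing in a function that accepts a string makes it easy to try out on saved pages. If you would rather let the library fetch the page itself, `new HtmlWeb().Load(url)` returns an `HtmlDocument` directly.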

C# Web Scraping with ScrapySharp

To include the package in Visual Studio, right-click the “References” node in the project, choose “Manage NuGet Packages”, and type “ScrapySharp” in the search bar.

To add this package in Visual Studio Code, run the following on the command line:

    dotnet add package ScrapySharp

What is the ScrapySharp Library

ScrapySharp is an open-source web scraping library for C# that is available as a NuGet package. It is an extension of Html Agility Pack that lets you scrape structured data using CSS selectors and supports dynamic web pages.

To get an HTML document using ScrapySharp, you can use the following code:

    static ScrapingBrowser browser = new ScrapingBrowser();

    static HtmlNode GetHtml(string url)
    {
        WebPage webPage = browser.NavigateToPage(new Uri(url));
        return webPage.Html;
    }

What is the ScrapySharp Library for

ScrapySharp is not as resource-intensive as Selenium, yet it can also scrape dynamic web pages. It is enough for everyday tasks, but for more complex ones Selenium is the better choice.
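The CSS selector support mentioned above comes from the `CssSelect` extension method in the `ScrapySharp.Extensions` namespace. The sketch below shows one way it might be used. The `CssTitles` class, the `GetTitles` method, and the `h2 a` selector are illustrative assumptions, not taken from the ScrapySharp documentation.

```csharp
using System.Collections.Generic;
using System.Linq;
using HtmlAgilityPack;
using ScrapySharp.Extensions;

public static class CssTitles
{
    // Return the trimmed text of every link nested inside an <h2> element.
    public static List<string> GetTitles(HtmlNode root)
    {
        return root.CssSelect("h2 a")
                   .Select(a => a.InnerText.Trim())
                   .ToList();
    }
}
```

With the `GetHtml` helper shown earlier, a call might look like `CssTitles.GetTitles(GetHtml("http://example.com"))`. Compared with the XPath query `.//h2/a`, the CSS form is often easier to read for anyone used to front-end work.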

Puppeteer for Headless Scraping

Puppeteer is a Node.js library that provides a high-level API to control headless Chrome or Chromium or to interact with the DevTools protocol. It also has a wrapper for C#, Puppeteer Sharp, which is available as a NuGet package.

What is the Puppeteer Library

Puppeteer provides the ability to work with headless browsers and integrates into most applications.

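Puppeteer has well-written documentation and usage examples on the official website. For example, the simplest application is:

    using PuppeteerSharp;

    // Example output path for the screenshot
    var outputFile = "screenshot.png";

    // Download a compatible Chromium build if it is not already present
    using var browserFetcher = new BrowserFetcher();
    await browserFetcher.DownloadAsync(BrowserFetcher.DefaultChromiumRevision);

    // Launch the browser in headless mode and open a new tab
    var browser = await Puppeteer.LaunchAsync(new LaunchOptions
    {
        Headless = true
    });
    var page = await browser.NewPageAsync();

    // Open the page and save a screenshot of it
    await page.GoToAsync("http://www.google.com");
    await page.ScreenshotAsync(outputFile);

What is the Puppeteer Library for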

Puppeteer also supports headless mode, which allows you to reduce the CPU time and RAM consumed.
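For scraping rather than screenshots, a headless page can be queried once its content has rendered. The sketch below is one possible approach, assuming a recent Puppeteer Sharp version in which `BrowserFetcher.DownloadAsync()` can be called without arguments. The URL and the `h1` selector are placeholders.

```csharp
using System;
using System.Threading.Tasks;
using PuppeteerSharp;

class Program
{
    static async Task Main()
    {
        // Download a compatible browser build if one is not already cached
        await new BrowserFetcher().DownloadAsync();

        await using var browser = await Puppeteer.LaunchAsync(new LaunchOptions
        {
            Headless = true
        });
        await using var page = await browser.NewPageAsync();
        await page.GoToAsync("http://example.com/");

        // Wait until the element we care about is present in the DOM
        await page.WaitForSelectorAsync("h1");

        // Run JavaScript in the page and bring the result back as a string array
        var headings = await page.EvaluateExpressionAsync<string[]>(
            "Array.from(document.querySelectorAll('h1')).map(e => e.textContent)");

        foreach (var heading in headings)
            Console.WriteLine(heading);
    }
}
```

Evaluating a script inside the page means the data is read after the site's own JavaScript has run, which is the main reason to pay the cost of a headless browser.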

The Best C# Web Scraping Library

Depending on the project, its goals, and your capabilities, the best library for different cases will vary. Therefore, to simplify the selection process and assist you in finding the most suitable C# web scraping library, we have compiled a comparative table of all the libraries discussed today.

| Library | Description | Advantages | Disadvantages |
|---|---|---|---|
| PhantomJS | Integration of PhantomJS with Selenium for web scraping | Allows scraping of dynamic web pages | Resource-intensive, requires additional installation of PhantomJS |
| Selenium | Cross-platform library with extensive documentation and active community | Supports various element search methods (XPath, CSS Selector, HTML tag) | Can be slower compared to other libraries, may require more code to perform certain actions |
| HtmlAgilityPack | Builds a DOM tree from HTML and provides an easy-to-use interface | Suitable for simpler projects and beginners, no need for browser integration | Does not support certain advanced features provided by Selenium |
| ScrapySharp | Extension of HtmlAgilityPack with support for CSS selectors and dynamic web pages | Provides the ability to scrape dynamic web pages, less resource-intensive than Selenium | Limited documentation and community support, may not handle complex scenarios as effectively as Selenium |
| Puppeteer Sharp | C# wrapper for Puppeteer, which controls headless Chrome or Chromium and interacts with DevTools | Supports headless mode, well-documented, reduces CPU time and RAM consumption | Requires installation of a headless Chrome or Chromium browser, may have a learning curve for newcomers |

Now that you can see the differences in goals, advantages, and disadvantages of each, we hope it will be easier for you to make the right choice.

C# Proxy for Web Scraping

Some sites have checks and traps to detect bots that will prevent the scraper from collecting a lot of data. However, there are also workarounds.

For example, you can use headless browsers to mimic the actions of a real user, increase the delay between iterations, or use a proxy.
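Increasing the delay between iterations can be as simple as pausing after each request, ideally with some randomness so that requests are not evenly spaced. The sketch below uses only the .NET base class library. The `PoliteFetcher` class and the two-second base delay with up to three seconds of jitter are arbitrary assumptions for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Net.Http;
using System.Threading.Tasks;

public static class PoliteFetcher
{
    // Base delay plus a random extra of up to maxJitter.
    public static TimeSpan NextDelay(Random rng, TimeSpan baseDelay, TimeSpan maxJitter)
    {
        double extraMs = rng.NextDouble() * maxJitter.TotalMilliseconds;
        return baseDelay + TimeSpan.FromMilliseconds(extraMs);
    }

    // Download each URL in turn, pausing after every request.
    public static async Task<List<string>> FetchAllAsync(HttpClient client, IEnumerable<string> urls)
    {
        var rng = new Random();
        var pages = new List<string>();
        foreach (var url in urls)
        {
            pages.Add(await client.GetStringAsync(url));
            await Task.Delay(NextDelay(rng, TimeSpan.FromSeconds(2), TimeSpan.FromSeconds(3)));
        }
        return pages;
    }
}
```

Sequential requests with a randomized pause are slower than parallel downloads, but they put far less load on the target site and are less likely to trigger rate limits.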

Example of using a proxy:

    using System;
    using System.Net;

    public static void ProxyConnect()
    {
        // Replace IP:Port with the address and port of your proxy server
        WebProxy proxy = new WebProxy();
        proxy.Address = new Uri("http://IP:Port");

        // Create the request and route it through the proxy
        HttpWebRequest req = (HttpWebRequest)WebRequest.Create("https://example.com/");
        req.Proxy = proxy;
    }

A proxy server is a must-have for any C# web scraper. There are many options, such as SmartProxy, Luminati Network, and Blazing SEO. Free proxies are not always suitable for such purposes: they are often slow and unreliable. You can also create your own proxy network on a server, for example with Scrapoxy, an open-source proxy manager.

If you use a single proxy for too long, you risk having its IP address banned or blacklisted. To avoid blocking, you can use rotating residential proxies. By choosing a specific location to scrape from and constantly changing the IP address, the scraper can become virtually immune to IP blocking.
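Commercial rotating proxies usually handle the rotation on their side, but the idea can also be sketched in client code. The example below cycles through a fixed list of proxy addresses in round-robin order. The `ProxyRotator` class is a hypothetical helper, and the addresses passed to it are placeholders you would replace with real proxies.

```csharp
using System;
using System.Net;
using System.Net.Http;

public class ProxyRotator
{
    private readonly string[] _proxies;
    private int _next;

    public ProxyRotator(params string[] proxies)
    {
        if (proxies.Length == 0)
            throw new ArgumentException("At least one proxy address is required.");
        _proxies = proxies;
    }

    // Return proxy addresses in round-robin order.
    public string NextAddress()
    {
        string address = _proxies[_next];
        _next = (_next + 1) % _proxies.Length;
        return address;
    }

    // Build an HttpClient whose requests go through the next proxy in the list.
    public HttpClient CreateClient()
    {
        var handler = new HttpClientHandler
        {
            Proxy = new WebProxy(NextAddress()),
            UseProxy = true
        };
        return new HttpClient(handler);
    }
}
```

A scraper could call `CreateClient()` once per batch of pages so that consecutive batches leave from different IP addresses. This class is not thread-safe, so a parallel scraper would need to add locking around `NextAddress`.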

An alternative solution is to use our API within your scraper, which collects the requested data for you and effectively avoids blocking without you having to solve any captchas.

Conclusion and Takeaways

C# is a good option when it comes to creating a desktop scraper. There are fewer libraries for it than for Node.js or Python, but it is not inferior to them in terms of functionality. Moreover, if a highly secure parser is required, C# provides more options for implementation.

The libraries considered here make it possible to build more complex projects that request data, parse multiple pages, and extract data. Of course, the article does not list every library, only the most functional and best documented ones. Beyond those listed, there are other third-party libraries, not all of which have a NuGet package.