This article covers everything you need to scrape with Selenium in Python. No theory, just practical examples: from setting up the driver and browser options to managing sessions, handling dynamic content, and scaling scrapers. Tested on Selenium 4.36 (older versions may not work).

Setup and Management

Selenium 4.6+ includes Selenium Manager, which automatically finds and downloads a matching driver, so you no longer need to install ChromeDriver or GeckoDriver manually.

Install Selenium

Install the latest Selenium (4.36, at the time of writing):

pip install selenium

Driver Initialization

Initialize the webdriver for your browser:

from selenium import webdriver

# Chrome

driver = webdriver.Chrome()

# Firefox

driver = webdriver.Firefox()

# Edge

driver = webdriver.Edge()

# Safari (only for MacOS)

driver = webdriver.Safari()

driver.quit()

To use a specific driver binary, download it and specify its path:

from selenium import webdriver

from selenium.webdriver.chrome.service import Service

service = Service("/path/to/chromedriver")

driver = webdriver.Chrome(service=service)

In that case, Selenium will use the driver you pointed to instead of the one resolved by Selenium Manager.

Context Manager for Auto-Quit

A context manager automatically calls driver.quit() when the with block ends, even if an error occurs:

from selenium import webdriver

with webdriver.Chrome() as driver:
    driver.get("https://example.com")
    print(driver.title)

When running multiple scrapers, this pattern prevents orphaned browser processes, forgotten driver.quit() calls, and the resulting memory leaks.

Browser Options

Control how Selenium launches the browser (headless, user-agent, resource blocking, and more) using the Options classes and Chrome DevTools Protocol (CDP).

Headless Mode

Since Chrome 109 (2023), the recommended way to run headless is the new headless mode:

from selenium import webdriver

from selenium.webdriver.chrome.options import Options

options = Options()

options.add_argument("--headless=new")

driver = webdriver.Chrome(options=options)

Headless mode saves CPU and RAM and starts faster because it doesn’t render the UI.

Window Size and User-Agent

When using a WebDriver, customize at least the basic fingerprint settings, such as window size and User-Agent. In headless mode, Chrome opens at 800×600 by default, which some sites may treat as suspicious.

When setting a custom size, keep it within the actual visible area (window.innerWidth/window.innerHeight) and avoid unrealistic dimensions (for example, 500×500 or 1000×2000).

from selenium import webdriver

from selenium.webdriver.chrome.options import Options

options = Options()

options.add_argument("--window-size=1920,1020")

options.add_argument("user-agent=UserAgent/1.0")

driver = webdriver.Chrome(options=options)

# Check headers

driver.get("https://httpbin.org/headers")

print(driver.page_source)

driver.quit()

We maintain a list of the latest User Agents on our blog.

Disable JS/Images (prefs/CDP)

The --disable-images and --disable-javascript flags no longer work in modern versions of Chrome.

# These flags do NOT work in modern Chrome
options.add_argument("--disable-images")
options.add_argument("--disable-javascript")

Chrome preferences let you configure profile options before launch, for example, enabling or disabling JavaScript, images, or notifications. This feature works only in Chrome and Chromium.

from selenium import webdriver
from selenium.webdriver.chrome.options import Options

options = Options()
prefs = {
    "profile.managed_default_content_settings.images": 2,  # disable images
    "profile.managed_default_content_settings.javascript": 2,  # disable JS
}
options.add_experimental_option("prefs", prefs)
driver = webdriver.Chrome(options=options)

For Firefox 120+ and Selenium 4.10+, Firefox preferences work the same way using set_preference().

from selenium import webdriver

from selenium.webdriver.firefox.options import Options

options = Options()

options.set_preference("permissions.default.image", 2) # disable images

options.set_preference("javascript.enabled", False) # disable JS

driver = webdriver.Firefox(options=options)

Selenium 4+ integrates with the Chrome DevTools Protocol (CDP), providing access to low-level browser features like resource blocking, network control, and more.

from selenium import webdriver

from selenium.webdriver.chrome.options import Options

options = Options()

driver = webdriver.Chrome(options=options)

# Enable Network domain for CDP

driver.execute_cdp_cmd("Network.enable", {})

# Block selected resource types

driver.execute_cdp_cmd("Network.setBlockedURLs", {"urls": ["*.png", "*.jpg", "*.css"]})

execute_cdp_cmd() works only in Chromium-based browsers: CDP support for Firefox was deprecated and then fully removed in Selenium 4.29.

Navigation

All navigation actions work within the active browser session.

`get`, `refresh`, `back`, `forward`

When scraping, you’ll use four basic actions: open, refresh, go back, and go forward:

from selenium import webdriver

driver = webdriver.Chrome()

driver.get("https://example.com") # Open a page

driver.refresh() # Reload

driver.back() # Go back

driver.forward() # Go forward

Page Properties: `title`, `current_url`, `page_source`

To verify the current page, use current_url and title. page_source returns the full HTML.

from selenium import webdriver

driver = webdriver.Chrome()

driver.get("https://example.com")

print(driver.title) # Page title

print(driver.current_url) # Current URL

print(driver.page_source) # Full HTML

Use page_source only when you need the full HTML, for example, to store it or pass it to an LLM. For targeted data extraction, use find_element() or find_elements().

Following Links (via `click` or `get`)

You can follow links in two ways: by clicking an element or by navigating directly with get().

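from selenium.webdriver.common.by import By

driver.get("https://example.com")

# Option 1: click the link element
link = driver.find_element(By.LINK_TEXT, "More information...")
link.click()

# Option 2: read the href and navigate directly
driver.back()  # return to the page that contains the link
link = driver.find_element(By.LINK_TEXT, "More information...")  # re-locate: the old reference is stale after navigation
url = link.get_attribute("href")
driver.get(url)

Use click() when you need to emulate a real user or when get() isn’t possible.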

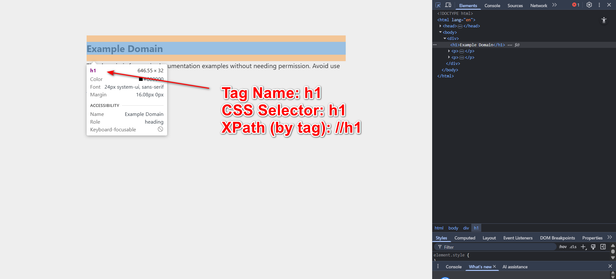

Locating Elements

Selenium 4 uses the By locator API. The older find_element_by_* methods were deprecated in 4.0 and removed in 4.3. Import locators from selenium.webdriver.common.by:

from selenium import webdriver
from selenium.webdriver.common.by import By

Locators: `id`, `name`, `css`, `xpath`

Basic locator examples:

# By Tag Name

element_tag = driver.find_element(By.TAG_NAME, "h1")

# By CSS Selector

element = driver.find_element(By.CSS_SELECTOR, "h1")

# By XPath

element = driver.find_element(By.XPATH, "//h1")

Also available: ID, NAME, CLASS_NAME, LINK_TEXT, PARTIAL_LINK_TEXT. For web scraping with XPath in Selenium, see our dedicated article.

`find_element` vs `find_elements`

find_element() returns the first matching element and raises NoSuchElementException if nothing matches. find_elements() returns a list of all matches, which is empty if nothing matches.

# Single element (raises NoSuchElementException if not found)

button = driver.find_element(By.CSS_SELECTOR, "button.submit")

# Multiple elements (returns a list, empty if not found)

links = driver.find_elements(By.TAG_NAME, "a")

print(f"Found {len(links)} links")

Using DevTools and Shadow DOM

Shadow DOM isolates a component’s internals from the global document. Standard querySelector and other locators often can’t access elements inside a shadow root.

<custom-card>

#shadow-root

<div class="title">You can't scrape me</div>

</custom-card>

For pages using Shadow DOM, use JavaScript to access shadow roots:

host = driver.find_element(By.CSS_SELECTOR, "custom-card")

shadow_root = driver.execute_script("return arguments[0].shadowRoot", host)

title = shadow_root.find_element(By.CSS_SELECTOR, ".title")

Data Extraction

Selenium extracts visible text and attributes from the DOM via WebElement.

`element.text` Normalization

.text returns the text as rendered on the page, so it may contain extra spaces, newlines, non-breaking spaces, or invisible characters. Clean it before saving (strip, normalize whitespace, replace NBSP, etc.):

element = driver.find_element("css selector", "h1.title")

text = element.text.strip() # remove leading/trailing spaces

text = text.replace("\u00A0", " ") # replace non-breaking spaces

`get_attribute` (href, src, value)

.get_attribute() returns internal HTML attributes (such as href, src, or value), not visible text.

# Links

link = driver.find_element("css selector", "a.download")

href = link.get_attribute("href")

# Images

image = driver.find_element("css selector", "img#logo")

src = image.get_attribute("src")

Extract Lists and Tables

Use CSS selectors and .text to extract lists and tables.

# Extract a list

items = driver.find_elements("css selector", "ul#menu li")

menu = [item.text.strip() for item in items]

# Extract a table
rows = driver.find_elements("css selector", "table#data tr")
table_data = []
for row in rows:
    cols = row.find_elements("tag name", "td")
    if cols:  # skip header rows that contain only <th> cells
        table_data.append([col.text.strip() for col in cols])

Export to CSV/JSON

Structure extracted data into variables that are easy to save. Then export to CSV or JSON.

import csv
import json

# CSV
with open("data.csv", "w", newline="", encoding="utf-8") as f:
    writer = csv.writer(f)
    writer.writerow(["Name", "Price"])
    writer.writerows(table_data)

# JSON
with open("data.json", "w", encoding="utf-8") as f:
    json.dump(table_data, f, ensure_ascii=False, indent=2)

Waiting

Modern web pages often load elements dynamically. Interacting with them too early can cause NoSuchElementException or StaleElementReferenceException.

Implicit vs Explicit Waits

An implicit wait sets a global timeout for all element searches.

driver.implicitly_wait(10) # wait up to 10s

It applies to every find_element(s) call: if the element is missing, the call blocks for up to the full timeout before failing.

element = driver.find_element(By.ID, "login")

An explicit wait pauses execution until a specific condition is met. It’s more reliable than an implicit wait.

from selenium import webdriver

from selenium.webdriver.common.by import By

from selenium.webdriver.support.ui import WebDriverWait

from selenium.webdriver.support import expected_conditions as EC

driver = webdriver.Chrome()

driver.get("https://example.com")

wait = WebDriverWait(driver, 10)

# Common conditions:

wait.until(EC.presence_of_element_located((By.ID, "submit"))) # in DOM via ID

wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, ".item"))) # in DOM via CSS

wait.until(EC.visibility_of_element_located((By.ID, "submit"))) # visible via ID

wait.until(EC.visibility_of_element_located((By.NAME, "email"))) # visible via name

wait.until(EC.element_to_be_clickable((By.ID, "submit"))).click() # clickable

wait.until(EC.text_to_be_present_in_element((By.TAG_NAME, "h1"), "Welcome")) # text present

wait.until(EC.url_contains("dashboard")) # URL contains

driver.quit()

If you forget to call .until() when using explicit waits, no waiting will occur.
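To make the behavior of an explicit wait more concrete, here is a simplified, Selenium-free sketch of the kind of polling loop that `.until()` performs: call a condition repeatedly until it returns something truthy or the timeout expires. The `wait_until` helper and its parameter names are hypothetical and only illustrate the idea; this is not Selenium's implementation.

```python
import time


def wait_until(condition, timeout=10.0, poll_interval=0.5):
    """Call `condition()` until it returns a truthy value or `timeout` seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_interval)


# Usage: a stand-in condition that succeeds on the third poll
attempts = {"count": 0}


def element_appeared():
    attempts["count"] += 1
    return "element" if attempts["count"] >= 3 else None


found = wait_until(element_appeared, timeout=2.0, poll_interval=0.01)
```

The real WebDriverWait follows the same pattern, but it passes the driver to the condition, ignores NoSuchElementException between polls by default, and raises Selenium's own TimeoutException.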

Handling Stale Elements

A StaleElementReferenceException occurs when an element is removed or replaced in the DOM – for example, after AJAX updates, partial re-renders, or frame switches. Re-locate the element whenever the DOM changes:

from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
from selenium.common.exceptions import StaleElementReferenceException

driver = webdriver.Chrome()
driver.get("https://example.com")
wait = WebDriverWait(driver, 10)

old_element = driver.find_element(By.ID, "submit")
try:
    old_element.click()
except StaleElementReferenceException:
    # The DOM was replaced, so the old reference is dead: re-locate and retry
    fresh_element = wait.until(EC.element_to_be_clickable((By.ID, "submit")))
    fresh_element.click()

Interactions

Below, you’ll find the common interactions you’ll need when building a scraper.

`click`, JS-click Fallback

Use the standard Selenium click when possible, and fall back to a JavaScript click only for stubborn elements.

from selenium import webdriver
from selenium.common.exceptions import ElementClickInterceptedException
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC

driver = webdriver.Chrome()
driver.get("https://example.com")

button = WebDriverWait(driver, 10).until(
    EC.element_to_be_clickable((By.ID, "submit-btn"))
)
try:
    # Standard click
    button.click()
except ElementClickInterceptedException:
    # JS fallback when another element covers the button
    driver.execute_script("arguments[0].click();", button)

`send_keys`, Special Keys

Sometimes, it’s easier to send keys (like Enter) instead of clicking a button. Use keys to simplify the script when possible.

from selenium import webdriver

from selenium.webdriver.common.by import By

from selenium.webdriver.common.keys import Keys

driver = webdriver.Chrome()

driver.get("https://www.google.com/")

input_field = driver.find_element(By.CSS_SELECTOR, "textarea[name='q']")

input_field.send_keys("HasData Selenium complete guide")

input_field.send_keys(Keys.RETURN) # press Enter

Forms, Checkboxes, Selects

Form filling is common when login is required before scraping.

from selenium.webdriver.support.ui import Select

# Fill a form

driver.find_element(By.NAME, "username").send_keys("user123")

driver.find_element(By.NAME, "password").send_keys("secret")

# Submit form

driver.find_element(By.ID, "login-form").submit()

# Checkbox / radio

driver.find_element(By.ID, "agree").click()

# Select dropdown

dropdown = Select(driver.find_element(By.ID, "options"))

dropdown.select_by_visible_text("Option 2")

Auth is a big topic; see our dedicated article on handling authentication while scraping.

`execute_script` Snippets

Selenium can execute custom JavaScript on the page. Test the JS in the browser console before adding it to your script.

# Scroll an element into view

element = driver.find_element(By.ID, "bottom")

driver.execute_script("arguments[0].scrollIntoView();", element)

# Get computed style

color = driver.execute_script("return window.getComputedStyle(arguments[0]).color;", element)

print(color)

Scrolling and Dynamic Content

For pages that load content on scroll (e.g. Google Maps), scroll before extracting data. See our article for full details on scrolling in Selenium.

`scrollIntoView`, Custom JS Scroll

Locate the target element and scroll it into view:

from selenium.webdriver.common.by import By

element = driver.find_element(By.CSS_SELECTOR, "#scroll-area")

driver.execute_script("arguments[0].scrollIntoView({behavior: 'smooth', block: 'center'});", element)

Or scroll by a specific pixel value:

# Scroll down 500 pixels

driver.execute_script("window.scrollBy(0, 500);")

Horizontal scroll works the same way, for example window.scrollBy(500, 0).

Infinite Scroll/Load-More Patterns

For infinite scrolling, use a loop with a clear exit condition to prevent the script from hanging:

import time

last_height = driver.execute_script("return document.body.scrollHeight")
while True:
    driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
    time.sleep(1)  # wait for new content to load
    new_height = driver.execute_script("return document.body.scrollHeight")
    if new_height == last_height:
        break  # reached bottom
    last_height = new_height

Scrolling Inside Elements and Iframes

To scroll inside an iframe, switch to it first, scroll, then switch back to the main window:

# Switch to iframe first

iframe = driver.find_element("css selector", "#iframe-id")

driver.switch_to.frame(iframe)

# Scroll inside a scrollable element

scrollable = driver.find_element("css selector", ".scrollable")

driver.execute_script("arguments[0].scrollTop = arguments[0].scrollHeight;", scrollable)

# Switch back to main page

driver.switch_to.default_content()

Always switch back to the top-level document after completing your actions inside an iframe.
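One way to make the switch back automatic is a small context manager, sketched below. The `inside_frame` name is hypothetical; it relies only on the `switch_to.frame()` and `switch_to.default_content()` calls shown above and returns to the top-level document even if the code inside the block raises.

```python
from contextlib import contextmanager


@contextmanager
def inside_frame(driver, frame):
    """Switch into `frame`, then always return to the top-level document."""
    driver.switch_to.frame(frame)
    try:
        yield driver
    finally:
        # Runs on normal exit and on exceptions alike
        driver.switch_to.default_content()


# Usage (assumes `driver` and `iframe` from the example above):
# with inside_frame(driver, iframe):
#     scrollable = driver.find_element("css selector", ".scrollable")
```

This mirrors the context-manager pattern used for driver.quit(): the cleanup step lives in one place instead of being repeated after every iframe interaction.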

Tabs and Alerts

Sites often open new tabs (target="_blank"). If your script doesn’t switch tabs, it will keep operating on the previous one.

Open/Switch Tabs and Windows

Here’s how you switch, close, and list windows/tabs:

# Get all open tabs

tabs = driver.window_handles # returns a list of window handles

# Switch to the new tab (usually the second one)

driver.switch_to.window(tabs[1])

# Close the new tab

driver.close()

# Switch back to the original tab

driver.switch_to.window(tabs[0])

Closing a tab doesn’t automatically switch back – call switch_to.window() to return to the previous tab.
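Indexing into window_handles assumes the new tab is always the second handle. A slightly more defensive sketch compares the handles before and after the action that opens the tab. The `switch_to_new_tab` helper is a hypothetical name, and it assumes a driver exposing `window_handles` and `switch_to.window()` as above.

```python
def switch_to_new_tab(driver, handles_before):
    """Switch to the first window handle that was not present in `handles_before`."""
    new_handles = [h for h in driver.window_handles if h not in handles_before]
    if not new_handles:
        raise RuntimeError("no new tab or window was opened")
    driver.switch_to.window(new_handles[0])
    return new_handles[0]


# Usage (assumes `driver` and a `link` element that opens a new tab):
# before = list(driver.window_handles)
# link.click()
# new_tab = switch_to_new_tab(driver, before)
```

Keeping the original handle from `before` also makes it straightforward to switch back after closing the new tab.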

Handle Alerts and Dialogs

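switch_to.alert handles native JavaScript dialogs (alert, confirm, prompt) that block access to content. Pop-ups built from HTML, such as newsletter subscriptions, terms of service, or age confirmations, are regular page elements: locate and click them like any other element.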

# Trigger an alert

driver.find_element("id", "alertButton").click()

# Switch to the alert

alert = driver.switch_to.alert

print(alert.text) # show alert text

# Accept or dismiss

alert.accept() # click OK

# alert.dismiss() # click Cancel

Always handle alerts in scripts that may trigger pop-ups. Otherwise, Selenium will throw an UnexpectedAlertPresentException.

Sessions and Authentication

If a site requires login, automate the process. It becomes essential when scaling scrapers.

Automate Logins (Form + Submit)

Basic login example:

driver.find_element(By.ID, "username").send_keys("my_user")

driver.find_element(By.ID, "password").send_keys("my_password")

driver.find_element(By.ID, "login-button").click()

See our dedicated article on authentication for details.

Cookies: Save/Load/Export

Basic cookie operations: get, add, delete. Export and import cookies for reuse.

import json
from selenium import webdriver

# --- Save cookies ---
driver = webdriver.Chrome()
driver.get("https://example.com")
# assume logged in already
with open("cookies.json", "w", encoding="utf-8") as f:
    json.dump(driver.get_cookies(), f)
driver.quit()

# --- Load cookies ---
driver = webdriver.Chrome()
driver.get("https://example.com")  # must open the domain first
with open("cookies.json", encoding="utf-8") as f:
    for cookie in json.load(f):
        driver.add_cookie(cookie)
driver.refresh()
driver.quit()

You can also serialize cookies with Python's built-in pickle module:

import pickle

# Save cookies
with open("cookies.pkl", "wb") as f:
    pickle.dump(driver.get_cookies(), f)

# Load cookies
with open("cookies.pkl", "rb") as f:
    for c in pickle.load(f):
        driver.add_cookie(c)

Preserve Sessions across Runs

Save and restore sessions using cookies. This works across sessions for the same domain.

import json
from selenium import webdriver

driver = webdriver.Chrome()
driver.get("https://example.com")
# after login
with open("cookies.json", "w", encoding="utf-8") as f:
    json.dump(driver.get_cookies(), f)
driver.quit()

# restore cookies
driver = webdriver.Chrome()
driver.get("https://example.com")
with open("cookies.json", encoding="utf-8") as f:
    for cookie in json.load(f):
        driver.add_cookie(cookie)
driver.refresh()
driver.quit()

A browser profile and user-data directory preserve cookies, local storage, cache, and session data.

from selenium import webdriver

from selenium.webdriver.chrome.options import Options

options = Options()

options.add_argument("user-data-dir=./chrome-profile") # custom Chrome profile

driver = webdriver.Chrome(options=options)

driver.get("https://example.com")

print(driver.current_url) # session preserved

driver.quit()

To continue working with an existing browser session:

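from selenium import webdriver
from selenium.webdriver.remote.webdriver import WebDriver

# Original driver
driver1 = webdriver.Chrome()
session_id = driver1.session_id
executor_url = driver1.command_executor._url  # private attribute; its name may differ between Selenium versions

# Later: attach to the same session
# desired_capabilities was removed in Selenium 4.10, so pass options instead.
# Note: creating the Remote driver opens a new session first.
driver2 = WebDriver(command_executor=executor_url, options=webdriver.ChromeOptions())
driver2.session_id = session_id  # redirect subsequent commands to the original session

Local and session storage are useful for SPAs (React/Vue/Angular) and token-based auth.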

driver = webdriver.Chrome()

driver.get("https://example.com")

# Set a token in localStorage

driver.execute_script("localStorage.setItem('auth_token','12345')")

# Retrieve token

token = driver.execute_script("return localStorage.getItem('auth_token')")

Files and Snapshots

To manage file downloads, configure browser preferences to define the download directory and disable prompts. Selenium can retrieve page HTML, screenshots, and image URLs, but downloading image files requires a separate HTTP client, such as Requests.

Trigger and Monitor Downloads

This method works for portals that deliver files through a “Download” button – for example, reports or statements:

from selenium import webdriver

options = webdriver.ChromeOptions()

options.add_experimental_option("prefs", {"download.default_directory": "/downloads"})

driver = webdriver.Chrome(options=options)

driver.get("https://example.com")

driver.find_element("id", "downloadButton").click()

driver.quit()

Auto-Download Setup

Configure Selenium to download files without prompts – otherwise, a save dialog may block the script.

options = webdriver.ChromeOptions()

options.add_experimental_option("prefs", {

"download.default_directory": "/downloads", # folder for downloads

"download.prompt_for_download": False, # don't ask

"safebrowsing.enabled": True # bypass safe-browsing warning

})

driver = webdriver.Chrome(options=options)

Save HTML/Screenshots

Save the page HTML and capture screenshots for debugging and archiving.

driver = webdriver.Chrome()
driver.get("https://example.com")

# Save HTML
with open("page.html", "w", encoding="utf-8") as f:
    f.write(driver.page_source)

# Save screenshot
driver.save_screenshot("screenshot.png")

Download Images by `src`

Find <img> elements and read their src attributes. To download the files, use an HTTP client such as requests.

driver = webdriver.Chrome()
driver.get("https://example.com/")
images = driver.find_elements("tag name", "img")
for img in images:
    print(img.get_attribute("src"))

Some sites serve images via blob: or data: URIs. In that case, decode the Base64 payload or intercept network traffic (for example, using Selenium Wire) to retrieve the image files.
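For the data: URI case, decoding can be done with the standard library alone. The sketch below uses a hypothetical `decode_data_uri` helper and assumes the common `data:<mime>;base64,<payload>` layout; it does not apply to blob: URLs, which exist only inside the browser.

```python
import base64
from urllib.parse import unquote_to_bytes


def decode_data_uri(uri):
    """Return (mime_type, raw_bytes) for a data: URI."""
    if not uri.startswith("data:"):
        raise ValueError("not a data: URI")
    # Split "image/png;base64" (header) from the encoded payload
    header, _, payload = uri[len("data:"):].partition(",")
    is_base64 = header.endswith(";base64")
    mime_type = header.split(";")[0] or "text/plain"
    data = base64.b64decode(payload) if is_base64 else unquote_to_bytes(payload)
    return mime_type, data


# Usage with a tiny stand-in payload (not a real image)
sample_uri = "data:image/png;base64," + base64.b64encode(b"\x89PNG").decode("ascii")
mime, raw = decode_data_uri(sample_uri)
```

The returned bytes can be written to disk with open(path, "wb"), using the MIME type to pick a file extension.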

Debugging and Error Handling

Add screenshots, logs, and retries to stabilize scrapers.

Screenshots on Error

Don’t capture every page you scrape – save screenshots only when errors occur. It makes debugging faster.

try:
    element = driver.find_element("id", "nonexistent")
except Exception:
    driver.save_screenshot("error_screenshot.png")
    raise  # re-raise after saving the screenshot so the error isn't hidden

Logging Actions

Log errors along with the page context where they occurred. Full-trace logging is optional.

import logging

from selenium import webdriver

# Basic logging setup

logging.basicConfig(level=logging.INFO, format="%(asctime)s [%(levelname)s] %(message)s")

with webdriver.Chrome() as driver:
    logging.info("Opening website...")
    driver.get("https://example.com")
    logging.info("Website loaded. Current URL: %s", driver.current_url)

Common Exceptions and Retry Strategies

Some errors are transient. A reload and a short wait often help. Here are some common exceptions:

| Exception | When it happens | How to handle it |
|---|---|---|
| NoSuchElementException | Element not found on the page | Use waits (WebDriverWait) before searching; retry or log the failure |
| StaleElementReferenceException | Element was on page but became stale (DOM updated) | Refetch the element or wrap in retry loop |
| TimeoutException | Explicit wait timed out | Increase wait, check selectors, or handle gracefully |
| ElementClickInterceptedException | Element is covered by another element (modal, sticky header) | Scroll into view, wait for overlay to disappear, or use JS click |
| ElementNotInteractableException | Element exists but cannot be interacted with | Wait for visibility, ensure element enabled, or use JS click |
| WebDriverException | Low-level driver/browser error | Can happen on crashes; retry or restart browser session |
| InvalidSelectorException | Bad CSS/XPath selector | Double-check selector syntax; often a typo |
| SessionNotCreatedException | Driver cannot start browser (version mismatch) | Update Selenium or browser, or use Selenium Manager to auto-handle |

Example:

from selenium import webdriver

from selenium.common.exceptions import NoSuchElementException, StaleElementReferenceException, TimeoutException

driver = webdriver.Chrome()

driver.get("https://example.com")

try:
    el = driver.find_element("id", "dynamic-element")
    print(el.text)
except NoSuchElementException:
    print("Element not found!")
except StaleElementReferenceException:
    print("Element became stale!")
except TimeoutException:
    print("Operation timed out!")
finally:
    driver.quit()

Set a clear limit on retry attempts when implementing retries.
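A bounded retry can be sketched as a small generic helper. The `retry` function, its parameters, and the linear backoff are illustrative assumptions and not part of Selenium; the helper takes any callable plus the exception types worth retrying.

```python
import time


def retry(action, exceptions, attempts=3, delay=1.0):
    """Run `action()` up to `attempts` times, retrying only on `exceptions`."""
    for attempt in range(1, attempts + 1):
        try:
            return action()
        except exceptions:
            if attempt == attempts:
                raise  # out of attempts: surface the last error
            time.sleep(delay * attempt)  # linear backoff between attempts


# Usage with a stand-in action that fails twice before succeeding
calls = {"count": 0}


def flaky():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ValueError("transient failure")
    return "ok"


result = retry(flaky, (ValueError,), attempts=3, delay=0)
```

With Selenium, the action could be a lambda such as `lambda: driver.find_element("id", "dynamic-element").text`, and `exceptions` a tuple like `(NoSuchElementException, StaleElementReferenceException)`.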

Network and Proxy

Sometimes, data is available via API endpoints or XHRs that are easier to parse. When scraping is required, network skills can help you make scrapers faster and more reliable.

CDP: Block Resources

Use CDP (execute_cdp_cmd) to block images, fonts, CSS, or URL patterns to reduce bandwidth and speed up scraping.

driver.execute_cdp_cmd("Network.enable", {})

driver.execute_cdp_cmd("Network.setBlockedURLs", {"urls": [

"*.jpg", "*.png", "*.gif", "*.woff", "*.css"

]})

Selenium Wire: Inspect Requests

selenium-wire is a convenient wrapper that exposes traffic. It’s useful for debugging or extracting XHR/JSON responses.

pip install selenium-wire

Define a URL pattern – a unique substring – to capture the XHR requests that contain the data you need.

from seleniumwire import webdriver

import json

driver = webdriver.Chrome()

driver.get("https://example.com")

req = driver.wait_for_request("/api/", timeout=10) # wait for XHR

data = json.loads(req.response.body.decode("utf-8"))

Proxy Config (Auth/Rotation)

Browsers handle proxy authentication through UI prompts or built-in mechanisms. Injecting credentials in URLs or command-line flags is often ignored or blocked.

# attempt to include credentials in the proxy URL (frequently ignored)

options.add_argument("--proxy-server=http://user:pass@host:port")

To authenticate with credentials embedded in the proxy URL, use selenium-wire:

from seleniumwire import webdriver

opts = {
    'proxy': {
        'http': 'http://user:pass@host:port',
        'https': 'https://user:pass@host:port'
    }
}

driver = webdriver.Chrome(seleniumwire_options=opts)
driver.get("https://httpbin.org/ip")

On our blog, you can learn more about what proxies are, where to find free or paid proxy providers, and how to use them with Python.
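For rotation, one simple approach is to cycle through a proxy list and build a fresh options dict for each new driver. This is a minimal sketch: the helper names and proxy URLs are placeholders, and for brevity it reuses the same URL for both the http and https keys of the selenium-wire style dict shown above.

```python
from itertools import cycle


def proxy_options(proxy_url):
    """Build a selenium-wire style options dict for one proxy URL."""
    return {"proxy": {"http": proxy_url, "https": proxy_url}}


def rotating_proxy_options(proxy_urls):
    """Yield an options dict for each proxy in turn, looping forever."""
    for proxy_url in cycle(proxy_urls):
        yield proxy_options(proxy_url)


# Placeholder proxies; replace with real endpoints
proxies = [
    "http://user:pass@proxy1.example:8000",
    "http://user:pass@proxy2.example:8000",
]
rotation = rotating_proxy_options(proxies)
first, second, third = next(rotation), next(rotation), next(rotation)
```

Each new browser would then be created with `seleniumwire_options=next(rotation)`, so consecutive sessions leave through different proxies.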

Bypassing Cloudflare and Bot Protections

Anti-bot defenses are among the most common challenges. There’s no universal fix – protections and bypasses evolve constantly.

If a site has weak bot protection, running SeleniumBase in undetected (UC) mode can help. SeleniumBase is a framework built on top of Selenium, and its UC Mode aims to make the browser less detectable.

pip install seleniumbase

Example:

from seleniumbase import SB

with SB(uc=True, headless=False) as sb:
    url = "https://httpbin.org/headers"
    sb.uc_open_with_reconnect(url, 3)
    html = sb.get_page_source()

You may want to learn more about CAPTCHA and Cloudflare 1020 bypass.

Scaling Strategies for Scrapers

Scale Selenium scrapers across multiple browsers or machines using Grid or Scrapy.

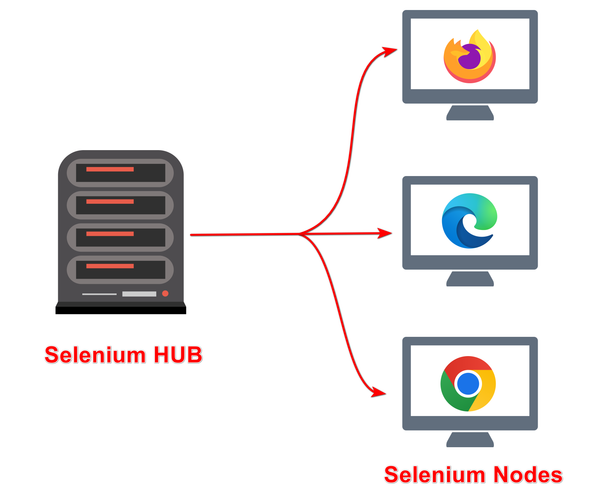

Selenium Grid/Multiple Instances

Use Selenium Grid to run scripts in parallel. It was built with Java but works with any client (including Python).

Download the Selenium server JAR and start a hub:

java -jar selenium-server-<version>.jar hub

By default, the hub listens on http://localhost:4444 (in Grid 4, the web UI is at http://localhost:4444/ui). Start a node:

java -jar selenium-server-<version>.jar node --hub http://localhost:4444

Configure Python to use the remote hub:

from selenium import webdriver

grid_url = "http://localhost:4444/wd/hub"

# desired_capabilities was removed in Selenium 4.10, so pass an options object instead
driver1 = webdriver.Remote(command_executor=grid_url, options=webdriver.ChromeOptions())
driver2 = webdriver.Remote(command_executor=grid_url, options=webdriver.ChromeOptions())

driver1.get("https://example.com")
driver2.get("https://hasdata.com")

driver1.quit()
driver2.quit()

driver1 and driver2 send jobs to the hub, which distributes them across nodes. If a node supports two browsers, both sessions run. If it only supports one, the second waits.
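To actually run sessions concurrently from one script, each session needs its own thread or process. The sketch below uses a thread pool. The function names and the `make_driver` factory parameter are hypothetical; the factory is expected to return a driver with `get()`, `title`, and `quit()`, such as the Remote drivers above.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_title(make_driver, url):
    """Open one browser session, load `url`, and always quit the driver."""
    driver = make_driver()
    try:
        driver.get(url)
        return url, driver.title
    finally:
        driver.quit()


def fetch_titles(make_driver, urls, max_workers=2):
    """Run one session per URL, with at most `max_workers` in flight."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves the input order of `urls`
        return list(pool.map(lambda u: fetch_title(make_driver, u), urls))


# Usage (assumes `grid_url` and `webdriver` from the example above):
# make_driver = lambda: webdriver.Remote(
#     command_executor=grid_url, options=webdriver.ChromeOptions()
# )
# print(fetch_titles(make_driver, ["https://example.com", "https://hasdata.com"]))
```

Keeping `max_workers` at or below the number of browser slots the Grid offers avoids sessions queuing at the hub.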

An alternative is Selenoid, a lighter and Docker-based solution, but support was discontinued last year.

Combine Selenium with Scrapy

Use scrapy-selenium to let Scrapy manage scaling while Selenium handles browser rendering.

pip install scrapy scrapy-selenium

Here’s how you add Selenium to a Scrapy spider:

# In your Scrapy spider
import scrapy
from scrapy_selenium import SeleniumRequest

class MySpider(scrapy.Spider):
    name = 'my_spider'

    def start_requests(self):
        yield SeleniumRequest(url='https://example.com', callback=self.parse)

    def parse(self, response):
        # Your parsing logic here; as a placeholder, yield the page title
        yield {"title": response.css("title::text").get()}

Use HasData API

The easiest way to scale your scraper and bypass bot defenses is to use a scraping API. You need an API key to use it.

Here’s an example of an async script that limits concurrency per account and uses an LLM to extract site names and emails:

import asyncio

import aiohttp
import requests

api_key = "YOUR_API_KEY"


# Get available concurrency for your API key
def get_available_concurrency():
    url = "https://api.hasdata.com/user/me/usage"
    headers = {
        "Content-Type": "application/json",
        "x-api-key": api_key
    }
    response = requests.get(url, headers=headers)
    if response.status_code == 200:
        data = response.json()
        # return available concurrency, fallback to 1
        return data.get("data", {}).get("availableConcurrency", 1)
    return 1


# Build payload for scraping a single URL
def make_payload(url):
    return {
        "url": url,
        "proxyType": "datacenter",
        "proxyCountry": "US",
        "js_rendering": True,
        "aiExtractRules": {
            "companyName": {"description": "Company name", "type": "string"},
            "email": {"description": "Email addresses", "type": "string"}
        }
    }


# Async function to scrape one site
async def scrape_site(session, url):
    payload = make_payload(url)
    async with session.post(
        "https://api.hasdata.com/scrape/web",
        headers={
            "x-api-key": api_key,
            "Content-Type": "application/json"
        },
        json=payload
    ) as response:
        if response.status == 200:
            data = await response.json()
            ai_resp = data.get("aiResponse", {})
            company = ai_resp.get("companyName", "-")
            emails = ai_resp.get("email", "")
            return {
                "url": url,
                "company": company,
                "emails": emails
            }
        else:
            return {"url": url, "error": response.status}


# Async scrape for multiple sites
async def scrape_all(urls):
    concurrency = get_available_concurrency()
    # limit concurrent connections to API's availableConcurrency
    connector = aiohttp.TCPConnector(limit=concurrency)
    async with aiohttp.ClientSession(connector=connector) as session:
        tasks = [scrape_site(session, url) for url in urls]
        results = await asyncio.gather(*tasks)
        return results


# Run scraper
if __name__ == "__main__":
    urls_to_scrape = [
        "https://example.com",
        "https://hasdata.com",
        # add more URLs
    ]
    all_results = asyncio.run(scrape_all(urls_to_scrape))
    for r in all_results:
        print(r)