To scrape Google News effectively, you need to choose the extraction method that matches your scale: RSS Feeds for lightweight monitoring, Google Search (tbm=nws) for specific keyword tracking, or a dedicated Google News API for high-volume collection that bypasses rate limits and CAPTCHAs.

Available Data Points

Primary metadata extractable from Google News articles includes:

- Core Data: Headlines, Summaries, Descriptions, Links.

- Timing: Publication Dates (UTC), Timestamps.

- Source: Publisher Name, Source URL.

- Media: Thumbnails, Images.

Setup and Prerequisites

Ensure you have a Python environment with the following libraries:

    pip install requests feedparser pandas

    # Optional: for sentiment analysis and visualization later
    pip install nltk matplotlib

Extracting Structured Data from Google News RSS Feed

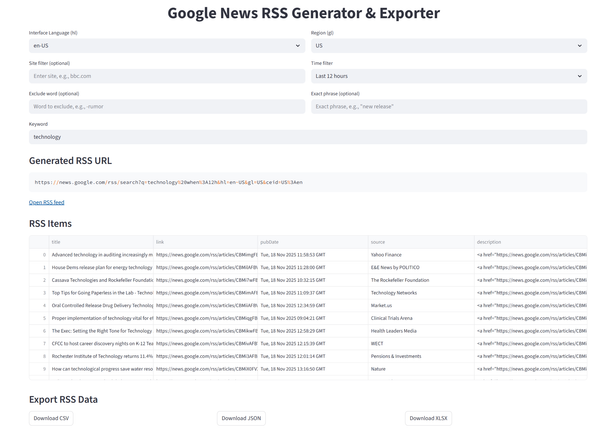

Google News RSS feeds utilize undocumented but predictable URL structures. This method is ideal for lightweight, real-time monitoring without heavy dependencies.

To get a feed, insert /rss into the standard URL path.

- Original: https://news.google.com/topics/CAAqIAgKIhpDQkFTRFFvSEwyMHZNRzFyZWhJQ1pXNG9BQVAB

- RSS Feed: https://news.google.com/rss/topics/CAAqIAgKIhpDQkFTRFFvSEwyMHZNRzFyZWhJQ1pXNG9BQVAB

Be aware that XML feeds may occasionally lack complete metadata or encounter rate limits during high-volume fetching. Since URL structures change without notice, ensure your parser includes error handling.
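The /rss rewrite described above can be sketched as a small helper. This is a minimal illustration rather than an official rule: the function name `to_rss_url` and the topic ID `ABC123` are hypothetical, and it assumes the only change needed is prefixing the URL path with /rss.

```python
from urllib.parse import urlsplit, urlunsplit


def to_rss_url(url: str) -> str:
    """Return the RSS variant of a news.google.com URL by prefixing the path with /rss."""
    parts = urlsplit(url)
    if parts.netloc != "news.google.com":
        raise ValueError(f"Not a Google News URL: {url}")
    path = parts.path
    # Leave URLs that already point at the RSS endpoint untouched.
    if path == "/rss" or path.startswith("/rss/"):
        return url
    # Query parameters such as hl, gl, and ceid are carried over unchanged.
    return urlunsplit(parts._replace(path="/rss" + path))


# "ABC123" is a placeholder topic ID used only for illustration.
print(to_rss_url("https://news.google.com/topics/ABC123?hl=en-US&gl=US&ceid=US:en"))
```

Rejecting other hosts up front makes it harder to silently build a feed URL from the wrong site.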

Four main endpoints:

| Type | URL Format | Description |
|---|---|---|
| Top news | https://news.google.com/rss | Main news feed, optionally filtered by language and region |
| By topic | https://news.google.com/rss/topics/<TOPIC_ID> | News for a specific topic (requires topic ID) |
| By topic section | https://news.google.com/rss/topics/<TOPIC_ID>/sections/<SECTION_ID> | News for a specific section within a topic (requires section ID) |
| Search | https://news.google.com/rss/search?q=<QUERY> | RSS feed for custom keyword search, supports modifiers like site:, when: |

Search feed parameters:

| Parameter | Example | Description |
|---|---|---|
| q | q=site:bbc.com when:1d | Search query for keywords, phrases, site filters, or time modifiers |
| hl | hl=en-US | Interface language (controls localization of results) |
| gl | gl=US | Geographical region (country code for results) |
| ceid | ceid=US:en | Country and language code for content feed, usually matches hl and gl |

Modifiers:

| Modifier | Example | Description |
|---|---|---|
| site: | site:bbc.com | Limit search to a specific website or domain |
| when: | when:1d | Restrict results to a time range (1h, 12h, 1d, 7d) |
| -word | -rumor | Exclude a word from results |
| "phrase" | "new release" | Exact match for a phrase |
| OR | apple OR samsung | Logical OR between terms |

To scrape Google News via RSS:

- Create a script to generate the RSS URL with the desired parameters.

- Parse the XML from the feed. It’s best to use a specialized library like feedparser for handling RSS feeds.

Here’s a small Python example to generate a search-based RSS feed URL:

    import urllib.parse

    # Base parameters
    base_url = "https://news.google.com/rss/search"
    hl = "en-US"
    gl = "US"
    ceid = "US:en"

    # Search modifiers
    keyword = "tech"
    site_filter = "site:bbc.com"
    time_filter = "when:1d"  # when:1h, when:12h, when:1d, when:7d
    exclude_word = "-rumor"
    exact_phrase = '"new release"'

    query_parts = [
        keyword,
        site_filter,
        time_filter,
        exclude_word,
        exact_phrase,
    ]

    # Join the non-empty parts, then URL-encode the query and ceid
    query = " ".join(part for part in query_parts if part)
    encoded_query = urllib.parse.quote(query)
    encoded_ceid = urllib.parse.quote(ceid)

    rss_url = f"{base_url}?q={encoded_query}&hl={hl}&gl={gl}&ceid={encoded_ceid}"
    print(rss_url)

Parse the RSS feed and extract structured data:

    import feedparser

    feed = feedparser.parse(rss_url)

    items = []
    for entry in feed.entries:
        items.append({
            "title": entry.get("title", ""),
            "link": entry.get("link", ""),
            "pubDate": entry.get("published", ""),
            "source": entry.get("source", {}).get("title", "") if entry.get("source") else "",
            "description": entry.get("description", ""),
        })

A working Streamlit wrapper is publicly available for practical use and can be adapted for custom projects:

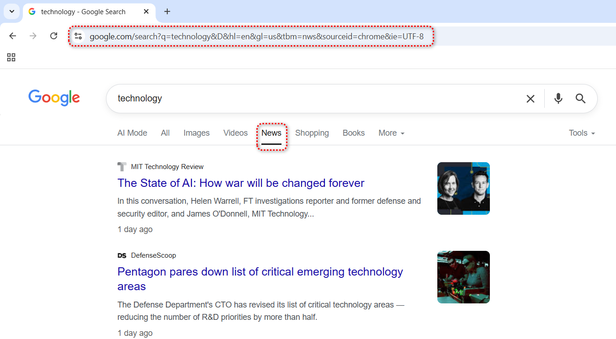
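Because feeds can arrive with missing metadata or a changed structure, it may help to see what defensive parsing looks like. The sketch below is a dependency-free illustration using only the standard library, not a replacement for feedparser. The function name `parse_rss_items` and the `SAMPLE` feed are made up for the example, and it assumes a plain RSS 2.0 layout with `item`, `title`, `link`, `pubDate`, and `source` elements.

```python
import xml.etree.ElementTree as ET
from datetime import timezone
from email.utils import parsedate_to_datetime


def parse_rss_items(xml_text: str) -> list[dict]:
    """Parse RSS 2.0 XML into dicts, tolerating malformed feeds and missing fields."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError:
        return []  # malformed or truncated XML: treat as an empty feed

    items = []
    for item in root.iter("item"):
        raw_date = item.findtext("pubDate", default="")
        try:
            # RSS dates use the RFC 822 format; normalize them to UTC ISO 8601.
            published = parsedate_to_datetime(raw_date).astimezone(timezone.utc).isoformat()
        except (TypeError, ValueError):
            published = ""  # missing or unparseable date
        items.append({
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
            "pubDate": published,
            "source": item.findtext("source", default=""),
        })
    return items


# Hypothetical two-item feed; the second item deliberately lacks pubDate and source.
SAMPLE = """<rss version="2.0"><channel>
<item><title>Example headline</title><link>https://example.com/a</link>
<pubDate>Mon, 06 Jan 2025 10:00:00 GMT</pubDate><source url="https://example.com">Example News</source></item>
<item><title>No date here</title><link>https://example.com/b</link></item>
</channel></rss>"""

for row in parse_rss_items(SAMPLE):
    print(row)
```

Returning empty strings instead of raising keeps one bad item from aborting a whole monitoring run.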

Scraping News Results from Google Search (Using tbm=nws)

The tbm=nws parameter filters standard Google Search results to show only news items. This method is essential for collecting historical data or specific keyword trends that RSS feeds miss.

Three main approaches:

- Headless Browsers (Selenium/Playwright): Flexible but resource-heavy. Requires constant maintenance to evade bot detection.

- LLM + Headless: Abstracts selector handling (e.g., Crawl4AI) but scales poorly due to cost and latency.

- SERP APIs: The enterprise standard. APIs like HasData handle IP rotation, CAPTCHAs, and HTML parsing server-side.

For production environments requiring high availability and IP rotation, we recommend dedicated scraping APIs over headless browsers to eliminate maintenance overhead.

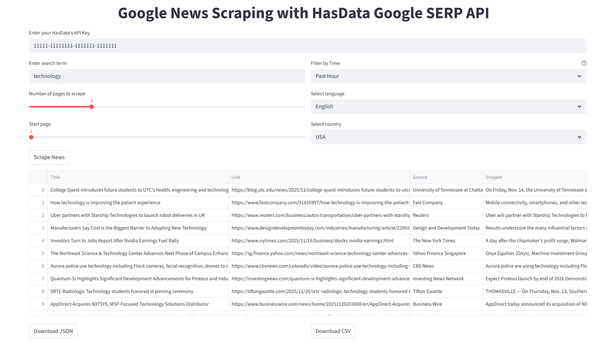

This script uses HasData’s Google SERP API to bypass blocking and extract structured CSV/JSON data immediately. Retrieve your API Key from the HasData dashboard.

    import requests
    import json
    import csv

    # Set up HasData’s Google SERP API endpoint and query parameters
    url = "https://api.hasdata.com/scrape/google/serp"
    params = {
        "q": "technology",                         # search keyword
        "location": "Austin,Texas,United States",  # geolocation for search
        "tbm": "nws",                              # news search mode
        "deviceType": "desktop",                   # emulate desktop browser
    }

    # API headers with your HasData API key
    headers = {
        "Content-Type": "application/json",
        "x-api-key": "HASDATA-API-KEY"
    }

    # Send request and parse JSON response
    response = requests.get(url, params=params, headers=headers)
    data = response.json()

    news_items = []

    # Extract relevant fields from each news result
    for item in data.get("newsResults", []):
        news_items.append({
            "position": item.get("position"),
            "title": item.get("title"),
            "link": item.get("link"),
            "source": item.get("source"),
            "snippet": item.get("snippet"),
            "date": item.get("date"),
            "thumbnail": item.get("thumbnail")
        })

    # Save results to JSON
    with open("news.json", "w", encoding="utf-8") as f:
        json.dump(news_items, f, ensure_ascii=False, indent=2)

    # Define CSV fields
    csv_fields = ["position", "title", "link", "source", "snippet", "date", "thumbnail"]

    # Save results to CSV
    with open("news.csv", "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=csv_fields)
        writer.writeheader()
        writer.writerows(news_items)

Google limits results to 10 per page. To paginate through results, iterate requests by incrementing the start parameter (e.g., start=10, start=20) in your API requests.
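The pagination rule can be sketched as a helper that prepares one set of query parameters per page. This is a minimal sketch: the name `paginated_params` is hypothetical, and it assumes the API accepts `start` as a plain query parameter alongside the others, as described above.

```python
def paginated_params(base_params: dict, pages: int, page_size: int = 10) -> list[dict]:
    """Return one params dict per results page, each with its own `start` offset."""
    return [{**base_params, "start": page * page_size} for page in range(pages)]


base = {"q": "technology", "tbm": "nws"}
for params in paginated_params(base, pages=3):
    print(params)  # start is 0, then 10, then 20
```

Each dict can then be passed as `params` to a separate request. Copying `base_params` instead of mutating it keeps the pages independent of each other.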

The full code and a live Streamlit demo are available, so you can try it instantly or fork the project and adapt it to your own workflow.

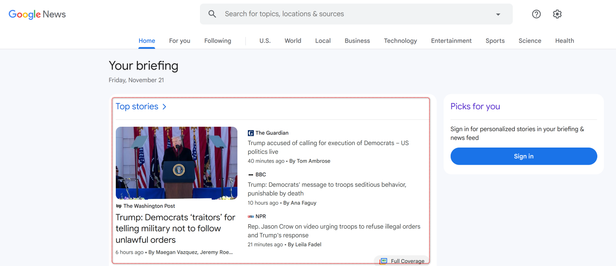

Direct Google News API (Topics & Trends)

Direct scraping via the Google News API allows for precise filtering by topic, section, or location without the parsing overhead of generic search results.

1. Scraping “Top Stories”

The “Top Stories” section is the default feed displayed on the Google News homepage.

To collect data from this specific feed using HasData’s API, you must provide its unique identifier: the topicToken.

    import requests
    import json
    import pandas as pd

    API_KEY = "HASDATA-API-KEY"

    # Google News API endpoint and topicToken for specific section
    url = "https://api.hasdata.com/scrape/google/news"
    params = {
        "topicToken": "CAAqIggKIhxDQkFTRHdvSkwyMHZNRGxqTjNjd0VnSmxiaWdBUAE"  # U.S. news example
    }

    # Headers including API key
    headers = {
        "Content-Type": "application/json",
        "x-api-key": API_KEY
    }

    # Request data from API
    response = requests.get(url, params=params, headers=headers)

    if response.status_code == 200:
        data = response.json()

        # Save raw JSON response
        with open("news.json", "w", encoding="utf-8") as f:
            json.dump(data, f, indent=2, ensure_ascii=False)

        news = data.get("newsResults", [])
        clean_rows = []

        # Extract and structure relevant fields for each news item
        for item in news:
            h = item.get("highlight", {})
            source = h.get("source", {})
            row = {
                "position": item.get("position"),
                "title": h.get("title"),
                "link": h.get("link"),
                "date": h.get("date"),
                "thumbnail": h.get("thumbnail"),
                "thumbnailSmall": h.get("thumbnailSmall"),
                "source_name": source.get("name"),
                "source_icon": source.get("icon"),
                "source_authors": ", ".join(source.get("authors", [])),
                "stories": json.dumps(item.get("stories", []), ensure_ascii=False)
            }
            clean_rows.append(row)

        # Convert structured data to CSV
        df = pd.DataFrame(clean_rows)
        df.to_csv("news.csv", index=False)

The API also supports keyword search, country, language, sort order, and other parameters listed in the official documentation.

2. Scraping Specific Topics

To target niche verticals (e.g., Technology, Business), replace the topicToken in the script above.

Common Topic Tokens:

| Category (Title) | topicToken |
|---|---|
| Top Stories | CAAqJggKIiBDQkFTRWdvSUwyMHZNRFZxYUdjU0FtVnVHZ0pWVXlnQVAB |
| U.S. | CAAqIggKIhxDQkFTRHdvSkwyMHZNRGxqTjNjd0VnSmxiaWdBUAE |
| World | CAAqJggKIiBDQkFTRWdvSUwyMHZNRGx1YlY4U0FtVnVHZ0pWVXlnQVAB |
| Local | CAAqHAgKIhZDQklTQ2pvSWJHOWpZV3hmZGpJb0FBUAE |
| Business | CAAqJggKIiBDQkFTRWdvSUwyMHZNRGx6TVdZU0FtVnVHZ0pWVXlnQVAB |
| Technology | CAAqJggKIiBDQkFTRWdvSUwyMHZNRGRqTVhZU0FtVnVHZ0pWVXlnQVAB |
| Entertainment | CAAqJggKIiBDQkFTRWdvSUwyMHZNREpxYW5RU0FtVnVHZ0pWVXlnQVAB |
| Sports | CAAqJggKIiBDQkFTRWdvSUwyMHZNRFp1ZEdvU0FtVnVHZ0pWVXlnQVAB |
| Science | CAAqJggKIiBDQkFTRWdvSUwyMHZNRFp0Y1RjU0FtVnVHZ0pWVXlnQVAB |
| Health | CAAqIQgKIhtDQkFTRGdvSUwyMHZNR3QwTlRFU0FtVnVLQUFQAQ |
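One way to swap tokens without editing the script each time is a small lookup keyed by category name. The sketch below copies a few tokens from the table above. The helper name `news_params` is hypothetical, and the `gl` and `hl` defaults are assumptions that mirror the parameter names used in this guide's later scripts.

```python
# A few category tokens copied from the table above; extend as needed.
TOPIC_TOKENS = {
    "Top Stories": "CAAqJggKIiBDQkFTRWdvSUwyMHZNRFZxYUdjU0FtVnVHZ0pWVXlnQVAB",
    "Business": "CAAqJggKIiBDQkFTRWdvSUwyMHZNRGx6TVdZU0FtVnVHZ0pWVXlnQVAB",
    "Technology": "CAAqJggKIiBDQkFTRWdvSUwyMHZNRGRqTVhZU0FtVnVHZ0pWVXlnQVAB",
    "Science": "CAAqJggKIiBDQkFTRWdvSUwyMHZNRFp0Y1RjU0FtVnVHZ0pWVXlnQVAB",
}


def news_params(topic: str, gl: str = "us", hl: str = "en") -> dict:
    """Build request parameters for a named topic, failing loudly on unknown names."""
    if topic not in TOPIC_TOKENS:
        known = ", ".join(sorted(TOPIC_TOKENS))
        raise ValueError(f"Unknown topic {topic!r}; known topics: {known}")
    return {"topicToken": TOPIC_TOKENS[topic], "gl": gl, "hl": hl}


print(news_params("Technology"))
```

The returned dict can be passed as `params` in the request from the script above.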

A complete example with all available parameters allows dynamic topic selection via a dropdown or custom value:

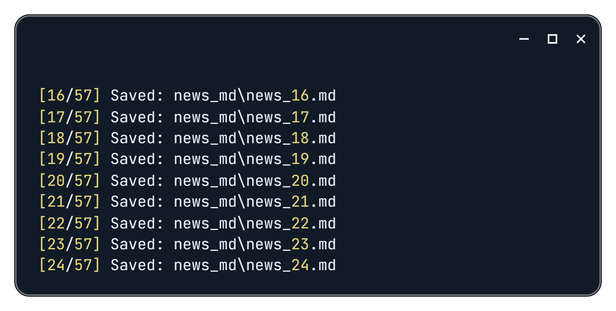

3. Scraping Full Article Content

The Google News API provides links, but not the full article text. To build a complete dataset, you must pass the extracted links to a Web Scraping API that handles JavaScript rendering and content extraction.

Workflow:

- Fetch Links: Use the News API to get a list of URLs.

- Crawl Content: Pass URLs to the Web Scraping API with outputFormat: ["markdown"].

- Store: Save individual files for RAG pipelines or analysis.

    import requests
    import json
    import os
    import time

    API_KEY = "HASDATA-API-KEY"

    # Prepare parameters for Google News API request
    params_raw = {
        "q": "",       # optional search query
        "gl": "us",    # geographic location
        "hl": "en",    # language
        "topicToken": "CAAqJggKIiBDQkFTRWdvSUwyMHZNRFZxYUdjU0FtVnVHZ0pWVXlnQVAB",
        "sectionToken": "",
        "publicationToken": "",
        "storyToken": "",
        "so": ""
    }

    # Remove empty parameters
    params = {k: v for k, v in params_raw.items() if v}

    news_url = "https://api.hasdata.com/scrape/google/news"
    news_headers = {"Content-Type": "application/json", "x-api-key": API_KEY}

    # Fetch news metadata from Google News API
    resp = requests.get(news_url, params=params, headers=news_headers)
    resp.raise_for_status()
    data = resp.json()

    # Extract links to full articles
    news_links = [
        item.get("highlight", {}).get("link")
        for item in data.get("newsResults", [])
        if item.get("highlight", {}).get("link")
    ]

    web_url = "https://api.hasdata.com/scrape/web"
    web_headers = {"Content-Type": "application/json", "x-api-key": API_KEY}

    # Create folder to save markdown files
    os.makedirs("news_md", exist_ok=True)

    # Loop through each news link and scrape full article in Markdown
    for idx, link in enumerate(news_links, start=1):
        payload = {
            "url": link,
            "proxyType": "datacenter",    # choose proxy type
            "proxyCountry": "US",         # set proxy country
            "jsRendering": True,          # enable JS rendering
            "outputFormat": ["markdown"]  # get content as Markdown
        }

        try:
            resp = requests.post(web_url, headers=web_headers, data=json.dumps(payload))
            resp.raise_for_status()
            md_content = resp.text

            filename = os.path.join("news_md", f"news_{idx}.md")
            with open(filename, "w", encoding="utf-8") as f:
                f.write(md_content)

            print(f"[{idx}/{len(news_links)}] Saved: {filename}")
        except Exception as e:
            print(f"[{idx}/{len(news_links)}] Error: {link} -> {e}")

    print("All news saved in 'news_md' folder")

The script prints its progress as each article is saved.

Practical Applications: From Data to Insights

Raw data is valuable only when transformed into insights. Below are two Python pipelines to convert scraping results into actionable intelligence: Trend Detection and Sentiment Monitoring.

Pipeline 1: Topic Frequency Analysis

This script identifies dominant narratives by tokenizing headlines, filtering out noise (stop words), and visualizing the top keywords.

Instead of hardcoding stop words, we use nltk.corpus for a robust, standardized list.

    import requests
    import json
    from collections import Counter
    import matplotlib.pyplot as plt
    import nltk
    from nltk.corpus import stopwords
    import re
    import string

    nltk.download('stopwords')

    API_KEY = "HASDATA-API-KEY"

    params_raw = {
        "q": "",
        "gl": "us",
        "hl": "en",
        "topicToken": "CAAqJggKIiBDQkFTRWdvSUwyMHZNRFp0Y1RjU0FtVnVHZ0pWVXlnQVAB",
        "sectionToken": "",
        "publicationToken": "",
        "storyToken": "",
        "so": ""
    }

    params = {k: v for k, v in params_raw.items() if v}

    news_url = "https://api.hasdata.com/scrape/google/news"
    news_headers = {"Content-Type": "application/json", "x-api-key": API_KEY}

    resp = requests.get(news_url, params=params, headers=news_headers)
    resp.raise_for_status()
    data = resp.json()

    # Extract titles
    titles = [
        item.get("highlight", {}).get("title", "")
        for item in data.get("newsResults", [])
    ]

    # Prepare stopwords
    stop_words = set(stopwords.words('english'))

    # Collect words
    words = []
    for title in titles:
        for word in re.findall(r'\w+', title.lower()):
            if (
                word not in stop_words
                and len(word) > 2
                and word not in string.punctuation
            ):
                words.append(word)

    # Count frequency
    counter = Counter(words)
    most_common = counter.most_common(20)

    # Handle empty case
    if not most_common:
        print("No meaningful words.")
    else:
        labels, counts = zip(*most_common)

        plt.figure(figsize=(12, 6))
        plt.bar(labels, counts, color='skyblue')
        plt.xticks(rotation=45, ha='right')
        plt.title("Top 20 meaningful words in news headlines")
        plt.ylabel("Frequency")
        plt.tight_layout()
        plt.show()

For deeper insight, upgrade from single words (unigrams) to bigrams (e.g., “Artificial Intelligence” instead of “Artificial” and “Intelligence”).
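The bigram idea can be sketched with nothing more than `zip`, which pairs each token with the one that follows it. This is a minimal illustration: `headline_bigrams`, the sample headlines, and the one-word stop list are made up for the example, and in the pipeline above you would pass the real `titles` and `stop_words`.

```python
import re
from collections import Counter


def headline_bigrams(titles: list[str], stop_words: set[str]) -> Counter:
    """Count adjacent word pairs per headline after dropping stop words."""
    counts = Counter()
    for title in titles:
        tokens = [w for w in re.findall(r"\w+", title.lower()) if w not in stop_words]
        # zip pairs each token with its successor; pairs never span two headlines.
        # Stop words are removed first, so words on either side of one become neighbors.
        counts.update(zip(tokens, tokens[1:]))
    return counts


# Illustrative inputs only.
sample_titles = [
    "Artificial intelligence reshapes the chip market",
    "Regulators weigh artificial intelligence rules",
]
sample_stop_words = {"the"}

print(headline_bigrams(sample_titles, sample_stop_words).most_common(3))
```

Counting within each headline avoids bogus pairs formed from the last word of one title and the first word of the next.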

Pipeline 2: Sentiment Analysis with VADER

VADER (Valence Aware Dictionary and sEntiment Reasoner) is a rule-based model specifically optimized for social media and short headlines. It requires less computational power than LLMs while remaining effective for directional sentiment (Positive/Negative).

Logic:

- Compound >= 0.05: Positive

- Compound <= -0.05: Negative

- Else: Neutral

Short text may produce false positives/negatives, especially for headlines with numbers or factual statements without emotional tone.

    import requests
    import json
    from nltk.sentiment.vader import SentimentIntensityAnalyzer
    import matplotlib.pyplot as plt
    import nltk

    # Download VADER lexicon for sentiment analysis
    nltk.download('vader_lexicon')

    API_KEY = "HASDATA-API-KEY"

    # Parameters for Google News API request
    params_raw = {
        "q": "",       # optional search query
        "gl": "us",    # geographic location
        "hl": "en",    # language
        "topicToken": "CAAqJggKIiBDQkFTRWdvSUwyMHZNREpxYW5RU0FtVnVHZ0pWVXlnQVAB",
        "sectionToken": "",
        "publicationToken": "",
        "storyToken": "",
        "so": ""
    }

    # Remove empty parameters
    params = {k: v for k, v in params_raw.items() if v}

    news_url = "https://api.hasdata.com/scrape/google/news"
    news_headers = {"Content-Type": "application/json", "x-api-key": API_KEY}

    # Fetch news data
    resp = requests.get(news_url, params=params, headers=news_headers)
    resp.raise_for_status()
    data = resp.json()

    # Initialize VADER sentiment analyzer
    sid = SentimentIntensityAnalyzer()

    # Prepare containers for sentiment groups
    grouped_news = {"positive": [], "neutral": [], "negative": []}

    # Analyze sentiment for each news item
    for item in data.get("newsResults", []):
        highlight = item.get("highlight", {})
        text_to_analyze = highlight.get("title", "") + " " + highlight.get("snippet", "")
        score = sid.polarity_scores(text_to_analyze)

        news_item = {
            "title": highlight.get("title"),
            "link": highlight.get("link"),
            "snippet": highlight.get("snippet"),
            "source_name": highlight.get("source", {}).get("name"),
            "date": highlight.get("date"),
            "thumbnail": highlight.get("thumbnail")
        }

        # Classify news item based on compound score
        if score['compound'] >= 0.05:
            grouped_news["positive"].append(news_item)
        elif score['compound'] <= -0.05:
            grouped_news["negative"].append(news_item)
        else:
            grouped_news["neutral"].append(news_item)

    # Save sentiment-classified news to JSON
    with open("news_sentiment.json", "w", encoding="utf-8") as f:
        json.dump(grouped_news, f, ensure_ascii=False, indent=2)

VADER excels at emotional tone but may struggle with financial nuance. A headline like “Profits fell by 20%” might be rated neutral if the dictionary lacks context for specific economic terms. For high-precision financial sentiment, consider fine-tuning a BERT model.
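For monitoring over time, the grouped output can be reduced to the share of headlines in each bucket. The sketch below is one possible way to do that. The function name `sentiment_shares` and the sample data are illustrative, and it assumes the same dictionary shape as `grouped_news` in the script above.

```python
def sentiment_shares(grouped: dict) -> dict:
    """Return the fraction of items in each sentiment bucket (0.0 when there are no items)."""
    total = sum(len(items) for items in grouped.values())
    if total == 0:
        return {label: 0.0 for label in grouped}
    return {label: len(items) / total for label, items in grouped.items()}


# Illustrative data shaped like grouped_news.
sample = {
    "positive": [{"title": "a"}],
    "neutral": [{"title": "b"}, {"title": "c"}],
    "negative": [{"title": "d"}],
}

print(sentiment_shares(sample))
```

Tracking these fractions per run, rather than raw counts, keeps the signal comparable when the number of returned headlines varies.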

Legal & Ethical Compliance

When scraping news data, understanding the distinction between data access and content usage is critical for compliance.

- Public Facts vs. Copyrighted Work: Generally, metadata (headlines, timestamps, factual snippets) is considered public information and is safe to index for analytics. However, the full body text of an article is the intellectual property of the publisher.

- Permissible Use:

  - ✅ Analytics & AI: Using full text internally to train sentiment models or to build summaries and trend dashboards is typically considered fair use in many jurisdictions.

  - ❌ Republishing: Displaying full articles on your own public website without a license is a copyright violation.

- The API Advantage: Direct scraping can inadvertently trigger anti-bot measures or violate server resource policies. By using HasData, you offload the responsibility of polite crawling (rate limiting, header management) to our infrastructure, ensuring that data is gathered without disrupting publisher ecosystems.

Disclaimer: This guide is for informational purposes only and does not constitute legal advice. Data scraping laws (like GDPR in Europe or CFAA in the US) vary by region. Always consult with legal counsel regarding your specific use case.

Final Thoughts

Collecting and analyzing news from Google News opens a wide range of possibilities, from tracking trends to monitoring brand mentions or building custom news dashboards. Each approach has its strengths and limitations:

- RSS feeds are structured and reliable but offer limited flexibility;

- scraping Google Search results can provide broader coverage but requires constant maintenance to handle changes, proxies, and captchas;

- direct Google News scraping using an API delivers clean, structured data with minimal overhead.

If you need to collect large volumes of news or bypass complex blocks, try the HasData Google News API. We deliver the data in a structured format ready for analysis and integration into your workflow.