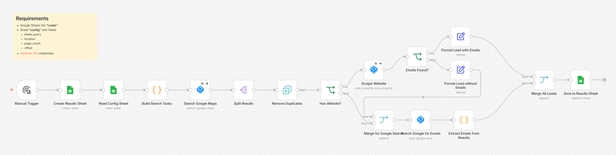

Let’s build an n8n workflow that scrapes Google Maps for businesses, checks their websites for email addresses, and, when a website comes up empty (which happens a lot), runs targeted Google searches to find emails in business directories, LinkedIn, press releases, and anywhere else the company has been mentioned.

You can wake up to 500 fresh HVAC contractor leads with actual contact info, no manual work required.

All you need is:

- n8n (I’m using self-hosted, but n8n.io works)

- HasData account

- Google Sheets

- About 30 minutes to set this up

You can copy this flow here.

Why I Built It This Way

Most lead scrapers get the basics like name, address, phone. Cool, but useless if I can’t email them.

Company websites love hiding emails behind contact forms. So my workflow tries the website first, but when that fails (and it fails a lot), it pivots to Google search with a specific query pattern that finds the email from external sources. Hit rate went from ~30% to ~75%.

HasData was non-negotiable here because I tried building this with basic HTTP requests first. Got IP-banned within an hour. HasData handles all the proxy rotation and anti-blocking, so the workflow just runs.

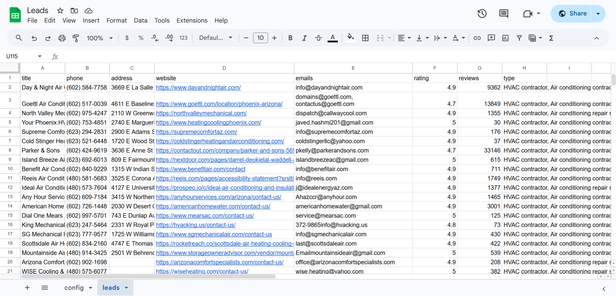

I ran a test yesterday with 5 Google Maps queries. Here are the results:

- Total Google Maps requests: 5

- Total businesses scraped: 77

- Duplicates removed: 2

- Businesses with websites: 70

- Businesses without websites: 5

- Emails found on websites: 6 (8.6% of businesses with websites)

- Websites with no emails: 64 (91.4%)

- Sent to Google search: 69 (64 failed websites + 5 without websites)

- Emails found via Google search: 46 (66.7% hit rate)

- No emails found anywhere: 23

Total email coverage: 52 out of 75 unique businesses = 69.3% hit rate.

Without the Google search fallback, I’d have 6 emails instead of 52. The website scraping alone has less than 9% success rate. Google search is doing the heavy part here, finding 46 emails that weren’t on company websites.
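If you want to sanity-check those percentages, here’s the arithmetic as a small standalone script. The counts are copied from the list above; the variable names and the `pct` helper are just mine for illustration.

```javascript
// Funnel numbers copied from the test run above.
const run = {
  scraped: 77,
  duplicates: 2,
  withWebsite: 70,
  withoutWebsite: 5,
  emailsFromWebsites: 6,
  emailsFromSearch: 46,
};

// Percentage rounded to one decimal place.
const pct = (part, whole) => Math.round((part / whole) * 1000) / 10;

const uniqueBusinesses = run.scraped - run.duplicates;
// Everything that did not yield an email on its own website goes to Google search.
const sentToSearch = run.withWebsite - run.emailsFromWebsites + run.withoutWebsite;
const totalEmails = run.emailsFromWebsites + run.emailsFromSearch;

console.log(`unique businesses: ${uniqueBusinesses}`);                              // 75
console.log(`sent to search:    ${sentToSearch}`);                                  // 69
console.log(`website hit rate:  ${pct(run.emailsFromWebsites, run.withWebsite)}%`); // 8.6%
console.log(`search hit rate:   ${pct(run.emailsFromSearch, sentToSearch)}%`);      // 66.7%
console.log(`total coverage:    ${pct(totalEmails, uniqueBusinesses)}%`);           // 69.3%
```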

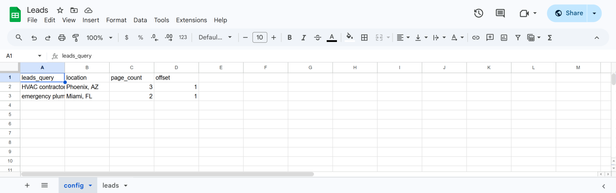

Config Sheet Setup

Before touching n8n, I created a Google Sheet with a “config” tab where I queue up searches.

- leads_query: what I’m hunting for.

- location: the geographic target. Can be a city, state, zip code, or a specific address.

- page_count: how many pages to scrape (about 20 results per page).

- offset: the starting page. Leave at 1 unless resuming a previous run.

This setup means I can run 10 different searches in one execution. Queue it Friday night, wake up to leads Monday.
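To get a feel for how big a queued run will be, here’s a rough sketch that adds up the pages across config rows. The two rows are made-up examples, not my real config, and `estimateRun` is a hypothetical helper that isn’t part of the workflow.

```javascript
// Hypothetical rows in the "config" tab; the queries and locations are made up.
const configRows = [
  { leads_query: "HVAC contractors", location: "Austin, TX", page_count: 3, offset: 1 },
  { leads_query: "emergency plumbing services", location: "78701", page_count: 2, offset: 1 },
];

const RESULTS_PER_PAGE = 20; // approximate, as noted above

// One Google Maps request per page, so requests = sum of page_count.
function estimateRun(rows) {
  const requests = rows.reduce((sum, row) => sum + (Number(row.page_count) || 1), 0);
  return { requests, maxResults: requests * RESULTS_PER_PAGE };
}

console.log(estimateRun(configRows)); // { requests: 5, maxResults: 100 }
```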

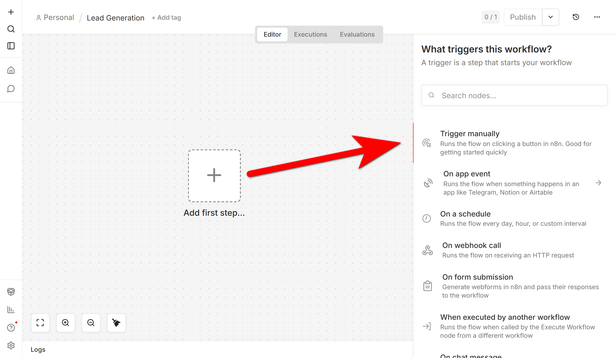

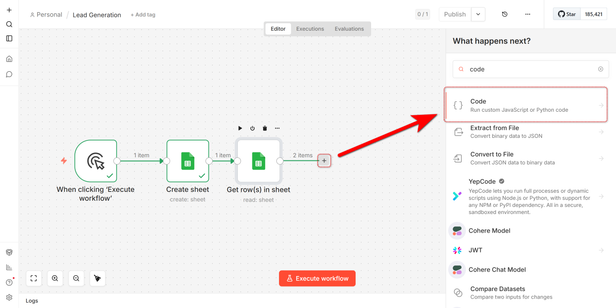

Manual Trigger and Timestamped Sheet

I start with a Manual Trigger node, though I’ve since switched this to a cron schedule.

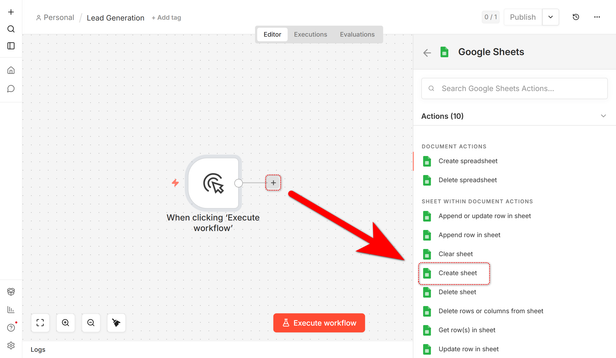

Next node is Google Sheets set to the “Create” operation. This creates a new sheet named with the current timestamp (for example, 2026-04-24 14:30). Every run gets its own sheet, which makes it easy to compare performance across different searches or track degradation over time.

Document: Select from the List

Title (use Expression): {{ $now.toFormat("yyyy-MM-dd HH:mm") }}
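In n8n, `$now` is a Luxon DateTime, so `toFormat` does the work inside the expression. If you want to see what that format string produces outside n8n, here’s a plain JavaScript sketch of the same pattern; `sheetTitle` is a hypothetical helper, not something n8n provides.

```javascript
// Plain-JS equivalent of the "yyyy-MM-dd HH:mm" pattern, using local time.
function sheetTitle(date) {
  const pad = (n) => String(n).padStart(2, "0");
  const day = `${date.getFullYear()}-${pad(date.getMonth() + 1)}-${pad(date.getDate())}`;
  const time = `${pad(date.getHours())}:${pad(date.getMinutes())}`;
  return `${day} ${time}`;
}

// Month is zero-based in the Date constructor, so 3 means April.
console.log(sheetTitle(new Date(2026, 3, 24, 14, 30))); // 2026-04-24 14:30
```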

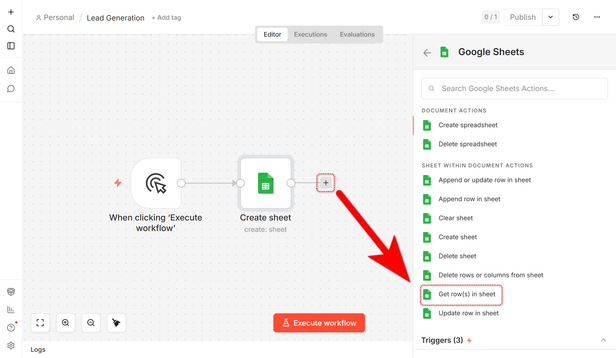

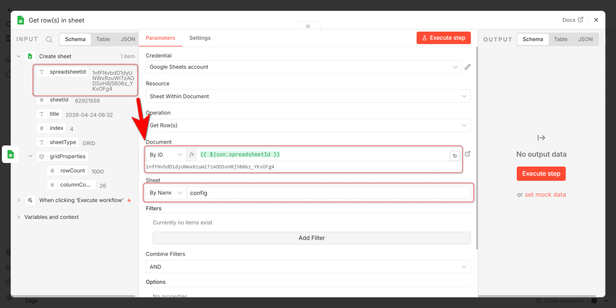

Reading Search Configs

Google Sheets node set to “Get Rows” pulls every row from my config sheet. If I’ve got 5 searches queued, I get 5 items flowing into the next node.

Set the document to the spreadsheet ID from the previous step, and set the sheet to the name of the tab that holds the configuration.

Document (By ID): {{ $json.spreadsheetId }}

Pagination Logic

Google Maps returns 20 results per page. If I want 100 results, that’s 5 pages. HasData API uses a numeric offset (0, 20, 40…), not page numbers (1, 2, 3…).

I wrote a Code node (JavaScript) to process the conversion.

The code:

```javascript
// 1. Initialize an array to store all tasks
let allTasks = [];

// 2. Constants
const RESULTS_PER_PAGE = 20;

// 3. Loop through Google Sheets rows
for (const item of $input.all()) {
  const config = item.json;

  // Validation: basic check for the query
  if (!config.leads_query) continue;

  const leads_query = config.leads_query;
  const location = config.location || "";
  const pageCount = parseInt(config.page_count) || 1;

  // Get the starting page number from the table (default to 1 if empty)
  const startPage = parseInt(config.offset) || 1;

  // Generate a task for each requested page
  for (let p = 0; p < pageCount; p++) {
    const currentPageNumber = startPage + p;

    // Convert Page Number to API Position (Offset)
    // Page 1 -> (1-1) * 20 = 0
    // Page 2 -> (2-1) * 20 = 20
    const apiPosition = (currentPageNumber - 1) * RESULTS_PER_PAGE;

    allTasks.push({
      json: {
        leads_query: leads_query,
        ll: "",
        location: location,
        pageOffset: apiPosition, // This goes to the API
        pageNumber: currentPageNumber, // Optional: for your own tracking
        original_row: config.row_number || null
      }
    });
  }
}

// 4. Return the tasks
return allTasks;
```

If my config says page_count: 3, this generates 3 separate tasks with offsets 0, 20, 40. Each task hits the HasData API once.
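To check the conversion outside n8n, here’s the same page-to-offset logic as a plain function. `buildTasks` and the sample rows are hypothetical; the Code node itself reads rows from `$input.all()` instead of a function argument.

```javascript
const RESULTS_PER_PAGE = 20;

// Same conversion as the Code node, written as a plain function for testing.
function buildTasks(rows) {
  const tasks = [];
  for (const row of rows) {
    if (!row.leads_query) continue; // skip rows without a query
    const pageCount = parseInt(row.page_count, 10) || 1;
    const startPage = parseInt(row.offset, 10) || 1;
    for (let p = 0; p < pageCount; p++) {
      const pageNumber = startPage + p;
      tasks.push({
        leads_query: row.leads_query,
        location: row.location || "",
        pageNumber,
        pageOffset: (pageNumber - 1) * RESULTS_PER_PAGE,
      });
    }
  }
  return tasks;
}

// Fresh run: three pages starting at page 1.
const fresh = buildTasks([{ leads_query: "HVAC contractors", location: "Austin, TX", page_count: "3" }]);
console.log(fresh.map((t) => t.pageOffset)); // [ 0, 20, 40 ]

// Resumed run: two more pages starting at page 4.
const resumed = buildTasks([{ leads_query: "HVAC contractors", location: "Austin, TX", page_count: "2", offset: "4" }]);
console.log(resumed.map((t) => t.pageOffset)); // [ 60, 80 ]
```

The second call shows why the offset column is useful: set it to the next unscraped page and the run picks up where the previous one stopped.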

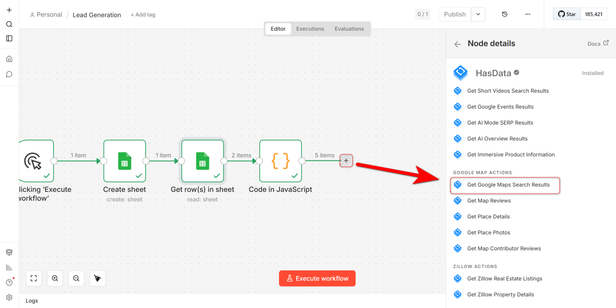

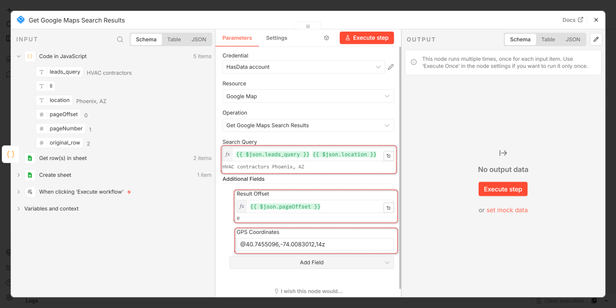

Scraping Google Maps

HasData node configured for Google Maps.

Settings:

Resource: Google Maps

Query: {{ $json.leads_query }} {{ $json.location }}

Additional Fields:

- Result Offset: {{ $json.pageOffset }}

- GPS Coordinates (required if you use offset): @40.7455096,-74.0083012,14z

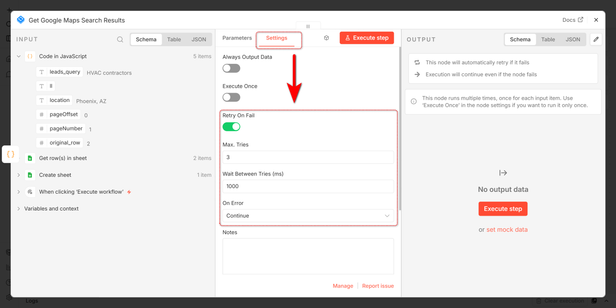

I enabled Retry on Fail and Continue on Error because sometimes HasData hits rate limits or a page comes back empty. No reason to kill the whole workflow over one bad page.

Results come back with company name, full address, phone number, website URL, rating/reviews.

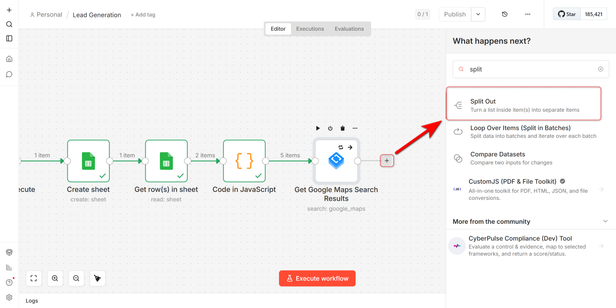

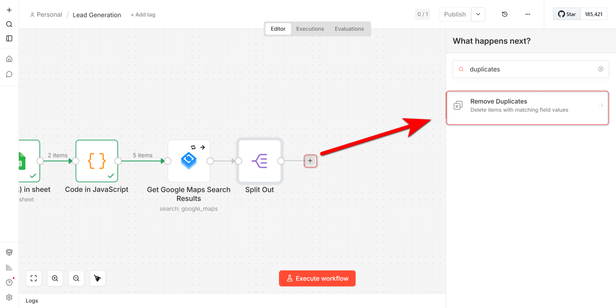

Splitting and Deduping

Results come as a single item with an array. Split Out node fixes that.

Field to Split Out: localResults

Now each business is its own item.

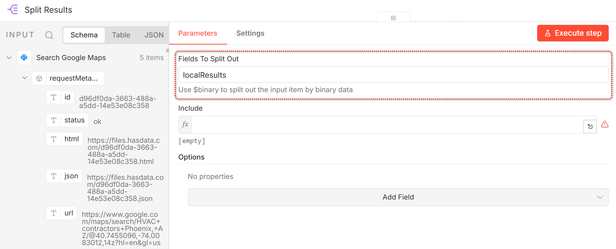

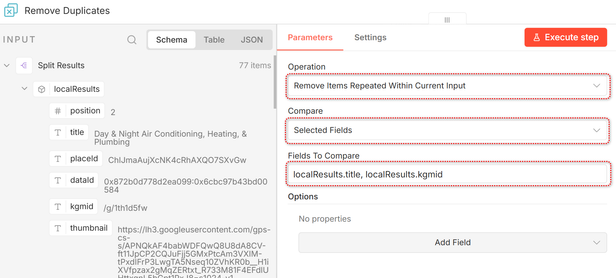

Remove Duplicates node catches the same business showing up in multiple searches.

Select Remove Items Repeated Within Current Input.

Compare: Selected Fields

Fields: localResults.title, localResults.kgmid
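Here’s roughly what those two nodes do to the data, written as plain JavaScript. The item shape is assumed from the field names above (localResults, title, kgmid), the sample businesses are made up, and `splitOut` and `removeDuplicates` are my own stand-ins rather than n8n functions.

```javascript
// Turn each API response (one item holding an array) into one item per business.
function splitOut(responses) {
  return responses.flatMap((response) =>
    (response.localResults || []).map((business) => ({ localResults: business }))
  );
}

// Keep the first occurrence of each title + kgmid pair.
function removeDuplicates(items) {
  const seen = new Set();
  return items.filter(({ localResults }) => {
    const key = JSON.stringify([localResults.title, localResults.kgmid]);
    if (seen.has(key)) return false;
    seen.add(key);
    return true;
  });
}

// Two hypothetical responses where one business shows up twice.
const responses = [
  { localResults: [{ title: "Acme HVAC", kgmid: "/g/1" }, { title: "Cool Air", kgmid: "/g/2" }] },
  { localResults: [{ title: "Acme HVAC", kgmid: "/g/1" }] },
];

const deduped = removeDuplicates(splitOut(responses));
console.log(deduped.map((item) => item.localResults.title)); // [ 'Acme HVAC', 'Cool Air' ]
```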

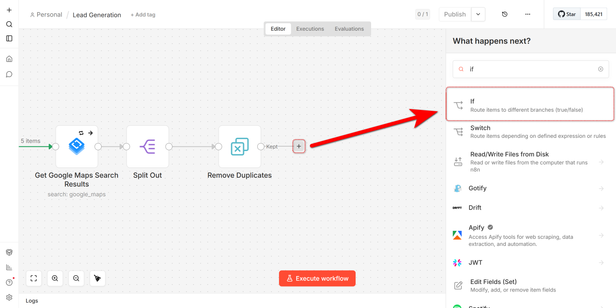

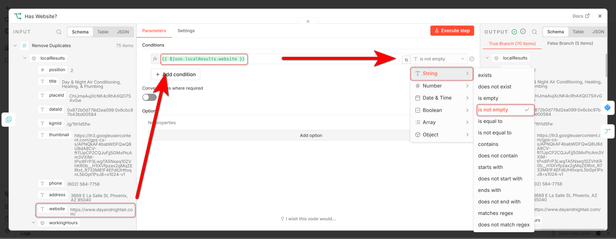

The Fork

IF node splits the flow based on whether the company has a website.

Condition: {{ $json.localResults.website }} is not empty

TRUE branch goes to website scraping. FALSE branch skips straight to Google search.

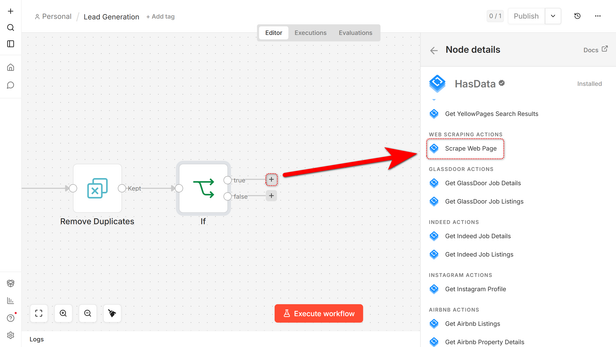

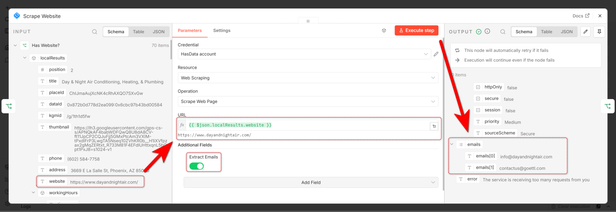

Scraping Websites for Emails

For the TRUE branch, HasData node set to web scraping.

Resource: Web Scraping

URL: {{ $json.localResults.website }}

Extract Emails: True

HasData loads the page and pulls out anything matching an email pattern. It scrapes all available emails from the site.

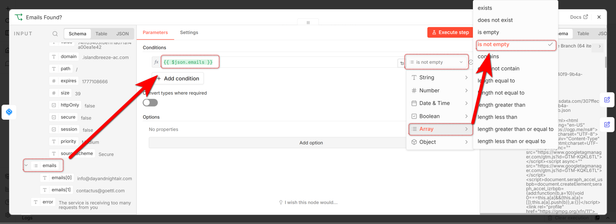

Did We Get Emails?

Another IF node checks if the scrape worked.

Condition: {{ $json.emails }} is not empty (Array)

TRUE branch means we got emails, format and export. FALSE branch means no emails found, try Google search.
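The two IF nodes together amount to a small routing rule. Here’s a sketch of it in plain JavaScript; `route` and its return labels are hypothetical names I picked for illustration, and the sample data is made up.

```javascript
// business: one Google Maps result; scrapeResult: output of the website scrape (if any).
function route(business, scrapeResult) {
  // First IF: no website means there is nothing to scrape.
  if (!business.website) return "google-search";

  // Second IF: the website was scraped, so check whether it yielded any emails.
  const emails = scrapeResult?.emails ?? [];
  return emails.length > 0 ? "format-and-export" : "google-search";
}

console.log(route({ title: "Acme HVAC" }, null));                                               // google-search
console.log(route({ title: "Cool Air", website: "https://example.com" }, { emails: [] }));      // google-search
console.log(route({ title: "Warm Co", website: "https://example.org" }, { emails: ["a@b.co"] })); // format-and-export
```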

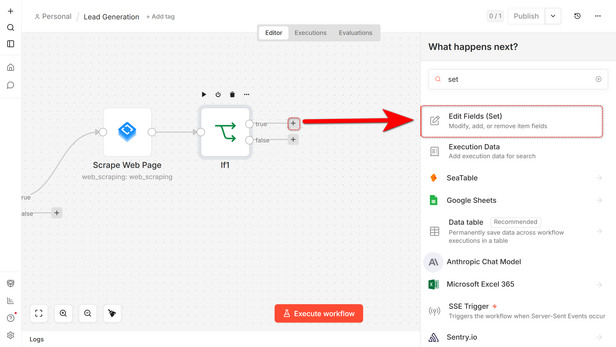

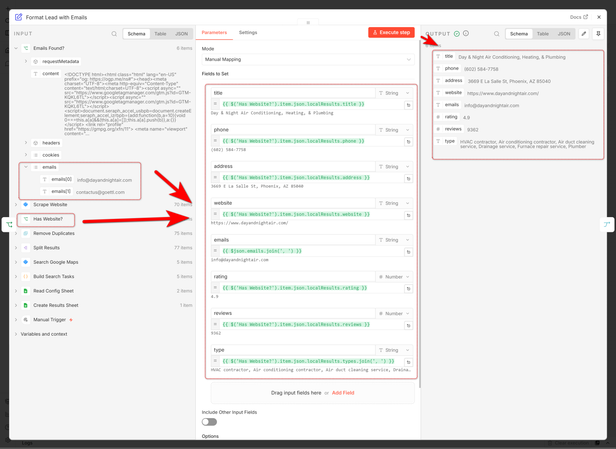

Formatting Results with Emails

For companies where I got emails, I use an Edit Fields node.

Settings:

- title: {{ $('If').item.json.localResults.title }}

- phone: {{ $('If').item.json.localResults.phone }}

- address: {{ $('If').item.json.localResults.address }}

- website: {{ $('If').item.json.localResults.website }}

- emails: {{ $json.emails.join(', ') }}

- rating: {{ $('If').item.json.localResults.rating }}

- reviews: {{ $('If').item.json.localResults.reviews }}

- type: {{ $('If').item.json.localResults.types.join(', ') }}

In my own flow I renamed the first If node to Has Website?, so my expressions reference $('Has Website?') instead of $('If'). Nodes are referenced by name, so use whatever your node is called.

The .join(', ') turns the email array into a clean comma-separated string for Sheets.
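As a rough picture of what this step produces, here’s the same mapping as a plain function. `toSheetRow` is a hypothetical helper, the sample business is made up, and the field names follow the settings above.

```javascript
// Flatten one Google Maps result plus its scraped emails into a single sheet row.
function toSheetRow(business, emails) {
  return {
    title: business.title,
    phone: business.phone,
    address: business.address,
    website: business.website,
    emails: emails.join(", "),               // array -> "a@x.com, b@x.com"
    rating: business.rating,
    reviews: business.reviews,
    type: (business.types || []).join(", "), // tolerate a missing types array
  };
}

const row = toSheetRow(
  {
    title: "Acme HVAC",
    phone: "+1 555-0100",
    address: "123 Main St, Austin, TX 78701",
    website: "https://example.com",
    rating: 4.7,
    reviews: 120,
    types: ["HVAC contractor", "Air conditioning repair service"],
  },
  ["info@example.com", "sales@example.com"]
);

console.log(row.emails); // info@example.com, sales@example.com
console.log(row.type);   // HVAC contractor, Air conditioning repair service
```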

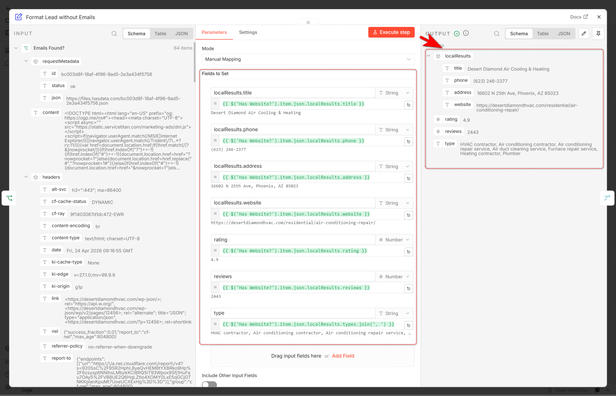

Google Search Fallback

Edit Fields node for companies without emails:

Settings:

- title: {{ $('If').item.json.localResults.title }}

- phone: {{ $('If').item.json.localResults.phone }}

- address: {{ $('If').item.json.localResults.address }}

- website: {{ $('If').item.json.localResults.website }}

- rating: {{ $('If').item.json.localResults.rating }}

- reviews: {{ $('If').item.json.localResults.reviews }}

- type: {{ $('If').item.json.localResults.types.join(', ') }}

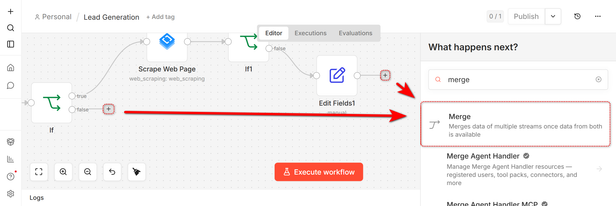

For companies where website scraping failed, I Merge them with the companies that had no website.

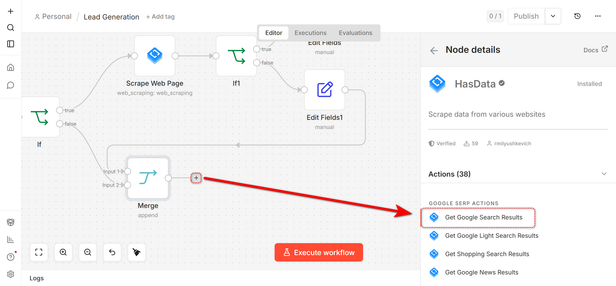

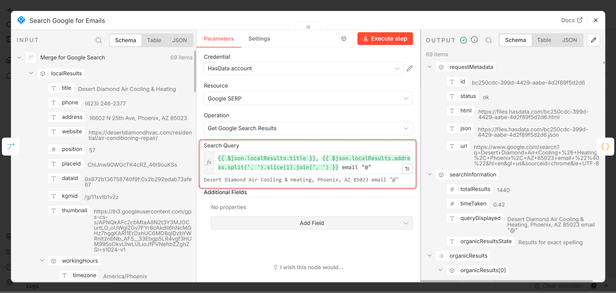

Then hit HasData for a Google search.

Resource: HasData Get Google Search Results

Query:

{{ $json.localResults.title }}, {{ $json.localResults.address.split(', ').slice(1).join(', ') }} email "@"

This query is Company Name, City, State email @. The email "@" forces Google to return pages containing email addresses. It finds listings from business directories like Yelp and BBB, LinkedIn company pages, press releases, old forum posts.

I’m not searching the company website again, I’m searching the entire web for mentions of this company plus email addresses.

An earlier version included the full street address in the Google query and found emails for the neighbors instead. The .split(', ').slice(1) drops the first comma-separated part of the address (the street line), keeping only city/state.
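Here’s the query expression as a plain function so you can try it on your own addresses. `buildSearchQuery` is a hypothetical name and the sample business is made up. It assumes the address is comma-separated with the street line first.

```javascript
// Build the fallback Google query: company name, city/state, then the email hint.
function buildSearchQuery(title, address) {
  // Drop the first comma-separated segment (the street line).
  const cityState = address.split(", ").slice(1).join(", ");
  return `${title}, ${cityState} email "@"`;
}

console.log(buildSearchQuery("Acme HVAC", "123 Main St, Austin, TX 78701"));
// Acme HVAC, Austin, TX 78701 email "@"
```

One thing to watch: if an address has no commas at all, slice(1) leaves nothing, and the query ends up with just the company name and the email hint.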

Extracting Emails from Search Results

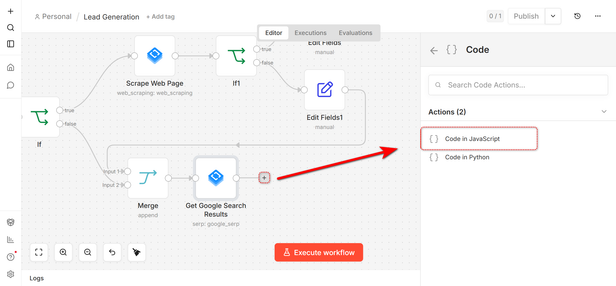

Code node (JavaScript) to parse the Google results.

Code:

```javascript
/**
 * Optimized lead processor for n8n.
 * Features: ancestor matching for "Merge for Google Search" data and email cleanup.
 */
const items = $input.all();
const finalResults = [];

// Iterate through items coming from the previous node
for (let i = 0; i < items.length; i++) {
  const data = items[i].json;
  const results = data.organicResults || [];

  /**
   * Safe data retrieval from Merge for Google Search.
   * Using itemMatching(i) ensures we sync correctly with previous workflow steps.
   */
  let sourceData = {};
  let fullData = {};
  try {
    const ancestor = $("Merge for Google Search").itemMatching(i).json;
    sourceData = ancestor.localResults || ancestor;
    fullData = ancestor;
  } catch (e) {
    // Fall back to a positional lookup if item matching fails
    const fallback = $("Merge for Google Search").all()[i]?.json;
    sourceData = fallback?.localResults || fallback || {};
    fullData = fallback || {}; // never leave this undefined, it is read below
  }

  let foundData = {
    website: null,
    emails: null,
    title: sourceData.title || null,
    phone: sourceData.phone || null,
    address: sourceData.address || null,
    rating: fullData.rating || sourceData.rating || null,
    reviews: fullData.reviews || sourceData.reviews || null,
    type: fullData.type || sourceData.type || null
  };

  /**
   * REFINED EMAIL REGEX
   * [a-z]{2,6} - Look for lowercase extensions only.
   * (?![a-z])  - Negative lookahead: the extension must end where the lowercase
   *              run ends. With (?![A-Z]) the engine backtracked and returned a
   *              truncated extension ("comRead" matched as ".co").
   */
  const emailRegex = /[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-z]{2,6}(?![a-z])/;

  for (const res of results) {
    const textToScan = (res.snippet || "") + " " + (res.title || "");
    const match = textToScan.match(emailRegex);

    if (match) {
      let cleanedEmail = match[0];

      /**
       * MANUAL ARTIFACT REMOVAL
       * Safety net for cases where snippet text is glued to the email.
       */
      const artifacts = [".Read", ".View", ".More", ".Check", ".Full", ".Website"];
      for (const art of artifacts) {
        if (cleanedEmail.endsWith(art)) {
          cleanedEmail = cleanedEmail.slice(0, -art.length);
        }
      }

      // Final trim for any trailing dots
      cleanedEmail = cleanedEmail.replace(/\.+$/, "");

      foundData.emails = cleanedEmail;
      foundData.website = res.link;

      // Stop at the first valid email found in the results list
      break;
    }
  }

  finalResults.push({ json: foundData });
}

return finalResults;
```

This checks both the snippet and the title of each search result and stops at the first email it finds, so each business gets at most one address from Google search.
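To see how the extraction behaves on snippet text, here’s a standalone sketch you can run outside n8n. The search results are made up, `firstEmail` is a hypothetical helper, and the pattern is written out here (with a lowercase lookahead) so the block runs on its own.

```javascript
// Lowercase extension of 2-6 letters that must not continue with another lowercase letter.
const EMAIL_REGEX = /[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-z]{2,6}(?![a-z])/;

// Return the first email found in the snippets/titles, plus the page it came from.
function firstEmail(organicResults) {
  for (const res of organicResults) {
    const text = `${res.snippet || ""} ${res.title || ""}`;
    const match = text.match(EMAIL_REGEX);
    if (match) return { email: match[0], source: res.link };
  }
  return null;
}

// Hypothetical search results; the second snippet has text glued to the email.
const sample = [
  { title: "Acme HVAC - Reviews", snippet: "Heating and cooling in Austin.", link: "https://example.com/a" },
  { title: "Acme HVAC | Directory", snippet: "Contact: info@acmehvac.com.Read more", link: "https://example.com/b" },
];

console.log(firstEmail(sample));
// { email: 'info@acmehvac.com', source: 'https://example.com/b' }
```

The first result has no email, so the loop moves on. In the second, the trailing ".Read" is left out because the extension has to be all lowercase.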

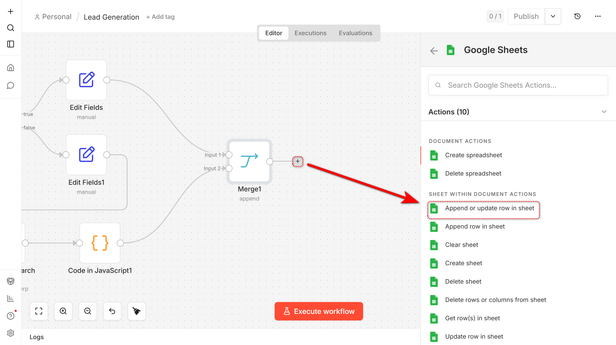

Final Merge and Export

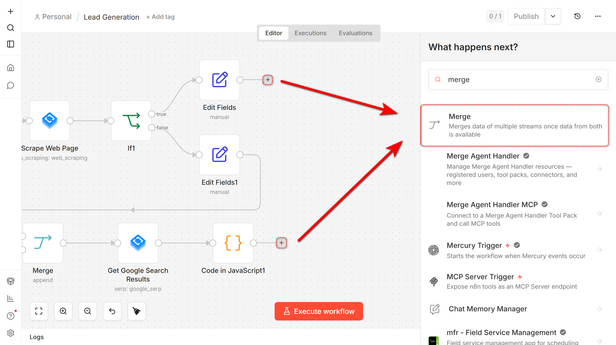

Merge node combines companies with emails from websites and companies with emails from Google search.

Then Google Sheets Append Row writes everything to the timestamped sheet I created at the start.

Settings:

- Operation: Append or Update

- Sheet (By ID): {{ $('Create Sheet').item.json.sheetId }}

- Columns: Auto-map all fields

Then run the finished workflow and get a sheet full of leads with actual email addresses.

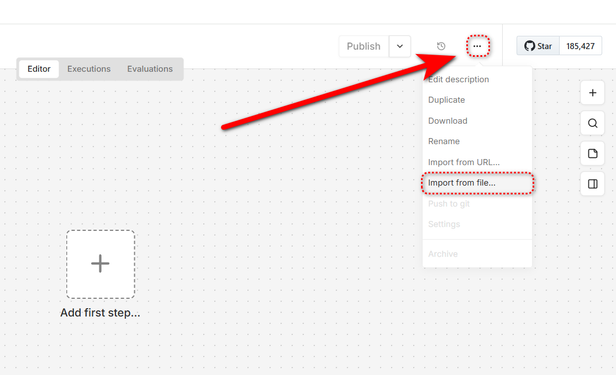

How to Use This

Clone the workflow JSON I’m sharing and import it into an empty workflow.

Set up HasData and get your API key, connect it in n8n. Create a config sheet with your first search. Run it once with page_count: 1 to test. Check results, tweak your query if needed. Scale up to page_count: 3-5 for production runs.

Optimization Tips

Be specific with your leads_query to get higher quality results. “emergency plumbing services” works better than “plumbers”.

Start with page_count: 1 for testing. Increase gradually as you validate results.

Consider adding nodes to validate email formats, remove generic emails, or enrich data with additional fields.
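As a starting point for that cleanup step, here’s a minimal sketch of an email filter you could adapt for a Code node. The list of prefixes to drop is my own guess at “generic”; adjust it to taste. The shape check is deliberately loose, not a full validator.

```javascript
// Hypothetical list of mailbox names to drop; edit to match your own definition of "generic".
const GENERIC_PREFIXES = ["noreply", "no-reply", "donotreply", "example"];

// Loose shape check: something@something.tld with no spaces.
const EMAIL_SHAPE = /^[^\s@]+@[^\s@]+\.[^\s@]{2,}$/;

function cleanEmails(emails) {
  const seen = new Set();
  const cleaned = [];
  for (const raw of emails) {
    const email = raw.trim().toLowerCase();
    if (!EMAIL_SHAPE.test(email)) continue;                          // malformed
    if (GENERIC_PREFIXES.includes(email.split("@")[0])) continue;    // generic mailbox
    if (seen.has(email)) continue;                                   // duplicate
    seen.add(email);
    cleaned.push(email);
  }
  return cleaned;
}

console.log(cleanEmails(["Info@Acme.com", "noreply@acme.com", "not-an-email", "info@acme.com "]));
// [ 'info@acme.com' ]
```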

Use n8n’s cron trigger to run this workflow automatically. Daily for fresh leads, weekly for less competitive niches, monthly for evergreen industries.
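If you schedule with a cron expression, these are standard five-field patterns for the three cadences. The 06:00 run time is an arbitrary choice of mine, and the object is only there to keep the examples together.

```javascript
// Standard five-field cron: minute hour day-of-month month day-of-week.
const schedules = {
  daily: "0 6 * * *",   // every day at 06:00
  weekly: "0 6 * * 1",  // every Monday at 06:00
  monthly: "0 6 1 * *", // first day of each month at 06:00
};

for (const [name, expression] of Object.entries(schedules)) {
  console.log(`${name}: ${expression}`);
}
```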

Final Thoughts

If you’re doing any kind of B2B outreach and manually searching for contact info, this workflow can help you. It isn’t perfect, some industries hide emails better than others, but a 69% hit rate beats LinkedIn Premium and manual copy-paste.

The dual-strategy approach (website scraping, then Google search fallback) is what makes it actually useful. Most scrapers do one or the other. In my test run, doing both in sequence took me from 6 emails to 52.