Job boards list thousands of open positions every day. Manually checking these sites takes time, and you might miss great opportunities. Automated job scraping solves this problem: you can collect hundreds of job postings in minutes, filter by salary or location, and track market trends.

Python is one of the best languages for web scraping. It has simple syntax and powerful libraries that make data extraction easy. Even beginners can build working scrapers quickly.

Most modern job sites are heavy and complex. They use JavaScript to load content, implement anti-bot systems, and frequently change their structure. This makes simple scraping difficult. HasData API solves these challenges. It handles JavaScript rendering, rotates proxies automatically, and maintains high success rates. You get clean HTML without dealing with browser automation or getting blocked.

In this guide, you’ll learn how to scrape job postings from any website step by step.

Identify Target Data on Job Boards

Before writing code, you need to understand what data you want to extract and where it’s located on the page.

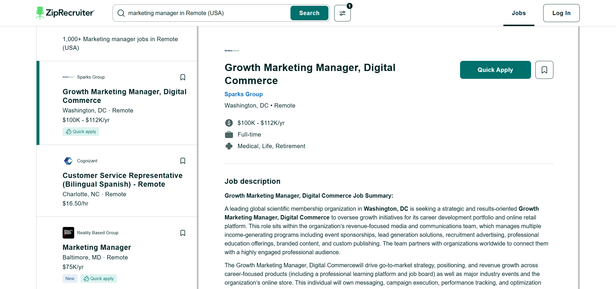

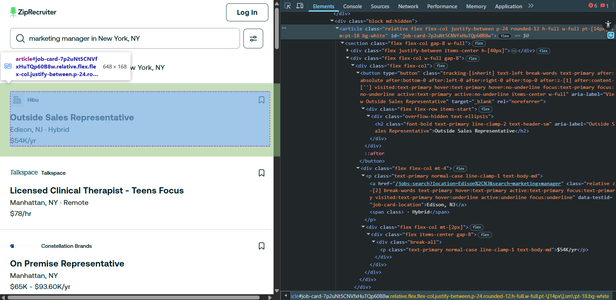

Open ZipRecruiter (or any other board) in your browser and search for a job (for example, “marketing manager” in “New York, NY”). You’ll see a list of job cards, each containing a title, company name, location, and salary.

Right-click on any job title and select “Inspect” (or press F12). This opens Developer Tools showing the HTML structure. You’ll see that each job is wrapped in an `<article>` tag. The title is in an `<h2>` tag, and company name has a special attribute `data-testid="job-card-company"`.

These patterns are called CSS selectors. They tell your code exactly where to find data. For ZipRecruiter, the main selectors are:

| Element | CSS Selector | XPath | Description |
|---|---|---|---|
| Job Cards Container | section > div | //section/div[article] | All job listings wrapper |
| Job Card | article | //article | Individual job listing |
| Job Title | article h2 | //article//h2 | Position title |
| Company Name | a[data-testid='job-card-company'] | //a[@data-testid='job-card-company']/text() | Company name (text) |
| Company URL | a[data-testid='job-card-company'] | //a[@data-testid='job-card-company']/@href | Link to company page |
| Salary | article p:contains('$') | //article//p[contains(text(),'$')]/text() | Salary or hourly rate |
| Next Page Button | button[title='Next Page'] | //button[@title='Next Page'] | Next page navigation |
| Previous Page Button | button[title='Previous Page'] | //button[@title='Previous Page'] | Previous page navigation |

Each selector points to a specific piece of information. With these selectors mapped out, you’re ready to write code that extracts this data automatically.
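As a quick sanity check, the selectors from the table can be tried against a small hand-written HTML fragment before pointing them at a live page. The markup below is a made-up stand-in that only mimics the structure described above; it is not ZipRecruiter’s actual HTML. One detail worth knowing: the selector engine behind BeautifulSoup’s `select()` spells the text-matching pseudo-class `:-soup-contains` rather than `:contains`.

```python
from bs4 import BeautifulSoup

# Hypothetical fragment that mimics the structure described above.
SAMPLE_HTML = """
<section><div>
  <article id="job-card-abc123">
    <h2>Marketing Manager</h2>
    <a data-testid="job-card-company" href="/co/Acme/Jobs">Acme</a>
    <p>$80K - $100K/yr</p>
  </article>
  <article id="job-card-def456">
    <h2>Brand Strategist</h2>
    <a data-testid="job-card-company" href="/co/Globex/Jobs">Globex</a>
  </article>
</div></section>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

# Each list comprehension applies one selector from the table.
titles = [h2.get_text(strip=True) for h2 in soup.select("article h2")]
companies = [
    a.get_text(strip=True)
    for a in soup.select("a[data-testid='job-card-company']")
]
salaries = [
    p.get_text(strip=True)
    for p in soup.select("article p:-soup-contains('$')")
]

print(titles)
print(companies)
print(salaries)
```

The second card has no salary paragraph, so the salary list is shorter than the other two. That is a reminder to extract fields card by card rather than zipping page-wide lists together.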

Environment Setup and Dependencies

Install Python (version 3.13 or higher) and the required libraries. Open your terminal and run:

    pip install requests beautifulsoup4

These two libraries are all you need:

- `requests` sends HTTP requests to the HasData API

- `beautifulsoup4` parses the HTML and extracts data

Why HasData API?

ZipRecruiter uses JavaScript to load job listings dynamically. Simple HTTP requests won’t work: you’ll get a nearly empty page, because the content loads after the page opens. You need a tool that renders JavaScript like a real browser.

HasData API handles this automatically. It runs a real browser in the cloud, waits for JavaScript to finish loading, and returns the fully rendered HTML. It also rotates proxies from different countries, which makes blocks much less likely even when you scrape many pages.

Get Your API Key

Sign up at hasdata.com and get your API key from the dashboard. It’s free to start.

Store your API key safely. You’ll use it in every request:

    HASDATA_API_KEY = "HASDATA-API-KEY"
    HASDATA_API_URL = "https://api.hasdata.com/scrape/web"

That’s it for setup. You’re ready to start scraping.
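One way to keep the key out of your source code is to read it from an environment variable. The sketch below assumes a variable named `HASDATA_API_KEY`; both that name and the `load_api_key` helper are choices made for this example, not something HasData requires.

```python
import os


def load_api_key(env=os.environ, name="HASDATA_API_KEY"):
    """Return the API key from the environment, or fail with a clear message."""
    key = env.get(name, "").strip()
    if not key:
        raise RuntimeError(
            f"Set the {name} environment variable before running the scraper"
        )
    return key


# Demonstration with a stand-in mapping; in a real run, call load_api_key()
# with no arguments so it reads os.environ.
print(load_api_key({"HASDATA_API_KEY": "demo-key"}))
```

Failing early with an explicit message is easier to debug than sending requests with a placeholder key.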

Implementing the Extraction Logic

The process has three main stages: fetching pages, parsing HTML, and extracting data from each job card.

Fetching Pages with HasData API

First, create a function that fetches rendered HTML through HasData API:

    import requests

    def fetch_page(url):
        """Fetch page through HasData API"""
        headers = {
            "x-api-key": HASDATA_API_KEY,
            "Content-Type": "application/json"
        }
        payload = {
            "url": url,
            "proxyType": "residential",
            "proxyCountry": "US",
            "jsRendering": True,
            "blockAds": True,
            "outputFormat": ["html"]
        }
        response = requests.post(HASDATA_API_URL, json=payload, headers=headers)
        return response.text

This function sends a POST request to HasData API with the target URL and configuration. The API returns fully rendered HTML with all JavaScript content loaded. Setting `jsRendering: True` ensures dynamic content appears, and `proxyType: "residential"` uses real residential IP addresses to reduce the chance of detection.
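As written, `fetch_page` returns whatever body comes back, even when the request failed. A small retry wrapper is one way to make the fetch step more forgiving. The sketch below is a generic helper rather than part of the HasData API: the name `fetch_with_retries`, its parameters, and the linear backoff are all assumptions for illustration. It accepts any function that takes a URL and returns text, so it can wrap `fetch_page` unchanged.

```python
import time


def fetch_with_retries(fetch, url, attempts=3, delay=2.0, sleep=time.sleep):
    """Call fetch(url) until it returns non-empty text or attempts run out."""
    last_error = None
    for attempt in range(1, attempts + 1):
        try:
            html = fetch(url)
            if html and html.strip():
                return html
            last_error = ValueError("empty response body")
        except Exception as exc:  # e.g. timeouts or HTTP errors raised by fetch
            last_error = exc
        if attempt < attempts:
            # Linear backoff: wait delay, then 2 * delay, and so on.
            sleep(delay * attempt)
    raise RuntimeError(
        f"Giving up on {url} after {attempts} attempts"
    ) from last_error
```

A call would look like `html = fetch_with_retries(fetch_page, url)`. If you also add `response.raise_for_status()` inside `fetch_page`, HTTP error codes become exceptions that this wrapper retries.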

Parsing Job Cards

Once you have the HTML, use BeautifulSoup to find all job listings:

    from bs4 import BeautifulSoup

    soup = BeautifulSoup(html, 'html.parser')
    job_cards = soup.find_all('article')
    print(f"Found {len(job_cards)} jobs")

ZipRecruiter wraps each job posting in an `<article>` tag. This selector finds all job cards on the page at once. If you see `0 jobs`, the page didn’t load correctly. If you see a number between 20 and 40, you’re successfully finding the cards.

Extracting Data Fields

For each job card, extract all available information:

    def extract_job_data(card):
        """Extract all data from a single job card"""
        # Job ID
        job_id = card.get('id', '').replace('job-card-', '')

        # Title
        title_elem = card.find('h2')
        title = title_elem.get_text(strip=True) if title_elem else 'N/A'

        # Company
        company_elem = card.find('a', {'data-testid': 'job-card-company'})
        company = company_elem.get_text(strip=True) if company_elem else 'N/A'

        # Location
        location_elem = card.find('a', {'data-testid': 'job-card-location'})
        location = location_elem.get_text(strip=True) if location_elem else 'N/A'

        # Salary - search paragraphs for dollar signs
        salary = 'N/A'
        for p in card.find_all('p'):
            text = p.get_text(strip=True)
            if '$' in text and '/' in text:
                salary = text
                break

        # Company logo
        logo_elem = card.find('img')
        logo_url = logo_elem.get('src') if logo_elem else None

        # Badges
        badges = []
        if card.find('p', string='New'):
            badges.append('New')
        if card.find('p', string='Quick apply'):
            badges.append('Quick apply')

Use `data-testid` attributes when available because they’re more stable than CSS classes. Always check if an element exists before calling `.get_text()` to avoid errors when data is missing. The `strip=True` parameter removes extra whitespace. Return `'N/A'` for missing data to keep your data structure consistent.

For salary, search through all paragraph tags looking for text containing both `$` and `/` to match formats like `$80K/yr` or `$25/hr`. The loop breaks after finding the first match to avoid picking up other dollar amounts.

Data Cleaning and Standardization

Raw scraped data needs cleaning before it’s useful. Convert URLs, separate location components, and structure the output.

Converting Relative to Absolute URLs

Company links on ZipRecruiter are relative URLs that start with `/`. Convert them to complete URLs:

    company_url = company_elem.get('href') if company_elem else None
    if company_url and not company_url.startswith('http'):
        company_url = 'https://www.ziprecruiter.com' + company_url

This transforms `/co/Acme/Jobs` into `https://www.ziprecruiter.com/co/Acme/Jobs`. Check if the URL already starts with `http` to avoid breaking external links.

Separating Location and Remote Status

Location data includes both city/state and remote status in one field. Parse them separately:

    location_elem = card.find('a', {'data-testid': 'job-card-location'})
    location = 'N/A'
    is_remote = False

    if location_elem:
        # Get parent element to check for "· Remote" text
        location_parent = location_elem.parent
        full_text = location_parent.get_text(strip=True)

        # Check if remote
        is_remote = 'Remote' in full_text

        # Get just the city/state
        location = location_elem.get_text(strip=True)

The location link contains only the city and state like `Austin, TX`. The remote indicator appears as separate text `· Remote` next to it. Checking the parent element’s full text catches this. Now you have both `location: "Austin, TX"` and `is_remote: true` as separate fields, making it easy to filter for remote-only jobs later.
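Because the parent text also includes the link text, a location whose own name contains the word “Remote” would be flagged as remote by the check above. A slightly stricter variant looks only at the text around the link. This is a sketch against made-up markup that is assumed to mirror the layout described above; `split_location` is a hypothetical helper name.

```python
from bs4 import BeautifulSoup

# Hypothetical card markup, assumed to mirror the layout described above.
CARD_HTML = """
<article>
  <p><a data-testid="job-card-location">Austin, TX</a> · Remote</p>
</article>
"""


def split_location(card):
    """Return (location, is_remote) for one job card."""
    link = card.find("a", {"data-testid": "job-card-location"})
    if link is None:
        return "N/A", False
    location = link.get_text(strip=True)
    # Join the parent's text pieces with spaces, then drop the link text
    # so only the surrounding text is inspected.
    parent_text = link.parent.get_text(" ", strip=True)
    extra = parent_text.replace(location, "", 1)
    return location, "remote" in extra.lower()


card = BeautifulSoup(CARD_HTML, "html.parser").find("article")
print(split_location(card))
```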

Building the Complete Job Dictionary

Combine all extracted and cleaned data into a structured dictionary:

        return {
            'job_id': job_id,
            'title': title,
            'company': company,
            'company_url': company_url,
            'location': location,
            'is_remote': is_remote,
            'salary': salary,
            'logo_url': logo_url,
            'badges': badges
        }

This structure makes the data easy to filter and analyze. You can search for remote jobs with `is_remote == True`, filter by company, or sort by salary.

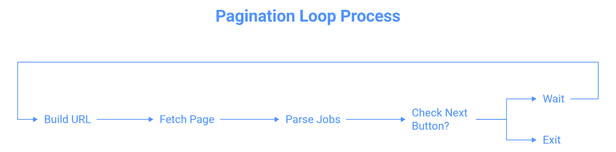

Handling Pagination and Scale

Most searches return multiple pages of results. Loop through pages automatically until you reach the end.

Building Pagination URLs

ZipRecruiter uses a specific URL pattern for pages:

    query_formatted = query.replace(' ', '+')
    location_formatted = location.replace(' ', '+')

    if page == 1:
        url = f"https://www.ziprecruiter.com/jobs-search?search={query_formatted}&location={location_formatted}"
    else:
        url = f"https://www.ziprecruiter.com/jobs-search/{page}?search={query_formatted}&location={location_formatted}"

Page 1 uses `/jobs-search?search=...` while pages 2+ use `/jobs-search/2?search=...`. Replace spaces with `+` signs in the query and location parameters.

Example URLs:

- Page 1: `/jobs-search?search=python+developer&location=Remote`

- Page 2: `/jobs-search/2?search=python+developer&location=Remote`

- Page 3: `/jobs-search/3?search=python+developer&location=Remote`
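Replacing spaces by hand works for simple queries, but a location such as “New York, NY” also contains a comma that should be percent-encoded. The standard library’s `urlencode` handles both cases. The sketch below keeps the URL pattern described above; `build_search_url` is a helper name chosen for this example.

```python
from urllib.parse import urlencode

BASE_URL = "https://www.ziprecruiter.com/jobs-search"


def build_search_url(query, location, page=1):
    """Build a search URL; page 1 has no page number in the path."""
    # urlencode turns spaces into "+" and escapes characters such as commas.
    params = urlencode({"search": query, "location": location})
    path = BASE_URL if page == 1 else f"{BASE_URL}/{page}"
    return f"{path}?{params}"


print(build_search_url("python developer", "Remote"))
print(build_search_url("marketing manager", "New York, NY", page=2))
```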

Detecting the Last Page

Check for the next page button to know when to stop:

    for page in range(1, max_pages + 1):
        # Fetch and parse page...

        # Check if more pages exist
        next_button = soup.find('a', {'title': 'Next Page'})
        if not next_button:
            print("No more pages available")
            break

        # Wait before next request
        time.sleep(2)

The next page button disappears on the last page. When `find()` returns `None`, stop the loop. Add a 2-second delay with `time.sleep(2)` between requests to be respectful to the server and avoid rate limiting.
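Pagination controls are sometimes rendered as links and sometimes as buttons, and the tag can change between redesigns. A check that matches on the `title` attribute alone keeps working in either case. The HTML fragments below are invented for illustration, and `has_next_page` is a hypothetical helper.

```python
from bs4 import BeautifulSoup


def has_next_page(soup):
    """True if any element carries title="Next Page", whatever its tag."""
    return soup.find(attrs={"title": "Next Page"}) is not None


# Made-up pagination fragments: a middle page and a last page.
middle = BeautifulSoup(
    '<nav><a title="Previous Page"></a><a title="Next Page"></a></nav>',
    "html.parser",
)
last = BeautifulSoup(
    '<nav><button title="Previous Page"></button></nav>',
    "html.parser",
)

print(has_next_page(middle), has_next_page(last))
```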

Saving Results

After scraping, save the data to a file for later use or analysis.

Exporting to JSON

JSON format preserves the nested structure of your data:

    import json

    with open('jobs_full.json', 'w', encoding='utf-8') as f:
        json.dump(jobs, f, indent=2, ensure_ascii=False)

    print(f"Saved {len(jobs)} jobs to jobs_full.json")

The `indent=2` parameter makes the file readable. Set `ensure_ascii=False` to properly handle company names with special characters. The resulting file looks like:

    [
      {
        "job_id": "hwgl32RTP2J7yXQ1o5EmTw",
        "title": "Software Engineer, Full Stack",
        "company": "ZipRecruiter",
        "company_url": "https://www.ziprecruiter.com/co/ZipRecruiter/Jobs/--in-Remote?uuid=qWAzEkSdWiDDN9H1IU6DH0KAE20%3D&radius=25",
        "location": "Palo Alto, CA",
        "is_remote": true,
        "salary": "$105K - $145K/yr",
        "logo_url": "https://www.ziprecruiter.com/svc/fotomat/public-nosensitive-ziprecruiter-logos/company/314d8bb3.png",
        "is_new": false,
        "quick_apply": true,
        "be_seen_first": false,
        "badges": [
          "Quick apply"
        ]
      },
      …
    ]

Exporting to CSV

CSV format works well with Excel and data analysis tools:

    import csv

    with open('jobs_full.csv', 'w', newline='', encoding='utf-8') as f:
        if jobs:
            writer = csv.DictWriter(f, fieldnames=jobs[0].keys())
            writer.writeheader()
            writer.writerows(jobs)

    print(f"Saved {len(jobs)} jobs to jobs_full.csv")

CSV files flatten nested data. The `badges` list becomes a string like `['New', 'Quick apply']`. Use `newline=''` to avoid extra blank rows on Windows.
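If the Python-style list string is awkward to work with in a spreadsheet, one option is to join list values into a single delimited cell before writing. The sketch below writes to an in-memory buffer so it can run without touching the disk; `jobs_to_csv` and the `"; "` separator are choices made for this example.

```python
import csv
import io


def jobs_to_csv(jobs, list_separator="; "):
    """Serialize job dicts to CSV text, joining list values into one cell."""
    if not jobs:
        return ""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=list(jobs[0].keys()))
    writer.writeheader()
    for job in jobs:
        # Lists such as badges become "New; Quick apply" instead of a repr.
        row = {
            key: list_separator.join(value) if isinstance(value, list) else value
            for key, value in job.items()
        }
        writer.writerow(row)
    return buffer.getvalue()


sample = [{"title": "Data Analyst", "badges": ["New", "Quick apply"]}]
print(jobs_to_csv(sample))
```

To save the result, write the returned text to a file opened with `newline=''`, as in the snippet above.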

Complete Working Job Scraper

Here’s the full scraper that combines all the pieces:

    import requests
    from bs4 import BeautifulSoup
    import json
    import time

    # Configuration
    HASDATA_API_KEY = "HASDATA-API-KEY"
    HASDATA_API_URL = "https://api.hasdata.com/scrape/web"


    def fetch_page(url):
        """Fetch page through HasData API"""
        headers = {
            "x-api-key": HASDATA_API_KEY,
            "Content-Type": "application/json"
        }
        payload = {
            "url": url,
            "proxyType": "residential",
            "proxyCountry": "US",
            "jsRendering": True,
            "blockAds": True,
            "outputFormat": ["html"]
        }
        response = requests.post(HASDATA_API_URL, json=payload, headers=headers)
        return response.text


    def extract_job_data(card):
        """Extract all data from a single job card"""
        # Job ID from article tag
        job_id = card.get('id', '').replace('job-card-', '') if card.get('id') else None

        # Title
        title_elem = card.find('h2')
        title = title_elem.get_text(strip=True) if title_elem else 'N/A'

        # Company
        company_elem = card.find('a', {'data-testid': 'job-card-company'})
        company = company_elem.get_text(strip=True) if company_elem else 'N/A'
        company_url = company_elem.get('href') if company_elem else None
        if company_url and not company_url.startswith('http'):
            company_url = 'https://www.ziprecruiter.com' + company_url

        # Location and Remote status
        location_elem = card.find('a', {'data-testid': 'job-card-location'})
        location = 'N/A'
        is_remote = False

        if location_elem:
            # Get the full location text including remote
            location_parent = location_elem.parent
            full_location_text = location_parent.get_text(strip=True) if location_parent else location_elem.get_text(strip=True)

            # Check if remote
            is_remote = 'Remote' in full_location_text

            # Get just the location without "· Remote"
            location = location_elem.get_text(strip=True)

        # Salary
        salary = 'N/A'
        for p in card.find_all('p'):
            text = p.get_text(strip=True)
            if '$' in text and '/' in text:  # Make sure it's salary format
                salary = text
                break

        # Company logo
        logo_elem = card.find('img')
        logo_url = logo_elem.get('src') if logo_elem else None

        # Badges
        badges = []
        is_new = False
        quick_apply = False
        be_seen_first = False

        # Check for "New" badge
        new_badge = card.find('p', string='New')
        if new_badge:
            is_new = True
            badges.append('New')

        # Check for "Quick apply" badge
        quick_apply_elem = card.find('p', string='Quick apply')
        if quick_apply_elem:
            quick_apply = True
            badges.append('Quick apply')

        # Check for "Be Seen First" badge
        be_seen_elem = card.find('p', string='Be Seen First')
        if be_seen_elem:
            be_seen_first = True
            badges.append('Be Seen First')

        return {
            'job_id': job_id,
            'title': title,
            'company': company,
            'company_url': company_url,
            'location': location,
            'is_remote': is_remote,
            'salary': salary,
            'logo_url': logo_url,
            'is_new': is_new,
            'quick_apply': quick_apply,
            'be_seen_first': be_seen_first,
            'badges': badges
        }


    def scrape_jobs(query, location, max_pages=3):
        """Scrape multiple pages of job listings"""
        all_jobs = []

        # Format query and location for URL
        query_formatted = query.replace(' ', '+')
        location_formatted = location.replace(' ', '+')

        for page in range(1, max_pages + 1):
            print(f"\n--- Scraping page {page} ---")

            # Build URL
            if page == 1:
                url = f"https://www.ziprecruiter.com/jobs-search?search={query_formatted}&location={location_formatted}"
            else:
                url = f"https://www.ziprecruiter.com/jobs-search/{page}?search={query_formatted}&location={location_formatted}"

            print(f"URL: {url}")

            # Fetch page
            html = fetch_page(url)
            soup = BeautifulSoup(html, 'html.parser')

            # Find job cards
            job_cards = soup.find_all('article')
            print(f"Found {len(job_cards)} jobs")

            if len(job_cards) == 0:
                print("No jobs found, stopping")
                break

            # Extract data from each card
            for card in job_cards:
                job = extract_job_data(card)
                all_jobs.append(job)

            # Check for next page
            next_button = soup.find('a', {'title': 'Next Page'})
            if not next_button:
                print("No next page button found")
                break

            # Wait before next page
            if page < max_pages:
                print("Waiting 2 seconds...")
                time.sleep(2)

        return all_jobs


    def main():
        """Main function"""
        print("=" * 60)
        print("ZipRecruiter Job Scraper")
        print("=" * 60)

        # Scrape jobs
        jobs = scrape_jobs(
            query="python developer",
            location="Remote",
            max_pages=3
        )

        # Save to JSON
        with open('jobs_full.json', 'w', encoding='utf-8') as f:
            json.dump(jobs, f, indent=2, ensure_ascii=False)

        print(f"\nSaved {len(jobs)} jobs to jobs_full.json")

        # Show summary
        print(f"\n--- Summary ---")
        print(f"Total jobs: {len(jobs)}")
        print(f"Remote jobs: {sum(1 for j in jobs if j['is_remote'])}")
        print(f"Jobs with salary: {sum(1 for j in jobs if j['salary'] != 'N/A')}")
        print(f"New jobs: {sum(1 for j in jobs if j['is_new'])}")
        print(f"Quick apply: {sum(1 for j in jobs if j['quick_apply'])}")
        print(f"Be Seen First: {sum(1 for j in jobs if j['be_seen_first'])}")

        # Show first job
        if jobs:
            print(f"\n--- First Job ---")
            print(json.dumps(jobs[0], indent=2))


    if __name__ == "__main__":
        main()

Run the scraper to get output like:

    ============================================================
    ZipRecruiter Job Scraper
    ============================================================

    --- Scraping page 1 ---
    URL: https://www.ziprecruiter.com/jobs-search?search=python+developer&location=Remote
    Found 41 jobs
    Waiting 2 seconds...

    --- Scraping page 2 ---
    URL: https://www.ziprecruiter.com/jobs-search/2?search=python+developer&location=Remote
    Found 41 jobs
    Waiting 2 seconds...

    --- Scraping page 3 ---
    URL: https://www.ziprecruiter.com/jobs-search/3?search=python+developer&location=Remote
    Found 40 jobs

    Saved 122 jobs to jobs_full.json

    --- Summary ---
    Total jobs: 122
    Remote jobs: 96
    Jobs with salary: 92
    New jobs: 28
    Quick apply: 100
    Be Seen First: 22

    --- First Job ---
    {
      "job_id": "hwgl32RTP2J7yXQ1o5EmTw",
      "title": "Software Engineer, Full Stack",
      "company": "ZipRecruiter",
      "company_url": "https://www.ziprecruiter.com/co/ZipRecruiter/Jobs/--in-Remote?uuid=qWAzEkSdWiDDN9H1IU6DH0KAE20%3D&radius=25",
      "location": "Palo Alto, CA",
      "is_remote": true,
      "salary": "$105K - $145K/yr",
      "logo_url": "https://www.ziprecruiter.com/svc/fotomat/public-nosensitive-ziprecruiter-logos/company/314d8bb3.png",
      "is_new": false,
      "quick_apply": true,
      "be_seen_first": false,
      "badges": [
        "Quick apply"
      ]
    }

The scraper collects jobs from multiple pages, handles pagination automatically, and saves everything to a JSON file ready for analysis.

Common Challenges When Scraping Job Boards

Job sites present unique challenges that go beyond typical web scraping. Here are the main issues you’ll encounter and how to handle them.

JavaScript-Heavy Content

Most modern job boards load listings dynamically with JavaScript. When you fetch a page with basic HTTP requests, you get an empty skeleton without any job data.

The problem. ZipRecruiter, Indeed, and similar sites render content after the page loads. A simple `requests.get()` returns HTML with no job cards visible.

The solution. Use HasData API or browser automation tools like Selenium. These render JavaScript before returning the HTML. HasData handles this automatically - you send a URL and get back fully rendered content with all jobs loaded.

Frequent Layout Changes

Job sites update their HTML structure regularly. A selector that works today might break next week when they redesign a section.

The problem. CSS classes like `.job-card-v2-wrapper` change to `.job-listing-container` after an update. Your scraper stops finding jobs.

The solution. Use `data-testid` attributes when available - sites change these less often. If a selector breaks, check the current HTML structure in DevTools and update your code. Keep selectors simple and specific. Instead of targeting five nested div classes, use unique attributes like `data-testid='job-card-company'`.

Missing Data Fields

Not all job postings include every field. Some lack salary information, others don’t specify if they’re remote.

The problem. Calling `.get_text()` on a `None` element crashes your scraper.

The solution. Always check if an element exists before extracting text:

    salary_elem = card.find('p', string=lambda x: x and '$' in x)
    salary = salary_elem.get_text(strip=True) if salary_elem else 'N/A'

Return `'N/A'` for missing fields instead of `None` or skipping the job entirely. This keeps your data structure consistent and makes it easier to filter results later.

Duplicate Job Listings

Job boards often show the same posting multiple times, once from the company directly and again from recruiting agencies.

The problem. Your dataset contains duplicate jobs with slightly different titles or company names.

The solution. Track job IDs to filter duplicates:

    seen_ids = set()
    unique_jobs = []

    for job in all_jobs:
        if job['job_id'] not in seen_ids:
            seen_ids.add(job['job_id'])
            unique_jobs.append(job)

    print(f"Removed {len(all_jobs) - len(unique_jobs)} duplicates")

Use the `job_id` from the article tag’s `id` attribute, which is unique per posting. If no ID exists, create one from the title and company name combined.
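The fallback for postings without an ID can be sketched as follows. The normalization used here (lowercased, whitespace-stripped title and company) is an assumption made for this example; adjust it to however loosely you want to match.

```python
def dedupe_jobs(jobs):
    """Keep the first occurrence of each job, keyed by ID or title+company."""
    seen = set()
    unique = []
    for job in jobs:
        # Prefer the job ID; fall back to a normalized (title, company) pair.
        key = job.get("job_id") or (
            job.get("title", "").strip().lower(),
            job.get("company", "").strip().lower(),
        )
        if key in seen:
            continue
        seen.add(key)
        unique.append(job)
    return unique


sample_jobs = [
    {"job_id": "a1", "title": "Python Developer", "company": "Acme"},
    {"job_id": "a1", "title": "Python Developer", "company": "Acme"},
    {"job_id": None, "title": "Data Engineer", "company": "Globex"},
    {"job_id": None, "title": "data engineer ", "company": "GLOBEX"},
]
print(len(dedupe_jobs(sample_jobs)))
```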

Rate Limiting and Blocks

Job sites track how many requests come from each IP address. Too many requests too fast triggers blocks or captchas.

The problem. After scraping 50-100 pages, you start getting empty responses or error pages.

The solution. Add delays between requests and use residential proxies. HasData API rotates proxies automatically, so each request comes from a different IP address. Always wait at least 2 seconds between page requests, for example with `time.sleep(2)` as in the pagination loop.

Scrape during off-peak hours when possible. Job sites get most traffic during business hours in their timezone.
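A fixed delay produces a very regular request pattern. Adding a small random component is a common variation. The helper below is a sketch: the name `polite_pause` and its default values are assumptions, and the `sleep` parameter exists only so the function can be tested without actually waiting.

```python
import random
import time


def polite_pause(base=2.0, jitter=1.0, sleep=time.sleep):
    """Sleep for base seconds plus a random extra of up to jitter seconds."""
    delay = base + random.uniform(0, jitter)
    sleep(delay)
    return delay
```

Calling `polite_pause()` between page requests waits somewhere between 2 and 3 seconds each time.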

Inconsistent Data Formats

Salary, location, and date formats vary between postings. One job shows “$80K - $100K/yr”, another shows “$80,000 - $100,000 per year”.

The problem. Filtering and sorting becomes difficult when salary formats differ.

The solution. Parse text into standardized formats. For salary:

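    import re

    def parse_salary(salary_text):
        """Convert salary text to numbers"""
        if not salary_text or salary_text == 'N/A':
            return None

        # Remove commas and spaces; lowercase for matching
        text = salary_text.replace(',', '').replace(' ', '').lower()

        # Find numbers, including decimals such as 25.50
        numbers = [float(n) for n in re.findall(r'\d+(?:\.\d+)?', text)]
        if not numbers:
            return None

        # A "K" directly after a digit means thousands
        multiplier = 1000 if re.search(r'\dk', text) else 1

        # Check if yearly or hourly
        if '/yr' in text or 'year' in text:
            period = 'yearly'
        elif '/hr' in text or 'hour' in text:
            period = 'hourly'
        else:
            period = None

        return {
            'min': numbers[0] * multiplier,
            'max': numbers[1] * multiplier if len(numbers) > 1 else None,
            'period': period
        }

Both “$80K - $100K/yr” and “$80,000 - $100,000 per year” now parse to the same yearly range. You can filter jobs by minimum salary or calculate averages across postings.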

Expired or Filled Positions

Job boards keep old listings online even after positions are filled. You might scrape jobs that are no longer accepting applications.

The problem. Your dataset includes outdated jobs that waste time when applying.

The solution. Filter by the “New” badge or posting date when available. Some sites show “Posted 2 days ago” text that you can parse. Set a maximum age threshold.

Check for “actively recruiting” or “urgently hiring” badges that indicate the position is still open.
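Parsing relative dates can be sketched with a small regular expression. The phrasings handled below (“Posted 2 days ago”, “30+ days ago”, “Posted today”) are assumptions about how a board might word its labels, and a month is approximated as 30 days. Check the actual text on the site you scrape and extend the pattern as needed.

```python
import re

# Approximate length of each unit in days; hours count as the same day.
UNIT_DAYS = {"hour": 0, "day": 1, "week": 7, "month": 30}


def posted_days_ago(text):
    """Return the approximate age in days, or None if not recognized."""
    lowered = text.lower()
    if "today" in lowered or "just posted" in lowered:
        return 0
    match = re.search(r"(\d+)\+?\s*(hour|day|week|month)s?\s+ago", lowered)
    if not match:
        return None
    count, unit = int(match.group(1)), match.group(2)
    return count * UNIT_DAYS[unit]


def is_fresh(text, max_age_days=14):
    """True if the posting age is known and within the threshold."""
    age = posted_days_ago(text)
    return age is not None and age <= max_age_days


print(posted_days_ago("Posted 2 days ago"), is_fresh("Posted 3 weeks ago"))
```

Treating unrecognized text as not fresh is the conservative choice here; flip that default if you would rather keep postings with no date.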

Legal Considerations for Job Scraping

Job boards contain publicly posted information, but scraping them still has legal boundaries. Scraping job postings for personal job search or internal business analysis is typically acceptable. You’re automating what you could do manually by browsing listings.

What you can do:

- Scrape for personal job search and tracking applications

- Collect data for internal salary research or market analysis

- Monitor competitor hiring patterns for business intelligence

What you should not do:

- Republish scraped job listings on your own website

- Sell scraped job data to third parties

- Scrape or store personal information about job seekers

Before scraping, check the site’s robots.txt file and read their terms of service. Use delays between requests and scrape during off-peak hours. If you’re sharing insights publicly, aggregate the data and remove identifying details. You can say “Python developer salaries average $120K” without republishing specific listings.

If you’re unsure about what’s allowed, contact the job board or check if they offer an official API.
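The robots.txt check can be automated with Python’s standard `urllib.robotparser` module. The rules below are invented for illustration and parsed from a string so the example runs offline; the user agent name `my-job-scraper` is also a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt content; fetch the real file from the site you target.
SAMPLE_ROBOTS = """
User-agent: *
Disallow: /private/
Allow: /
"""

parser = RobotFileParser()
parser.parse(SAMPLE_ROBOTS.splitlines())

# can_fetch(user_agent, url) reports whether the rules allow the request.
allowed = parser.can_fetch(
    "my-job-scraper", "https://example.com/jobs-search?search=python"
)
blocked = parser.can_fetch("my-job-scraper", "https://example.com/private/report")
print(allowed, blocked)
```

Against a live site, `parser.set_url(...)` followed by `parser.read()` downloads and parses the real file. A permissive robots.txt does not replace reading the terms of service.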

Conclusion

You’ve learned how to scrape job boards by building a complete ZipRecruiter scraper. The techniques you used here apply to any job site. Find the container elements holding job cards, identify the selectors for title, company, location, and salary, then loop through pages using their pagination structure.

Most job boards work in much the same way. They display listings in cards or rows, use similar data fields, and paginate results. The HTML structure differs, but the extraction process stays largely the same: inspect the page, map the selectors, extract the data, and handle pagination.

Use this scraper as a template. When you need data from Indeed, LinkedIn, or any other job board, follow the same steps. Check if they use JavaScript rendering and choose HasData API or basic requests accordingly. Build the scraper incrementally: test with one page first, then scale to multiple pages.