Redfin is one of the largest online real estate platforms in the U.S., ranking fifth among top brokers by revenue. It offers data ranging from property listings for sale and rent to market insights and convenient search tools.

In this article, we’ll guide you through various methods to gather data from Redfin. We’ll explore building a custom Python scraper with Beautiful Soup, introduce a quick trick for retrieving data directly from Redfin’s JSON using Selenium, and show you how to create a scraper using the Redfin API.

Scrape Redfin Property Data with Beautiful Soup

Let’s start with a Python script without using any extra tools or APIs.

Full Redfin Scraper using Beautiful Soup

If you’re looking for a ready-to-use script without diving into the details, here it is:

import requests
from bs4 import BeautifulSoup
import csv

url = "https://www.redfin.com/city/18142/FL/Tampa"

headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/129.0.0.0 Safari/537.36"
}

response = requests.get(url, headers=headers)
properties = []

if response.status_code == 200:
    soup = BeautifulSoup(response.content, "html.parser")
    homecards = soup.find_all("div", class_="HomeCardContainer")

    for card in homecards:
        if card.find("a", class_="link-and-anchor"):
            link = card.find("a", class_="link-and-anchor")["href"]
            full_link = "https://www.redfin.com" + link
            price = card.find("span", class_="bp-Homecard__Price--value").text.strip()
            beds = card.find("span", class_="bp-Homecard__Stats--beds").text.strip()
            baths = card.find("span", class_="bp-Homecard__Stats--baths").text.strip()
            address = card.find("div", class_="bp-Homecard__Address").text.strip()

            print("Link:", full_link)
            print("Price:", price)
            print("Beds:", beds)
            print("Baths:", baths)
            print("Address:", address)
            print()

            properties.append({
                "Price": price,
                "Beds": beds,
                "Baths": baths,
                "Address": address,
                "Link": full_link
            })

csv_filename = "redfin_properties.csv"

with open(csv_filename, mode="w", newline="", encoding="utf-8") as file:
    writer = csv.DictWriter(file, fieldnames=["Price", "Beds", "Baths", "Address", "Link"])
    writer.writeheader()
    writer.writerows(properties)

We’ve also uploaded it to Google Colab, so you can easily run it right away.

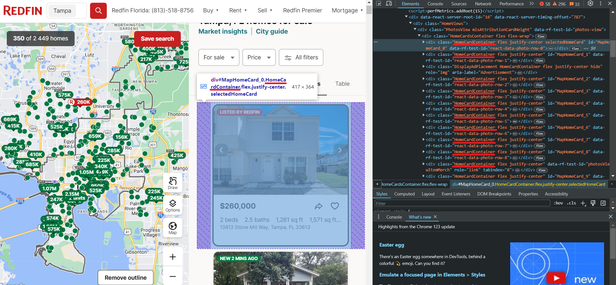

Inspect Redfin Page

Before scraping data, let’s research the specific data we can extract from Redfin. To do this, we’ll navigate to the website and open DevTools (F12 or right-click and Inspect) to immediately identify the selectors for the elements we’re interested in. We’ll need this information later. First, let’s examine the listings page.

As we can see, the properties are located in containers with the div tag and have the class HomeCardContainer. This means that to get all the ads on the page, it will be enough to get all the elements with this tag and class.

Now let’s take a closer look at one property. Since all the elements on the page have the same structure, what works for one element will work for all the others.

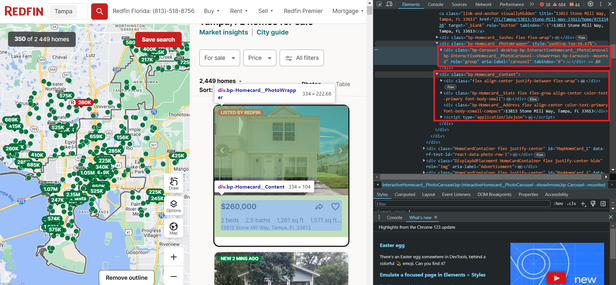

As you can see, the data is stored in two blocks. The first block contains the images for the listing, while the second block holds the textual data, including information about the price, number of rooms, amenities, address, and more. Below is a breakdown of the CSS selectors used to locate each specific data point on the page:

| Data Field | CSS Selector |
|---|---|
| Property Card | div.HomeCardContainer |
| Listing Link | a.link-and-anchor |
| Price | span.bp-Homecard__Price--value |
| Beds | span.bp-Homecard__Stats--beds |
| Baths | span.bp-Homecard__Stats--baths |
| Address | div.bp-Homecard__Address |

Each selector targets a specific HTML element on the Redfin property page.
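The selectors in the table can be tried out before writing the full scraper. The sketch below is only an illustration: the HTML snippet is a hypothetical, heavily simplified stand-in for a real Redfin home card, and the helper names `text_or_none` and `parse_card` are not part of any library. It also shows one way to handle cards where a field is missing, since calling `.text` on a missing element raises an error.

```python
from bs4 import BeautifulSoup

# Hypothetical, simplified markup that mimics one Redfin home card.
# Real cards contain far more markup; the baths element is left out on purpose.
SAMPLE_HTML = """
<div class="HomeCardContainer">
  <a class="link-and-anchor" href="/FL/Tampa/123-Example-St-33601/home/1"></a>
  <span class="bp-Homecard__Price--value">$450,000</span>
  <span class="bp-Homecard__Stats--beds">3 beds</span>
  <div class="bp-Homecard__Address">123 Example St, Tampa, FL 33601</div>
</div>
"""


def text_or_none(card, selector):
    """Return the stripped text of the first match, or None if nothing matches."""
    element = card.select_one(selector)
    return element.get_text(strip=True) if element else None


def parse_card(card):
    """Extract the fields from the selector table for a single card."""
    link = card.select_one("a.link-and-anchor")
    return {
        "Link": ("https://www.redfin.com" + link["href"]) if link else None,
        "Price": text_or_none(card, "span.bp-Homecard__Price--value"),
        "Beds": text_or_none(card, "span.bp-Homecard__Stats--beds"),
        "Baths": text_or_none(card, "span.bp-Homecard__Stats--baths"),
        "Address": text_or_none(card, "div.bp-Homecard__Address"),
    }


soup = BeautifulSoup(SAMPLE_HTML, "html.parser")
cards = [parse_card(card) for card in soup.select("div.HomeCardContainer")]
print(cards[0])
```

Returning `None` for a missing field keeps one incomplete card from stopping the whole run; whether to skip such cards or keep them is a design choice that depends on how the data will be used.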

Notice the script tag, which contains all this textual data neatly structured in JSON format. However, to extract this data, you’ll need to use libraries that support headless browsers, such as Selenium, Pyppeteer, or Playwright.

Setting Up Your Development Environment

Now, let’s set up the necessary libraries to create our own scraper. In this article, we will be using Python version 3.12.2. You can read about how to install Python and how to use a virtual environment in our article on the introduction to scraping with Python. Next, install the required libraries:

pip install requests beautifulsoup4 selenium

To use Selenium, you may need to additionally download a webdriver. However, this is no longer necessary for the latest Selenium versions. If you are using an earlier version of the library, you can find all the essential links in the article on scraping with Selenium. You can also choose any other library to work with a headless browser.

Get Data with Requests and BS4

You can view the final version of the script in Google Colaboratory, where you can also run it. Create a file with the extension *.py where we will write our script. Now, let’s import the necessary libraries.

import requests
from bs4 import BeautifulSoup
import csv

Create a variable to store the URL of the listings page:

url = "https://www.redfin.com/city/18142/FL/Tampa"

To avoid an error while scraping, let’s add a User-Agent header to our request. For more information on what User-Agents are, why they’re essential, and a list of the latest User-Agents, please refer to our other article.

headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/129.0.0.0 Safari/537.36"
}

response = requests.get(url, headers=headers)

With the header in place, the website should return the property data. Let’s verify that the response status code is 200, indicating successful execution, and then parse the result:

if response.status_code == 200:
    soup = BeautifulSoup(response.content, "html.parser")

Now, let’s use the selectors we discussed in the previous section to retrieve the required data:

homecards = soup.find_all("div", class_="bp-Homecard__Content")

for card in homecards:

if (card.find("a", class_="link-and-anchor")):

link = card.find("a", class_="link-and-anchor")["href"]

full_link = "https://www.redfin.com" + link

price = card.find("span", class_="bp-Homecard__Price--value").text.strip()

beds = card.find("span", class_="bp-Homecard__Stats--beds").text.strip()

baths = card.find("span", class_="bp-Homecard__Stats--baths").text.strip()

address = card.find("div", class_="bp-Homecard__Address").text.strip()Print them on the screen:

print("Link:", full_link)

print("Price:", price)

print("Beds:", beds)

print("Baths:", baths)

print("Address:", address)

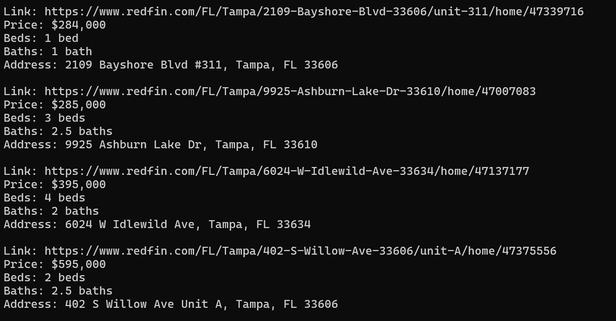

print()As a result, we will have 40 real estate objects with all the data we need in the convenient format:

If you want to save them, create a variable at the beginning of the script to store them:

properties = []

Instead of printing the data to the screen, store it in a variable:

            properties.append({
                "Price": price,
                "Beds": beds,
                "Baths": baths,
                "Address": address,
                "Link": full_link
            })

The next step depends on the format in which you want to save the data. Let’s save it to a CSV file:

csv_filename = "redfin_properties.csv"

with open(csv_filename, mode="w", newline="", encoding="utf-8") as file:
    writer = csv.DictWriter(file, fieldnames=["Price", "Beds", "Baths", "Address", "Link"])
    writer.writeheader()
    writer.writerows(properties)

This method lets you gather data in a way that may feel more familiar but is also more time-consuming.
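The values collected this way are plain strings, so sorting or filtering by price needs one more step. The sketch below shows one possible way to convert them to numbers. It assumes the text looks like "$450,000", "3 beds", or "2.5 baths"; those formats and the helper names `parse_price` and `parse_count` are assumptions for illustration, so check them against what the page actually returns.

```python
import re


def parse_price(text):
    """Convert a price string such as '$450,000' to an int, or None if no digits."""
    digits = re.sub(r"[^\d]", "", text or "")
    return int(digits) if digits else None


def parse_count(text):
    """Extract the first number from strings like '3 beds' or '2.5 baths'."""
    match = re.search(r"\d+(?:\.\d+)?", text or "")
    return float(match.group()) if match else None


# Hypothetical scraped row, in the same shape as the dictionaries stored in `properties`.
row = {"Price": "$450,000", "Beds": "3 beds", "Baths": "2.5 baths"}

cleaned = {
    "Price": parse_price(row["Price"]),
    "Beds": parse_count(row["Beds"]),
    "Baths": parse_count(row["Baths"]),
}
print(cleaned)  # {'Price': 450000, 'Beds': 3.0, 'Baths': 2.5}
```

Both helpers return `None` instead of raising when a value has no digits, which is convenient for listings that show a placeholder instead of a number.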

Parse Redfin JSON using Selenium

Let’s do the same thing in an easier way using Selenium.

Full Redfin Scraper using Selenium

Before we dive into the details, here’s the ready-to-use code for those who aren’t too interested in the process itself:

from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
import json

chrome_options = Options()
driver = webdriver.Chrome(options=chrome_options)

url = "https://www.redfin.com/city/18142/FL/Tampa"

try:
    driver.get(url)
    WebDriverWait(driver, 10).until(EC.presence_of_element_located((By.CLASS_NAME, "HomeCardContainer")))
    homecards = driver.find_elements(By.CLASS_NAME, "HomeCardContainer")
    properties = []

    for card in homecards:
        try:
            script_elements = card.find_elements(By.XPATH, ".//script[@type='application/ld+json']")
            for script in script_elements:
                json_data = json.loads(script.get_attribute("innerHTML"))
                if isinstance(json_data, list):
                    properties.extend(json_data)
                else:
                    properties.append(json_data)
        except Exception as e:
            print(f"Error: {e}")

    with open("properties.json", "w") as json_file:
        json.dump(properties, json_file, indent=4)
finally:
    driver.quit()

We’ve also uploaded this script to the Colab Research page, but you can only run it on your own PC, as Google Colaboratory doesn’t allow running headless browsers.

Developing a Redfin Scraper

This time, instead of using selectors and selecting all the elements individually, let’s use the JSON that we saw earlier and combine them all into one file. You can also remove unnecessary attributes or create your own JSON with your own structure, using only the necessary attributes.

Let’s create a new file and import all the necessary Selenium modules, as well as the library for JSON processing.

from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
import json

Now let’s create the Chrome options and start the driver. Recent Selenium versions locate the webdriver on their own, so no path is needed. To run Chrome in headless mode, call chrome_options.add_argument("--headless") before creating the driver:

chrome_options = Options()
driver = webdriver.Chrome(options=chrome_options)

Provide a link to the page with the listings:

url = "https://www.redfin.com/city/18142/FL/Tampa"

Let’s open the listings page. To make sure the browser is always closed, even if an error occurs, wrap the work in a try block:

try:
    driver.get(url)
    WebDriverWait(driver, 10).until(EC.presence_of_element_located((By.CLASS_NAME, "HomeCardContainer")))
    homecards = driver.find_elements(By.CLASS_NAME, "HomeCardContainer")
    properties = []

Iterate over all property cards and collect the JSON:

    for card in homecards:
        try:
            script_elements = card.find_elements(By.XPATH, ".//script[@type='application/ld+json']")
            for script in script_elements:
                json_data = json.loads(script.get_attribute("innerHTML"))
                if isinstance(json_data, list):
                    properties.extend(json_data)
                else:
                    properties.append(json_data)
        except Exception as e:
            print(f"Error: {e}")

Next, you can either continue processing the JSON objects or save them. At the end, we should stop the web driver:

finally:
    driver.quit()

To save the result:

with open("properties.json", "w") as json_file:
    json.dump(properties, json_file, indent=4)

This is an example of the JSON file that we will obtain as a result:

[
    {
        "@context": "http://schema.org",
        "name": "13813 Stone Mill Way, Tampa, FL 33613",
        "url": "https://www.redfin.com/FL/Tampa/13813-Stone-Mill-Way-33613/home/47115426",
        "address": {
            "@type": "PostalAddress",
            "streetAddress": "13813 Stone Mill Way",
            "addressLocality": "Tampa",
            "addressRegion": "FL",
            "postalCode": "33613",
            "addressCountry": "US"
        },
        "geo": {
            "@type": "GeoCoordinates",
            "latitude": 28.0712008,
            "longitude": -82.4771742
        },
        "numberOfRooms": 2,
        "floorSize": {
            "@type": "QuantitativeValue",
            "value": 1261,
            "unitCode": "FTK"
        },
        "@type": "SingleFamilyResidence"
    },
    …
]

This approach allows you to get more complete data in a simpler way. In addition, using a headless browser can reduce the risk of being blocked, as it allows you to better simulate the behavior of a real user.

However, it is worth remembering that if you scrape a large amount of data, the site may still block you. To avoid this, connect a proxy to your script. We have already discussed how to do this in a separate article dedicated to proxies.
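As mentioned earlier in this section, you can drop unnecessary attributes or build your own structure from the collected JSON. A minimal sketch of that idea follows, using the sample record shown above. The function names and the output keys are illustrative choices, not part of Redfin's data, and the sketch assumes that records without an address are not property listings and can be skipped.

```python
def flatten_listing(record):
    """Turn one nested schema.org record into a flat dictionary."""
    address = record.get("address") or {}
    geo = record.get("geo") or {}
    floor = record.get("floorSize") or {}
    return {
        "name": record.get("name"),
        "url": record.get("url"),
        "street": address.get("streetAddress"),
        "city": address.get("addressLocality"),
        "state": address.get("addressRegion"),
        "zip": address.get("postalCode"),
        "latitude": geo.get("latitude"),
        "longitude": geo.get("longitude"),
        "rooms": record.get("numberOfRooms"),
        "floor_size": floor.get("value"),
        "type": record.get("@type"),
    }


def flatten_listings(records):
    """Flatten every record that carries an address; skip everything else (assumption)."""
    return [
        flatten_listing(record)
        for record in records
        if isinstance(record, dict) and record.get("address")
    ]


# The sample record from the output above, plus a hypothetical non-listing entry.
sample = [
    {
        "@context": "http://schema.org",
        "name": "13813 Stone Mill Way, Tampa, FL 33613",
        "url": "https://www.redfin.com/FL/Tampa/13813-Stone-Mill-Way-33613/home/47115426",
        "address": {
            "@type": "PostalAddress",
            "streetAddress": "13813 Stone Mill Way",
            "addressLocality": "Tampa",
            "addressRegion": "FL",
            "postalCode": "33613",
            "addressCountry": "US",
        },
        "geo": {"@type": "GeoCoordinates", "latitude": 28.0712008, "longitude": -82.4771742},
        "numberOfRooms": 2,
        "floorSize": {"@type": "QuantitativeValue", "value": 1261, "unitCode": "FTK"},
        "@type": "SingleFamilyResidence",
    },
    {"@type": "Product", "name": "entry without an address"},
]

flat = flatten_listings(sample)
print(flat[0]["street"], flat[0]["floor_size"])  # 13813 Stone Mill Way 1261
```

A flat dictionary like this can be passed straight to `csv.DictWriter`, the same way as in the Beautiful Soup example.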

Scrape Redfin using API

Alright, if you’re like me and don’t want to deal with buying proxies or setting up captcha-solving services, here’s a simple solution. Redfin doesn’t offer an official API, so we’ll use HasData’s Redfin API instead. It returns all the data in a clean JSON format. Check out the full details in the docs.

Scrape Listings using Redfin API

Since this API has two endpoints, let’s consider both options and start with the one that returns data with a listing, as we have already collected this data using Python libraries. You can also find the ready-made script in Google Colaboratory.

Import the necessary libraries:

import requests
import json

Then, add your HasData API key:

api_key = "YOUR-API-KEY"

Set parameters:

params = {
    "keyword": "33321",
    "type": "forSale"
}

In addition to the ZIP code and property type, you can also specify the number of pages. Then provide the endpoint itself:

url = "https://api.hasdata.com/scrape/redfin/listing"

Set headers and request the API:

headers = {
    "x-api-key": api_key
}

response = requests.get(url, params=params, headers=headers)

Next, we will retrieve the data, and if the response code is 200, we can either further process the received data or save it:

if response.status_code == 200:
    properties = response.json()
    if properties:
        # Here you can process or save properties
        pass
    else:
        print("No listings found.")
else:
    print("Failed to retrieve listings. Status code:", response.status_code)

As a result, we will get a response where the data is stored in the following format (most of the data has been removed for clarity):

{
    "requestMetadata": {
        "id": "da2f6f99-d6cf-442d-b388-7d5a643e8042",
        "status": "ok",
        "url": "https://redfin.com/zipcode/33321"
    },
    "searchInformation": {
        "totalResults": 350
    },
    "properties": [
        {
            "id": 186541913,
            "mlsId": "F10430049",
            "propertyId": 41970890,
            "url": "https://www.redfin.com/FL/Tamarac/7952-Exeter-Blvd-W-33321/unit-101/home/41970890",
            "price": 397900,
            "address": {
                "street": "7952 W Exeter Blvd W #101",
                "city": "Tamarac",
                "state": "FL",
                "zipcode": "33321"
            },
            "propertyType": "Condo",
            "beds": 2,
            "baths": 2,
            "area": 1692,
            "latitude": 26.2248143,
            "longitude": -80.2916508,
            "description": "Welcome Home to this rarely available & highly sought-after villa in the active 55+ community of Kings Point, Tamarac! This beautiful and spacious villa features volume ceilings…",
            "photos": [
                "https://ssl.cdn-redfin.com/photo/107/islphoto/049/genIslnoResize.F10430049_0.jpg",
                "https://ssl.cdn-redfin.com/photo/107/islphoto/049/genIslnoResize.F10430049_1_1.jpg"
            ]
        },
        …
    ]
}

As you can see, using an API to retrieve data is much easier and faster. Additionally, this approach allows you to get all the possible data from the page without having to extract it manually.
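To process or save the listings from a response shaped like the one above, one option is to flatten each entry of the `properties` array into a CSV row. The sketch below works on a trimmed copy of the sample response rather than a live request. The column selection and the function names `listing_rows` and `to_csv` are illustrative assumptions.

```python
import csv
import io

FIELDS = ["url", "price", "street", "city", "state", "zipcode", "beds", "baths", "area"]


def listing_rows(payload):
    """Build one flat row per entry in the response's "properties" array."""
    rows = []
    for item in payload.get("properties", []):
        address = item.get("address") or {}
        rows.append({
            "url": item.get("url"),
            "price": item.get("price"),
            "street": address.get("street"),
            "city": address.get("city"),
            "state": address.get("state"),
            "zipcode": address.get("zipcode"),
            "beds": item.get("beds"),
            "baths": item.get("baths"),
            "area": item.get("area"),
        })
    return rows


def to_csv(rows):
    """Serialize the rows to CSV text; write it to a file if you need one on disk."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Trimmed copy of the sample response shown above.
payload = {
    "requestMetadata": {"status": "ok"},
    "properties": [
        {
            "url": "https://www.redfin.com/FL/Tamarac/7952-Exeter-Blvd-W-33321/unit-101/home/41970890",
            "price": 397900,
            "address": {
                "street": "7952 W Exeter Blvd W #101",
                "city": "Tamarac",
                "state": "FL",
                "zipcode": "33321",
            },
            "beds": 2,
            "baths": 2,
            "area": 1692,
        }
    ],
}

rows = listing_rows(payload)
csv_text = to_csv(rows)
print(csv_text)
```

In the script above, `payload` would simply be the result of `response.json()`.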

Scrape Properties using Redfin API

Now, let’s collect data using another endpoint (result in Google Colaboratory) that allows you to retrieve data from the page of a specific property. This can be useful if, for example, you have a list of listings and you need to collect data from all of them quickly.

The entire script will be very similar to the previous one, with only the request parameters and the endpoint changing. Here is the resulting code:

import requests
import json

api_key = "YOUR-API-KEY"

params = {
    "url": "https://www.redfin.com/IL/Chicago/1322-S-Prairie-Ave-60605/unit-1106/home/12694628"
}

url = "https://api.hasdata.com/scrape/redfin/property"

headers = {
    "x-api-key": api_key
}

response = requests.get(url, params=params, headers=headers)

if response.status_code == 200:
    properties = response.json()
    # Save only when the request succeeded, so `properties` is always defined here
    with open("properties.json", "w") as json_file:
        json.dump(properties, json_file, indent=4)
else:
    print("Failed to retrieve the property. Status code:", response.status_code)

The API will return all available data for the specified property:

{
    "requestMetadata": {
        "id": "03278480-307e-4ad6-907e-8482e6bc99ae",
        "status": "ok",
        "html": "https://f005.backblazeb2.com/file/hasdata-screenshots/03278480-307e-4ad6-907e-8482e6bc99ae.html",
        "url": "https://www.redfin.com/IL/Chicago/1322-S-Prairie-Ave-60605/unit-1106/home/12694628"
    },
    "property": {
        "id": 184291096,
        "propertyId": 12694628,
        "url": "https://www.redfin.com/IL/Chicago/1322-S-Prairie-Ave-60605/unit-1106/home/12694628",
        "image": "https://ssl.cdn-redfin.com/photo/68/bigphoto/528/12010528_0.jpg",
        "status": "sold",
        "price": 465000,
        "address": {
            "street": "1322 S Prairie Ave #1106",
            "city": "Chicago",
            "state": "IL",
            "zipcode": "60605"
        },
        "beds": 2,
        "baths": 2,
        "area": 1170,
        "latitude": 41.8651368,
        "longitude": -87.6216764,
        "yearBuilt": 2002,
        "homeType": "Condo/Co-op",
        "description": "Stunning 2 bd/2 bth 11th floor condo with amazing views of the lake & Field Museum. As you enter the unit you're greeted by gorgeous hardwood floors and floor-to-ceiling windows providing tons of natural light and lake views from your couch or private balcony. The open floor plan living area is generous in size. The kitchen features all SS appliances, granite counters and a breakfast bar. The spacious Primary suite has it's own en-suite complete with double sinks, soaking tub and separate shower and a massive walk-in closet. In-unit laundry! The perks of this condo are in the full amenity building including outdoor pool, rooftop sun deck, party room, exercise room, 24 hour security, clubhouse with a grocery store & dry cleaners & doorman and 1 assigned park space plus a walking path and dog park right behind the building. Close to all the action, you are minutes from shopping, restaurants, nightlife, museums, beaches and more. You truly can't beat a condo in Museum Park Tower I! Schedule your tour today. This one won't last long! ",
        "brokerName": "Redfin Corporation",
        "brokerPhoneNumber": "224-699-5002",
        "agentName": "Cesar Juarez",
        "agentPhoneNumber": "773-960-7836",
        "photos": [
            "https://ssl.cdn-redfin.com/photo/68/bigphoto/528/12010528_0.jpg",
            "https://ssl.cdn-redfin.com/photo/68/bigphoto/528/12010528_1_0.jpg",
            "https://ssl.cdn-redfin.com/photo/68/bigphoto/528/12010528_2_0.jpg"
        ]
    }
}

Using the API not only saves you from dealing with proxies and bypassing CAPTCHAs, but it also frees you from constantly updating your code. Even if Redfin changes its page structure, the API handles those changes for you, and you get ready-to-use data in a consistent format. From my own experience, this makes life a lot easier in the long run.
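For the case mentioned at the start of this section, where you already have a list of listing URLs, the single-property request can be wrapped in a loop. The following is a rough sketch under several assumptions: the function names are hypothetical, the endpoint is passed in by the caller (use the property endpoint from the script above), and the demo at the bottom uses a fake fetcher instead of a real API call so it can run offline.

```python
import time


def default_fetch(endpoint, listing_url, api_key):
    """Request one property; same parameters and header as the script above."""
    import requests  # imported here so the loop below can be tried without it

    response = requests.get(
        endpoint,
        params={"url": listing_url},
        headers={"x-api-key": api_key},
        timeout=30,
    )
    response.raise_for_status()
    return response.json()


def fetch_properties(listing_urls, endpoint, api_key, fetch=default_fetch, delay=0.0):
    """Fetch each URL in turn, collecting results and failures separately."""
    results, failures = [], []
    for listing_url in listing_urls:
        try:
            payload = fetch(endpoint, listing_url, api_key)
            # The sample response stores the data under the "property" key.
            results.append(payload.get("property", payload))
        except Exception as error:
            failures.append((listing_url, str(error)))
        time.sleep(delay)  # optional pause between requests
    return results, failures


def fake_fetch(endpoint, listing_url, api_key):
    """Stand-in for the real request, used only for this offline demonstration."""
    if "bad" in listing_url:
        raise ValueError("simulated failure")
    return {"property": {"url": listing_url, "price": 465000}}


results, failures = fetch_properties(
    ["https://www.redfin.com/example/home/1", "https://www.redfin.com/bad/home/2"],
    endpoint="PROPERTY-ENDPOINT",  # placeholder, replace with the real endpoint
    api_key="YOUR-API-KEY",
    fetch=fake_fetch,
)
print(len(results), len(failures))  # 1 1
```

Keeping failures in a separate list means one bad URL does not discard the data already collected, and the failed URLs can be retried later.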

Scrape Data from Redfin Without Code

If you don’t want to deal with coding at all, we’ve got you covered with a simple no-code option. Plus, it’s a safe option since the scraping happens on the service’s side, not your PC. You just get the data, keeping you safe from any potential blocks by Redfin.

Let’s take a closer look at how to use such tools using the example of HasData’s Redfin no-code scraper. To do this, sign up on our website and go to your account.

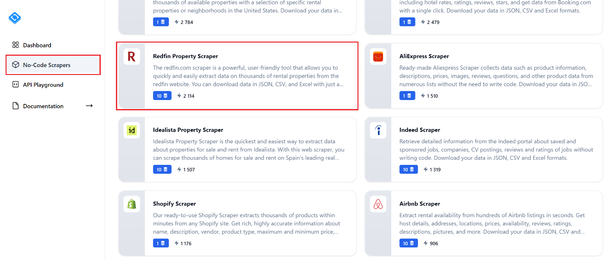

Navigate to the “No-Code Scrapers” tab and locate the “Redfin Property Scraper”. Click on it to proceed to the scraper’s page.

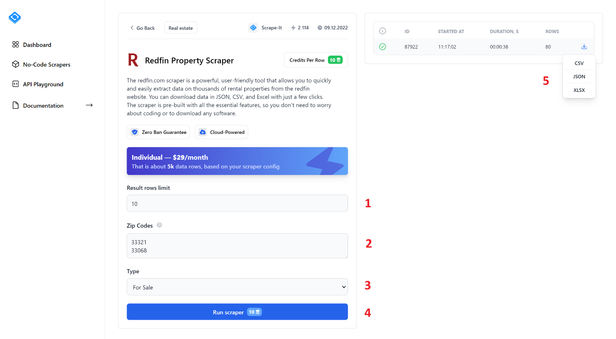

To extract data, fill in all fields and click the “Run Scraper” button. Let’s take a closer look at the no-code scraper elements:

- Result rows limit. Specify the number of listings you want to scrape.

- Zip Codes. Enter the zip codes from which you want to extract data. You can enter multiple Zip Codes, each on a new line.

- Type. Select the listing type: For Sale, For Rent, or Sold.

- Run Scraper. Click this button to start the scraping process.

After starting the scraper, you will see the progress bar and scraping results in the right-hand pane. Once finished, you can download the results in one of the available formats: CSV, JSON, or XLSX.

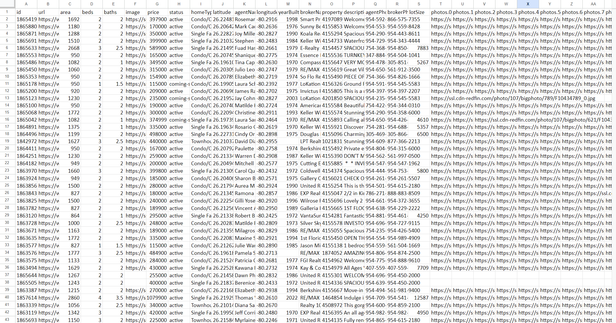

As a result, you will receive a file containing all the data that was collected based on your request. For example, the result may look like this:

The screenshot only shows a few of the columns, as there is a large amount of data. But you can find a full example on the Redfin Scraper page. Overall, using no-code web scraping tools can significantly streamline the process of collecting data from websites, making it accessible to a wide range of users without requiring coding expertise.

Conclusion

In this article, we’ve covered the most popular methods for gathering real estate data from Redfin, using tools like Beautiful Soup and Selenium to easily extract details like prices, addresses, and property features. You can also check out our Zillow scraping tutorial for more real estate insights.

All scripts are available in Google Colaboratory, so you can run them without installing Python (except the headless browser example, which doesn’t work in the cloud).