CSS selectors are patterns that match elements in an HTML document by tag name, class, ID, attributes, or position in the DOM. Originally designed for stylesheets, the same selector syntax drives web scraping and test automation. Most tools that parse HTML support it.

Every time I scrape a new site, the first thing I reach for is a CSS selector. Not XPath, not regex. But I kept hitting edge cases: attribute matching quirks, pseudo-class behavior differences across scraping libraries, and dynamic class names that break overnight.

This CSS selectors cheat sheet covers every selector type, from CSS3 basics like #id and .class to Selectors Level 4 pseudo-classes like :has() and :is(), with code examples for BeautifulSoup, Scrapy, Selenium, Playwright, and PHP:

- 5 quick-reference tables covering all CSS selector categories with syntax and examples

- Python and PHP code for BeautifulSoup, Scrapy, Selenium, Playwright, and Symfony’s css-selector

- Modern CSS4 selectors (:has(), :is(), :where()) that most cheat sheets still ignore

- A library support comparison table showing which selectors work in which tool

- Resilient selector patterns for handling dynamic class names in React and Vue sites

- A DevTools workflow for finding and testing selectors before writing any code

Prerequisites: basic HTML knowledge and Python 3.8+. For the scraping examples, install BeautifulSoup with pip install beautifulsoup4 requests.

```python
from bs4 import BeautifulSoup
import requests

response = requests.get("https://books.toscrape.com/", timeout=10)
soup = BeautifulSoup(response.text, "html.parser")

# Select all book titles using a CSS selector
titles = soup.select("article.product_pod h3 a")
for title in titles[:5]:
    print(title["title"])
```

```text
A Light in the Attic
Tipping the Velvet
Soumission
Sharp Objects
Sapiens: A Brief History of Humankind
```

Quick-reference CSS selectors cheat sheet

Basic selectors

These are the selectors you’ll use in 90% of scraping tasks. The * universal selector is rarely useful for data extraction, but the other four are essential.

| Selector | Syntax | Example | What it matches |

|---|---|---|---|

| Universal | * | * | Every element on the page |

| Type (tag) | element | p | All <p> elements |

| Class | .classname | .price | All elements with class="price" |

| ID | #idname | #nav | The element with id="nav" |

| Grouping | sel1, sel2 | h1, h2, h3 | All <h1>, <h2>, and <h3> elements |

Using #id selectors for scraping product listings is a common mistake. IDs are unique per page. They work for single elements like a page title, not for repeated items like product cards. Use .class or attribute selectors for those.
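To make the ID-versus-class distinction concrete, here is a minimal sketch on a hypothetical HTML fragment (the markup and names are made up for illustration, not taken from a real site). It uses an ID for the single page title, a class for the repeated prices, and grouping to collect two tag types at once.

```python
from bs4 import BeautifulSoup

# Hypothetical markup: one page title (unique ID) and repeated product cards
html = """
<h1 id="page-title">Books</h1>
<div class="card"><span class="price">£10.00</span></div>
<div class="card"><span class="price">£12.50</span></div>
<p class="note">Prices include tax.</p>
"""
soup = BeautifulSoup(html, "html.parser")

# ID selector: fine for an element that appears once per page
title = soup.select_one("#page-title").get_text()

# Class selector: the right tool for repeated items
prices = [el.get_text() for el in soup.select(".price")]

# Grouping: matches come back in document order
grouped_tags = [el.name for el in soup.select("h1, p")]

print(title, prices, grouped_tags)
```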

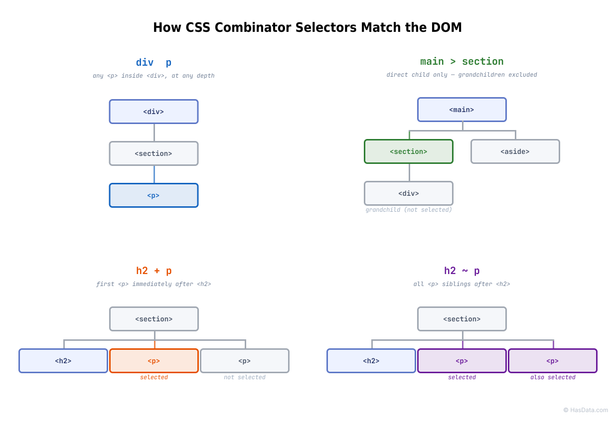

Combinator selectors

Combinators describe the relationship between two selectors. The descendant selector (space) is the most common in scraping. It lets you drill into nested HTML without specifying every intermediate tag.

| Combinator | Syntax | Example | What it matches |

|---|---|---|---|

| Descendant | A B | div p | All <p> inside any <div> (any nesting depth) |

| Child | A > B | ul > li | Only direct <li> children of <ul> |

| Adjacent sibling | A + B | h2 + p | The first <p> immediately after an <h2> |

| General sibling | A ~ B | h2 ~ p | All <p> elements after an <h2> at the same level |

Using div > p (child combinator) breaks when the <p> is actually nested two levels deep inside a wrapper <span>. If your selector returns nothing, switch from > to a space (descendant) and narrow down from there.
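The difference between the child and descendant combinators is easy to see on a small fragment. This is a sketch with hypothetical markup, where one <p> sits inside a wrapper element and another is a direct child of the <div>.

```python
from bs4 import BeautifulSoup

# Hypothetical markup: one <p> nested in a wrapper, one direct child
html = """
<div class="product">
  <section class="wrapper">
    <p class="desc">Nested description</p>
  </section>
  <p class="summary">Direct child</p>
</div>
"""
soup = BeautifulSoup(html, "html.parser")

# Child combinator: only <p> elements directly under div.product
direct = [p["class"][0] for p in soup.select("div.product > p")]

# Descendant combinator: <p> elements at any depth under div.product
anywhere = [p["class"][0] for p in soup.select("div.product p")]

print(direct)    # ['summary']
print(anywhere)  # ['desc', 'summary']
```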

Attribute selectors

Attribute selectors target what class names don’t cover: data-* attributes, href values, and custom properties.

| Selector | Syntax | Example | What it matches |

|---|---|---|---|

| Has attribute | [attr] | [href] | Elements that have an href attribute |

| Exact value | [attr=val] | [type="text"] | Elements where type is exactly "text" |

| Starts with | [attr^=val] | [href^="/product"] | Links starting with /product |

| Ends with | [attr$=val] | [href$=".pdf"] | Links ending with .pdf |

| Contains | [attr*=val] | [class*="price"] | Elements with "price" anywhere in class |

| Space-separated | [attr~=val] | [class~="featured"] | Elements with "featured" as a whole word in class |

| Hyphen-separated | [attr\|=val] | [lang\|="en"] | Elements where lang is "en" or starts with "en-" |

The [attr*=val] (contains) selector is the one I rely on most for scraping. When a site uses dynamic class names like price_a1b2c3, the selector [class*="price"] catches them all regardless of the hash suffix. I’ll show more resilient selector strategies later in this article.
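The operators behave differently enough on multi-class elements that it is worth trying them side by side. The sketch below uses hypothetical markup and a small helper (texts is an illustrative name). Note that ^= and *= test the whole class attribute string, so a prefix match fails when another class is listed first.

```python
from bs4 import BeautifulSoup

# Hypothetical markup with hashed class names and a false-positive candidate
html = """
<a href="/product/1">Book</a>
<a href="/files/catalog.pdf">Catalog</a>
<span class="price_a1b2c3">£10</span>
<span class="card priceless">Rare</span>
<span class="badge price_x7y8z9">£12</span>
"""
soup = BeautifulSoup(html, "html.parser")

def texts(selector):
    """Return the text of every element matched by the selector."""
    return [el.get_text() for el in soup.select(selector)]

starts = texts('[href^="/product"]')    # ['Book']
ends = texts('[href$=".pdf"]')          # ['Catalog']
contains = texts('[class*="price"]')    # ['£10', 'Rare', '£12'] - "priceless" sneaks in
tighter = texts('[class*="price_"]')    # ['£10', '£12']
prefix = texts('[class^="price_"]')     # ['£10'] - misses "badge price_x7y8z9"
```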

Pseudo-class selectors

Pseudo-classes filter elements by their state or position in the Document Object Model (DOM). I use :nth-child(), :first-child, and :not() constantly for filtering scraped data. The rest are situational. The :has(), :is(), and :where() pseudo-classes come from Selectors Level 4 (often called “CSS4” informally, though W3C no longer versions CSS as a whole). Most cheat sheets still miss them. Note that :is() and :where() differ only in specificity: :is() takes the specificity of its most specific argument, while :where() always has zero specificity. This distinction matters in stylesheets but not in scraping or automation.

| Selector | Syntax | Example | What it matches |

|---|---|---|---|

| First child | :first-child | li:first-child | The first <li> in its parent |

| Last child | :last-child | li:last-child | The last <li> in its parent |

| Nth child | :nth-child(n) | tr:nth-child(2n) | Even-numbered table rows |

| Nth last child | :nth-last-child(n) | li:nth-last-child(1) | The last <li> (counting from the end) |

| Nth of type | :nth-of-type(n) | p:nth-of-type(2) | The second <p> among its siblings (ignores other tags) |

| Not | :not(sel) | div:not(.ad) | All <div> elements except those with class ad |

| Empty | :empty | td:empty | Table cells with no content |

| First of type | :first-of-type | p:first-of-type | The first <p> among its siblings |

| Hover | :hover | a:hover | Links being hovered (browser automation only) |

| Focus | :focus | input:focus | The currently focused element (browser automation only) |

| Checked | :checked | input:checked | Checked checkboxes or radio buttons |

| Has (CSS4) | :has(sel) | div:has(> img) | <div> elements containing a direct child <img> |

| Is (CSS4) | :is(sel) | :is(.sidebar, .content) p | All <p> inside .sidebar or .content (forgiving selector list) |

| Where (CSS4) | :where(sel) | :where(.a, .b) p | Like :is() but with zero specificity |

The :has() selector fills the biggest gap CSS had for scraping. Before :has(), CSS had no way to select a parent based on its children. If you needed “the <div> that contains a price,” you had to use XPath’s //div[.//span[@class='price']]. Now div:has(span.price) does the same thing in CSS.

:has() works in all modern browsers, in Python’s soupsieve library (BeautifulSoup 4.7+), and in Scrapy’s parsel via cssselect 1.2.0+ (October 2022). One caveat: cssselect doesn’t allow nesting :has() inside :has() or :not() inside :has(). Those patterns will raise SelectorSyntaxError.
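Here is a short sketch of the pseudo-classes I reach for most, run through BeautifulSoup (soupsieve) on a hypothetical list. The markup is made up for illustration.

```python
from bs4 import BeautifulSoup

# Hypothetical product list: two priced items, one ad, one without a price
html = """
<ul>
  <li class="item"><span class="price">£5</span> Pen</li>
  <li class="item ad">Sponsored</li>
  <li class="item"><span class="price">£9</span> Notebook</li>
  <li class="item">Out of stock</li>
</ul>
"""
soup = BeautifulSoup(html, "html.parser")

# :has() - select the parent <li> based on what it contains
with_price = soup.select("li:has(span.price)")

# :not() - drop the ad entries
not_ads = soup.select("li.item:not(.ad)")

# :nth-child() - counts element children only, whitespace is ignored
second = soup.select_one("li:nth-child(2)")

# :is() - match any selector in the list
either = soup.select(":is(li.ad, li:last-child)")

print(len(with_price), len(not_ads), second.get_text())
```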

Pseudo-element selectors

Pseudo-elements target specific parts of an element rather than the element itself. They’re less common in scraping because ::before and ::after content is CSS-generated and doesn’t appear in the raw HTML.

| Selector | Syntax | Example | What it targets |

|---|---|---|---|

| Before | ::before | p::before | Generated content before <p> |

| After | ::after | p::after | Generated content after <p> |

| First line | ::first-line | p::first-line | The first rendered line of <p> |

| First letter | ::first-letter | p::first-letter | The first letter of <p> |

| Placeholder | ::placeholder | input::placeholder | Placeholder text in input fields |

| Selection | ::selection | ::selection | User-highlighted text |

If a site renders prices or labels via ::before / ::after CSS content properties, your HTTP-based scraper won’t see them. That content only exists in the browser’s rendered page, not in the HTML source. You’ll need a browser automation tool like Playwright or Selenium to read it via JavaScript’s getComputedStyle().

CSS selector support by library

Not every selector works in every tool. Browser-based tools (Selenium, Playwright) support everything the browser does. Parsing libraries have gaps.

| Feature | BeautifulSoup 4.7+ | Scrapy (parsel) | Selenium | Playwright | PHP (symfony/css-selector) |

|---|---|---|---|---|---|

| Basic, combinator, attribute | ✅ | ✅ | ✅ | ✅ | ✅ |

| :nth-child(), :not() | ✅ | ✅ | ✅ | ✅ | ✅ |

| :has() | ✅ (soupsieve) | ✅ (cssselect 1.2.0+) | ✅ | ✅ | ❌ |

| :is(), :where() | ✅ (soupsieve) | ✅ (cssselect 1.2.0+) | ✅ | ✅ | ❌ |

| ::text, ::attr() | ❌ | ✅ (parsel extension) | ❌ | ❌ | ❌ |

Check this table before writing selectors for a specific library. A :has() selector that works in BeautifulSoup will fail silently or throw an error in Symfony’s CssSelectorConverter.

How to use CSS selectors for web scraping

The selector string is the same across Python and PHP libraries. In Python, use soup.select("div.price") in BeautifulSoup, response.css("div.price::text") in Scrapy, or driver.find_element(By.CSS_SELECTOR, "div.price") in Selenium. In PHP, Symfony’s css-selector package converts the same CSS string to XPath. The difference is how each library returns and processes the matched elements.

Finding selectors with Chrome DevTools

Before writing any scraping code, I always test selectors in the browser first. Three steps:

- Right-click the element you want and select Inspect (or press Ctrl+Shift+C to activate element picker mode)

- Examine the HTML structure. Note the tag name, classes, and any data-* attributes

- Test your selector in Console. Press Esc to open the console drawer, then run:

```javascript
// Returns all matching elements as an array
// $$() is a DevTools shorthand for document.querySelectorAll()
$$("article.product_pod h3 a")

// Returns just the first match (same as document.querySelector())
$("article.product_pod h3 a")

// Count matches to verify you're getting the expected number
$$("article.product_pod h3 a").length
```

Don’t rely on Chrome’s Copy → Copy selector feature for scraping. It generates brittle paths like #default > div > div > div:nth-child(1) > ol > li:nth-child(1) > article > h3 > a that break the moment a page layout changes. Write your own selector targeting stable attributes instead. The MDN CSS Selectors reference is useful when you need to double-check syntax for less common selectors.

BeautifulSoup: soup.select() and soup.select_one()

BeautifulSoup’s select() method accepts any CSS selector string and returns a list of matching Tag objects. select_one() returns only the first match or None if nothing matches.

```python
from bs4 import BeautifulSoup
import requests

response = requests.get("https://books.toscrape.com/", timeout=10)

# Pass raw bytes so BeautifulSoup detects the encoding from the page itself
# (avoids a garbled "£" when the server sends no charset header)
soup = BeautifulSoup(response.content, "html.parser")

# Select all product cards on the page
books = soup.select("article.product_pod")

for book in books[:3]:
    # select_one() scopes the search within each card
    title = book.select_one("h3 a")["title"]
    price = book.select_one(".price_color").text
    availability = book.select_one(".availability").text.strip()
    print(f"{title} - {price} - {availability}")
```

```text
A Light in the Attic - £51.77 - In stock
Tipping the Velvet - £53.74 - In stock
Soumission - £50.10 - In stock
```

Here’s how selector categories from the cheat sheet map to common BeautifulSoup patterns:

```python
# Attribute selector - all links pointing to product pages
product_links = soup.select('a[href^="catalogue/"]')

# Combinator - prices inside product cards only (skip sidebar prices)
card_prices = soup.select("article.product_pod .price_color")

# Pseudo-class - first book in the list
# (each card is wrapped in its own <li>, so :first-child goes on the <li>)
first_book = soup.select_one("li:first-child > article.product_pod h3 a")

# :not() with complex selector - requires Selectors Level 4 (soupsieve supports it)
content_links = soup.select("a:not(nav a)")

# CSS4 :has() - divs that contain a direct child image (BeautifulSoup 4.7+)
image_containers = soup.select("div:has(> img)")
```

One thing that tripped me up early on: soup.select(".price_color") returns Tag objects, not strings. You need .text or .get_text() to extract visible text, and ["href"] or .get("href") for attribute values. Calling ["href"] on a tag without that attribute raises a KeyError. Use .get("href") for safer access when the attribute might be missing. The same applies to select_one(): it returns None when nothing matches, so calling .text on the result without a check raises AttributeError. In production code, always guard with if element: or use a pattern like element.text if element else None.
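The guard pattern is easy to wrap in two small helpers. This is a sketch, not a BeautifulSoup API: text_of and attr_of are hypothetical names, and the HTML fragment is made up to mimic a product card with a missing availability element.

```python
from typing import Optional

from bs4 import BeautifulSoup, Tag

def text_of(scope: Tag, selector: str) -> Optional[str]:
    """Return the stripped text of the first match, or None if nothing matches."""
    element = scope.select_one(selector)
    return element.get_text(strip=True) if element is not None else None

def attr_of(scope: Tag, selector: str, name: str) -> Optional[str]:
    """Return an attribute of the first match, or None if either is missing."""
    element = scope.select_one(selector)
    return element.get(name) if element is not None else None

# Hypothetical card without an availability element
html = """
<article class="product_pod">
  <h3><a href="book_1.html" title="Example Book">Example...</a></h3>
  <p class="price_color">£10.00</p>
</article>
"""
card = BeautifulSoup(html, "html.parser").select_one("article.product_pod")

print(text_of(card, ".price_color"))     # £10.00
print(text_of(card, ".availability"))    # None instead of AttributeError
print(attr_of(card, "h3 a", "title"))    # Example Book
print(attr_of(card, "h3 a", "data-id"))  # None instead of KeyError
```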

Scrapy CSS selectors (response.css())

Scrapy uses the parsel library under the hood, which adds two pseudo-element extensions you won’t find in browser CSS: ::text extracts text content and ::attr(name) extracts an attribute value.

```python
import scrapy


class BookSpider(scrapy.Spider):
    name = "books"
    start_urls = ["https://books.toscrape.com/"]

    def parse(self, response):
        for book in response.css("article.product_pod"):
            yield {
                # ::attr() extracts an attribute value directly
                "title": book.css("h3 a::attr(title)").get(),
                "url": book.css("h3 a::attr(href)").get(),
                # ::text extracts the text content of the element
                "price": book.css(".price_color::text").get(),
            }
```

The key difference from BeautifulSoup: ::text and ::attr() are parsel extensions, not standard CSS. Writing soup.select("h3::text") in BeautifulSoup raises a SelectorSyntaxError. Use the .text property on Tag objects instead.

Scrapy also supports chaining CSS selectors with .css() calls and combining CSS with XPath via .xpath(). Here’s a more complete spider that follows pagination links:

```python
import scrapy


class BookSpiderPaginated(scrapy.Spider):
    name = "books_paginated"
    start_urls = ["https://books.toscrape.com/"]

    def parse(self, response):
        for book in response.css("article.product_pod"):
            # Combine ::attr() with attribute selectors
            star_class = book.css("p.star-rating::attr(class)").get()
            rating = star_class.split()[-1] if star_class else "Unknown"

            yield {
                "title": book.css("h3 a::attr(title)").get(),
                "price": book.css(".price_color::text").get(),
                "rating": rating,
                # SelectorList is truthy when non-empty, falsy when empty
                "in_stock": bool(book.css(".availability .icon-ok")),
            }

        # Follow the "next" pagination link if it exists
        next_page = response.css("li.next a::attr(href)").get()
        if next_page:
            yield response.follow(next_page, callback=self.parse)
```

This pattern (extract data + follow pagination) is the most common Scrapy workflow. The response.follow() method handles relative URLs automatically, so you don’t need urljoin().
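To see what that relative URL handling amounts to, here is a rough standard-library sketch. It resolves a relative href against the URL of the page it was found on, which is approximately what response.follow() does for you. The href values are illustrative rather than copied from the live site.

```python
from urllib.parse import urljoin

# A relative "next" link found on the start page
first = urljoin("https://books.toscrape.com/", "catalogue/page-2.html")

# The same kind of link found one level deeper resolves against that page's URL
second = urljoin("https://books.toscrape.com/catalogue/page-2.html", "page-3.html")

print(first)   # https://books.toscrape.com/catalogue/page-2.html
print(second)  # https://books.toscrape.com/catalogue/page-3.html
```

If you extract links with BeautifulSoup and requests instead of Scrapy, this is the step you have to do yourself.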

Using CSS selectors in PHP

PHP’s DOMXPath doesn’t support CSS selectors natively, but the symfony/css-selector package converts CSS to XPath behind the scenes. Install it with composer require symfony/css-selector.

```php
<?php
require 'vendor/autoload.php';

use Symfony\Component\CssSelector\CssSelectorConverter;

// Requires allow_url_fopen=On in php.ini; use cURL if disabled
$html = file_get_contents('https://books.toscrape.com/');

$doc = new DOMDocument();
libxml_use_internal_errors(true);
$doc->loadHTML($html);
libxml_clear_errors();

$xpath = new DOMXPath($doc);

// Convert any CSS selector to its XPath equivalent
$converter = new CssSelectorConverter();
$xpathQuery = $converter->toXPath('article.product_pod h3 a');

$nodes = $xpath->query($xpathQuery);
foreach ($nodes as $node) {
    echo $node->getAttribute('title') . "\n";
}
```

The converter handles CSS Selectors Level 3 (tag, class, ID, attribute, combinator, and structural pseudo-class selectors). It does not support Selectors Level 4 additions like :has(), :is(), or :where(). See the library support table above for a full comparison.

Handling dynamic classes and fragile selectors

Modern frontend frameworks (React, Vue, Angular) and CSS-in-JS libraries like styled-components generate class names that change on every build. The class price_a1b2c3 today becomes price_x7y8z9 after the next deployment. Your scraper silently returns empty results.

Here’s what these dynamic class names look like in practice across different frameworks:

| Framework / Tool | Class name pattern | Example |

|---|---|---|

| CSS Modules (React/Vue) | ComponentName_className_hash | ProductCard_price_a1b2c3 |

| styled-components | sc-randomHash + randomHash | sc-dkzDqe gYvMKx |

| Tailwind (JIT) | Utility classes (stable) | text-lg font-bold mt-4 |

| Angular | _ngcontent-xyz-123 host attribute | <div _ngcontent-app-c42> |

| Vue (scoped CSS) | data-v-hash attribute | <p data-v-7ba5bd90> |

Tailwind sites are actually scraper-friendly because utility classes are deterministic. The rest generate random hashes that change on every build.

Three strategies I use to write selectors that survive class name changes:

1. Partial attribute matching:

```python
# Fragile - breaks when the hash suffix changes:
soup.select(".ProductCard_price_a1b2c3")

# Resilient - matches any class containing "price":
soup.select('[class*="price"]')

# Even better - match the semantic prefix
# (^= tests the whole class attribute, so this class must be listed first):
soup.select('[class^="ProductCard_price"]')

# Be careful: [class*="price"] also matches "no-price", "priceless", etc.
# Use [class*="price_"] or [class^="price"] for tighter matching when possible.
```

2. Target data attributes:

Many sites add data-* attributes for testing or analytics. These are intentional and rarely change between deploys.

```python
# Stable: data attributes are added on purpose and maintained
soup.select('[data-testid="product-price"]')
soup.select('[data-product-id]')
```

3. Structural selectors as a last resort:

```python
# When classes are completely random, fall back to page structure
soup.select("main > section:nth-child(2) article h3")
```

Structural selectors are fragile. They break when the layout changes. I treat them as a last resort and always add a length check to catch silent failures:

```python
results = soup.select("main > section:nth-child(2) article h3")
if not results:
    raise ValueError("Selector returned no results - page structure may have changed")
```

If a site’s selectors are so dynamic that none of these approaches work reliably, the problem is usually the anti-bot layer rather than the selectors themselves. HasData’s Web Scraping API handles JavaScript rendering and anti-bot evasion, returning fully rendered HTML where your selectors only need to match the data structure, not fight the obfuscation.
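The three strategies above can also be combined into a fallback chain that tries the most stable selector first. This is a minimal sketch under stated assumptions: select_with_fallback and PRICE_SELECTORS are hypothetical names, and the selectors and markup are illustrative, not taken from a real site.

```python
from bs4 import BeautifulSoup

# Ordered from most stable to least stable (all three are illustrative)
PRICE_SELECTORS = [
    '[data-testid="product-price"]',  # 1. data attribute
    '[class*="price_"]',              # 2. partial class match
    "main article span",              # 3. structural last resort
]

def select_with_fallback(soup, selectors):
    """Return (selector, matches) for the first selector that matches anything."""
    for selector in selectors:
        matches = soup.select(selector)
        if matches:
            return selector, matches
    raise ValueError("No selector matched - page structure may have changed")

# Hypothetical page with a hashed class name and no data attribute
html = '<main><article><span class="ProductCard_price_x7y8z9">£19.99</span></article></main>'
soup = BeautifulSoup(html, "html.parser")

used, matches = select_with_fallback(soup, PRICE_SELECTORS)
print(used, matches[0].get_text())
```

Logging which selector actually matched is worth doing in practice: a run that starts relying on the structural fallback is an early warning that the page changed.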

CSS selectors for automation (Selenium & Playwright)

CSS selectors in Selenium (Python)

Selenium locates elements with By.CSS_SELECTOR. The selector syntax is identical to what you’d write in a browser console or BeautifulSoup. The only difference is the API wrapper.

```python
from selenium import webdriver
from selenium.webdriver.common.by import By

driver = webdriver.Chrome()
driver.get("https://quotes.toscrape.com/")

# find_elements returns a list of all matches
quotes = driver.find_elements(By.CSS_SELECTOR, "span.text")
for quote in quotes[:3]:
    print(quote.text)

# Attribute selector to find the login link
login = driver.find_element(By.CSS_SELECTOR, 'a[href="/login"]')
print(f"Login URL: {login.get_attribute('href')}")

driver.quit()
```

```text
"The world as we have created it is a process of our thinking..."
"It is our choices, Harry, that show what we truly are..."
"There are only two ways to live your life..."
Login URL: https://quotes.toscrape.com/login
```

find_element (singular) doesn’t wait for the element to appear. It throws NoSuchElementException immediately if the element isn’t in the DOM yet. For pages with dynamic content, combine your CSS selector with an explicit wait:

```python
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC

# Assumes `driver` is an open WebDriver session, as in the previous example
# Wait up to 10 seconds for at least one matching element to appear
element = WebDriverWait(driver, 10).until(
    EC.presence_of_element_located((By.CSS_SELECTOR, "span.text"))
)
```

CSS selectors in Playwright (Python)

Playwright’s locator() API is shorter than Selenium’s find_element(By.CSS_SELECTOR, ...) (no By constant needed), and it handles auto-waiting out of the box.

```python
from playwright.sync_api import sync_playwright

with sync_playwright() as p:
    browser = p.chromium.launch()
    page = browser.new_page()
    page.goto("https://quotes.toscrape.com/")

    # locator() accepts CSS selectors directly
    quotes = page.locator("span.text").all()
    for quote in quotes[:3]:
        print(quote.inner_text())

    # Attribute selector - same syntax as browser CSS
    login_href = page.locator('a[href="/login"]').get_attribute("href")
    print(f"Login path: {login_href}")

    browser.close()
```

```text
"The world as we have created it is a process of our thinking..."
"It is our choices, Harry, that show what we truly are..."
"There are only two ways to live your life..."
Login path: /login
```

Since Playwright runs a real browser engine, it supports the Selectors Level 4 pseudo-classes that the browser itself implements, including :has(), :is(), and :where(). Here’s :has() in action, selecting only the product cards that have an “In stock” badge:

```python
from playwright.sync_api import sync_playwright

with sync_playwright() as p:
    browser = p.chromium.launch()
    page = browser.new_page()
    page.goto("https://books.toscrape.com/")

    # :has() selects parent elements based on children
    in_stock_books = page.locator("article.product_pod:has(.availability .icon-ok)")
    print(f"Books in stock: {in_stock_books.count()}")

    # Combine :has() with other selectors to narrow results:
    # prices of in-stock books only
    in_stock_prices = page.locator(
        "article.product_pod:has(.availability .icon-ok) .price_color"
    )
    for i in range(min(3, in_stock_prices.count())):
        print(in_stock_prices.nth(i).inner_text())

    browser.close()
```

```text
Books in stock: 20
£51.77
£53.74
£50.10
```

Playwright can chain different selector engines in a single query. The older >> syntax (css=div.container >> text=Submit) still works, but current Playwright docs (1.40+) prefer .locator().locator() chaining: page.locator("div.container").locator("text=Submit"). Both approaches work across Shadow DOM boundaries. Selenium can access shadow roots via the shadow_root property, but not through CSS selector piercing. BeautifulSoup doesn’t see Shadow DOM at all since it only parses the initial HTML.

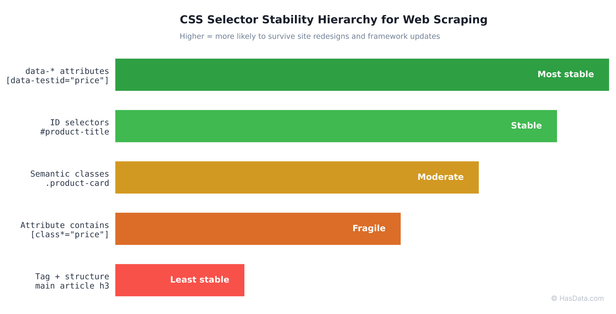

Best practices for stable selectors

After maintaining scrapers across e-commerce catalogs, real estate listings, and job boards, I follow this selector hierarchy, ordered from most stable to least:

| Priority | Selector type | Example | Why it’s stable |

|---|---|---|---|

| 1 | Data attributes | [data-testid="price"] | Added intentionally by developers, rarely change |

| 2 | ID | #product-title | Unique per page, fast browser lookup |

| 3 | Semantic class | .product-card | Meaningful names signal intentional use |

| 4 | Attribute contains | [class*="price"] | Survives hash-based class name changes |

| 5 | Tag + structure | main article h3 | Last resort, layout changes break it |

Avoid :nth-child() in production scrapers unless the data is inherently ordered (like table rows or ranked lists). Layout changes shift child indices and silently return the wrong data. That’s worse than returning nothing because you won’t notice until downstream processing breaks.
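One way to catch that kind of silent drift is to validate the shape of what a positional selector returns, not just whether it returned something. The sketch below assumes prices look like £12.34. PRICE_PATTERN and extract_prices are hypothetical names, and the table markup is made up for illustration.

```python
import re

from bs4 import BeautifulSoup

# Assumed price format for this example: pound sign, digits, two decimals
PRICE_PATTERN = re.compile(r"^£\d+\.\d{2}$")

def extract_prices(soup, selector):
    """Select elements and fail loudly if any value does not look like a price."""
    values = [el.get_text(strip=True) for el in soup.select(selector)]
    bad = [value for value in values if not PRICE_PATTERN.match(value)]
    if not values or bad:
        raise ValueError(
            f"Selector {selector!r} returned unexpected values: {bad or 'nothing'}"
        )
    return values

html = """
<table>
  <tr><td>A Light in the Attic</td><td>£51.77</td></tr>
  <tr><td>Tipping the Velvet</td><td>£53.74</td></tr>
</table>
"""
soup = BeautifulSoup(html, "html.parser")

prices = extract_prices(soup, "td:nth-child(2)")
print(prices)

# If a column were inserted and the index shifted, the same call would now
# hit the title column and raise instead of returning wrong data:
# extract_prices(soup, "td:nth-child(1)")  -> ValueError
```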

Conclusion

CSS selectors cover the majority of data extraction tasks in web scraping and test automation. The five selector categories in this cheat sheet (basic, combinator, attribute, pseudo-class, pseudo-element) handle everything from simple class matching to complex parent selection with :has(). The key to writing scrapers that last is choosing selectors by stability: data-* attributes and semantic class names first, structural selectors only as a last resort.

When CSS selectors fall short (text-based matching, upward DOM traversal, complex conditionals), XPath fills the gap.

FAQ

Q: What are the 5 main types of CSS selectors?

Basic selectors (universal *, type, class, ID), combinator selectors (descendant, child, sibling), attribute selectors ([attr=val]), pseudo-class selectors (:hover, :nth-child, :has), and pseudo-element selectors (::before, ::after). Each category targets elements by name, relationship, attribute value, state, or sub-element.

Q: Why use CSS selectors in Selenium instead of XPath?

- Speed: browsers have optimized CSS matching engines built into their rendering pipeline, so CSS selectors are typically at least as fast as XPath in Chrome and Firefox

- Readability: div.product > h3 is shorter and cleaner than //div[@class='product']/h3

- When to use XPath instead: text-based matching (contains(text(), 'Price')) and upward DOM traversal, which CSS cannot do without :has()

Q: How do I find a CSS selector in Chrome DevTools?

Right-click any element and select Inspect to open the Elements panel. You can right-click the highlighted node and choose Copy → Copy selector, but this generates fragile positional selectors. Instead, examine the element’s tag, classes, and data-* attributes, then write your own. Test it in the Console by typing $$("your-selector") to see all matches.

Q: Can CSS selectors select a parent element?

Yes. The :has() pseudo-class (Selectors Level 4, 2022) enables parent selection. div:has(> span.price) matches any <div> directly containing a <span> with class price. Browser support is universal (Chrome 105+, Firefox 121+, Safari 15.4+). In Python, both BeautifulSoup 4.7+ (via soupsieve) and Scrapy’s parsel (via cssselect 1.2.0+) support it. Limitation: cssselect cannot nest :has() inside :has() or :not() inside :has().